Amazon Bedrock Trust and Safety: A Production Checklist for AI Apps

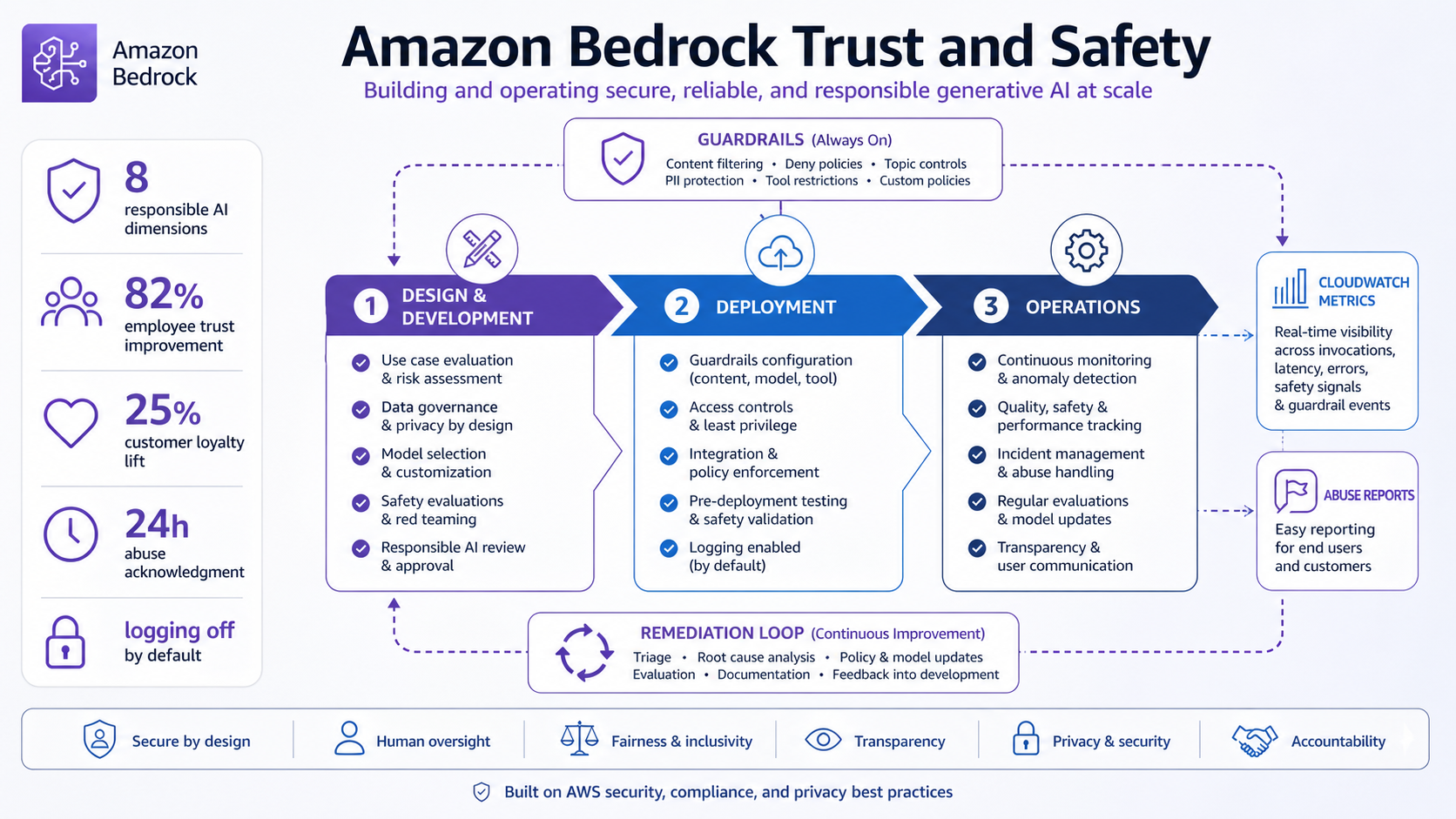

AWS published Bedrock trust-and-safety guidance on April 29, 2026, and two numbers should catch every AI platform team’s attention: AWS cites an 82% improvement in employee trust when organizations communicate mature responsible AI practices, and a 25% increase in customer loyalty and satisfaction for responsible AI-enabled products and services.

Those are business numbers, not only ethics language. If your Bedrock application is heading to production, trust and safety needs the same operating model as security: design controls, deploy telemetry, watch abuse signals, and run incidents when something goes wrong.

What Changed

AWS frames responsible AI across 8 dimensions: safety, controllability, fairness, explainability, security and privacy, veracity and robustness, governance, and transparency. That is a useful checklist because production failures rarely fit one box. A prompt-injection issue can be security, safety, transparency, and governance at the same time.

The lifecycle has 3 phases: design and development, deployment, and operations. The operations phase matters most for teams that already shipped. Safety is not a launch document. It is metrics, alerts, review, and remediation.

If you already use cross-account Bedrock Guardrails, put those controls inside a larger loop: define misuse, monitor rejection rates, investigate abuse reports, and keep evidence for what changed.

The Numbers That Matter

| Fact | Number or date | Source |
|---|---|---|
| AWS post date | April 29, 2026 | AWS Security Blog |
| Employee trust metric | 82% improvement with mature responsible AI communication | AWS / Accenture research cited by AWS |
| Customer metric | 25% increase in loyalty and satisfaction | AWS / Accenture research cited by AWS |
| Responsible AI dimensions | 8 dimensions | AWS Security Blog |
| Abuse response | acknowledge abuse report within 24 hours | AWS Security Blog |

Those facts are the reason to act on this now, not next quarter. The guidance is recent, the 24-hour acknowledgment window is concrete, and the operational impact is clear enough for an engineer to act on today.

How It Works in Practice

Start with a misuse register. List what the app should not help with, what data it should not reveal, and what user behavior would trigger review. Then map each risk to a control: Bedrock Guardrails, application authorization, content filtering, logging, rate limits, or human review.
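A misuse register can start as a small data structure rather than a document. The sketch below is one possible shape, not something AWS prescribes: the `MisuseEntry` fields, the control identifiers, and the sample risks are all illustrative assumptions. It shows the one check that matters early, which is finding risks that have no control mapped to them.

```python
from dataclasses import dataclass, field

# Control categories taken from the paragraph above.
# The identifier spellings are illustrative, not an AWS naming scheme.
CONTROLS = {
    "bedrock_guardrails",
    "app_authorization",
    "content_filtering",
    "logging",
    "rate_limits",
    "human_review",
}


@dataclass
class MisuseEntry:
    risk: str            # what the app should not do or reveal
    review_trigger: str  # user behavior that should trigger review
    controls: set[str] = field(default_factory=set)


def unmitigated(register: list[MisuseEntry]) -> list[str]:
    """Return risks with no recognized control mapped to them."""
    return [e.risk for e in register if not (e.controls & CONTROLS)]


# Hypothetical entries for illustration only.
register = [
    MisuseEntry(
        "Reveals another tenant's documents",
        "Repeated cross-tenant ID probing",
        {"app_authorization", "logging"},
    ),
    MisuseEntry(
        "Generates self-harm instructions",
        "Repeated guardrail interventions in one session",
        {"bedrock_guardrails", "human_review"},
    ),
    MisuseEntry(
        "Bulk scraping through the chat endpoint",
        "Request volume far above the tenant baseline",
    ),
]

print(unmitigated(register))
```

Keeping the register in code or config means a CI check can fail the build when someone adds a risk without a control.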

AWS specifically calls out CloudWatch metrics such as request volumes, response latencies, rejection rates, and content filtering triggers. Turn those into dashboards before launch. According to the AWS post, Bedrock model invocation logging is off by default, so do not assume you will have forensic data unless you enabled it.
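A dashboard is more useful when someone has decided what counts as abnormal. The sketch below assumes you already export per-window request and rejection counts from your metrics pipeline; the `Window` type, the 2x factor, and the 50-request floor are hypothetical defaults to tune, not AWS recommendations. It compares the current rejection rate against a pooled baseline.

```python
from dataclasses import dataclass


@dataclass
class Window:
    requests: int    # total model requests in the window
    rejections: int  # requests blocked or filtered in the window


def rejection_rate(w: Window) -> float:
    """Fraction of requests rejected; 0.0 for an empty window."""
    return w.rejections / w.requests if w.requests else 0.0


def is_spike(
    current: Window,
    baseline: list[Window],
    factor: float = 2.0,     # assumed threshold multiplier
    min_requests: int = 50,  # assumed floor to ignore tiny samples
) -> bool:
    """True when the current rate exceeds the pooled baseline rate by `factor`."""
    if current.requests < min_requests or not baseline:
        return False
    total_requests = sum(w.requests for w in baseline)
    total_rejections = sum(w.rejections for w in baseline)
    base_rate = total_rejections / total_requests if total_requests else 0.0
    # With a zero baseline, any rejection in a large enough window counts as a spike.
    return rejection_rate(current) > base_rate * factor


baseline = [Window(1000, 20), Window(1200, 24)]  # pooled rate: 2%
print(is_spike(Window(900, 90), baseline))  # 10% against a 4% threshold
print(is_spike(Window(900, 27), baseline))  # 3% against a 4% threshold
```

The request floor is there so that a quiet hour with nine rejections out of ten requests does not page anyone.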

Abuse reports need a clock. AWS says to acknowledge receipt within 24 hours, investigate logs and prompts where available, take action, and report back with findings or remediation. Write that runbook before the first report arrives.
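The 24-hour acknowledgment window is easy to encode so that a ticketing hook or cron job can flag reports that are about to slip. This is a minimal sketch: the function names and status strings are hypothetical, and it assumes timezone-aware timestamps recorded when the report was received.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

ACK_WINDOW = timedelta(hours=24)  # acknowledgment window cited in the AWS post


def ack_deadline(received_at: datetime) -> datetime:
    """Latest time by which receipt of the report should be acknowledged."""
    return received_at + ACK_WINDOW


def ack_status(
    received_at: datetime,
    now: datetime,
    acknowledged_at: Optional[datetime] = None,
) -> str:
    """Classify a report relative to its acknowledgment deadline."""
    deadline = ack_deadline(received_at)
    if acknowledged_at is not None:
        return "acknowledged_on_time" if acknowledged_at <= deadline else "acknowledged_late"
    return "pending" if now <= deadline else "overdue"


received = datetime(2026, 5, 4, 9, 0, tzinfo=timezone.utc)
print(ack_deadline(received).isoformat())
print(ack_status(received, now=received + timedelta(hours=30)))
```

Acknowledgment is only the first step in the runbook; investigation, action, and the follow-up report would each need their own owner and target.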

Bedrock production safety checklist

Design:

- Define allowed use cases and misuse cases.

- Choose Guardrails policies and app-level authorization.

Deploy:

- Enable logging where permitted.

- Build CloudWatch dashboards for volume, latency, rejection rate, and filter triggers.

Operate:

- Review anomalies weekly.

- Acknowledge AWS abuse reports within 24 hours.

- Record remediation and policy changes.

Gotchas I Would Check First

- Model safety defaults are not the same thing as your application safety controls.

- If logging is off, the incident review may have almost no evidence.

- A single abusive user can reveal a systemic product flaw. Do not only block the user and move on.

Decision Guide

| Signal | Immediate question |
|---|---|
| Rejection rate spikes | Did a prompt pattern, tenant, or release change? |
| Latency spikes | Is a guardrail or model path causing retries? |
| Abuse report arrives | Can logs reconstruct the prompt, user, and feature path? |
| User complaints about false blocks | Is the policy too broad or poorly explained? |

For related background, keep these existing BitsLovers posts close: cross-account Bedrock Guardrails, Bedrock AgentCore stateful MCP, secure AI agent access patterns, Bedrock versus other AI platforms, AWS Bedrock AgentCore.

Bedrock safety is not a PDF you attach to an architecture review. It is an operations loop, and the loop needs metrics before production traffic starts.
