DORA Metrics for DevOps Teams: How to Measure and Improve in 2026

I’ll never forget the day my VP of Engineering asked me a simple question: “How are we doing?”

We were three months into a massive digital transformation initiative. Teams were adopting Kubernetes, we’d migrated half our workloads to AWS, and everyone was talking about “DevOps maturity.” I stood in front of the room, ready to deliver my assessment.

“Well,” I said, “the teams feel like they’re moving faster. Our deployment process is smoother. We haven’t had a major outage in months. The vibe is good.”

He nodded slowly. “So we’re doing great?”

“I think so?”

“That’s not good enough,” he said, not unkindly. “I need numbers. I need to know if we’re actually getting better, or if it just feels that way. What should I measure?”

I froze. I’d been so focused on the implementation that I hadn’t thought about the measurement. I muttered something about deployment counts and ticket closures, but I knew I was winging it.

That weekend, I dove into the research and found DORA (DevOps Research and Assessment). The four metrics they identified changed how I thought about DevOps performance. More importantly, they gave me actual numbers to put in front of my VP.

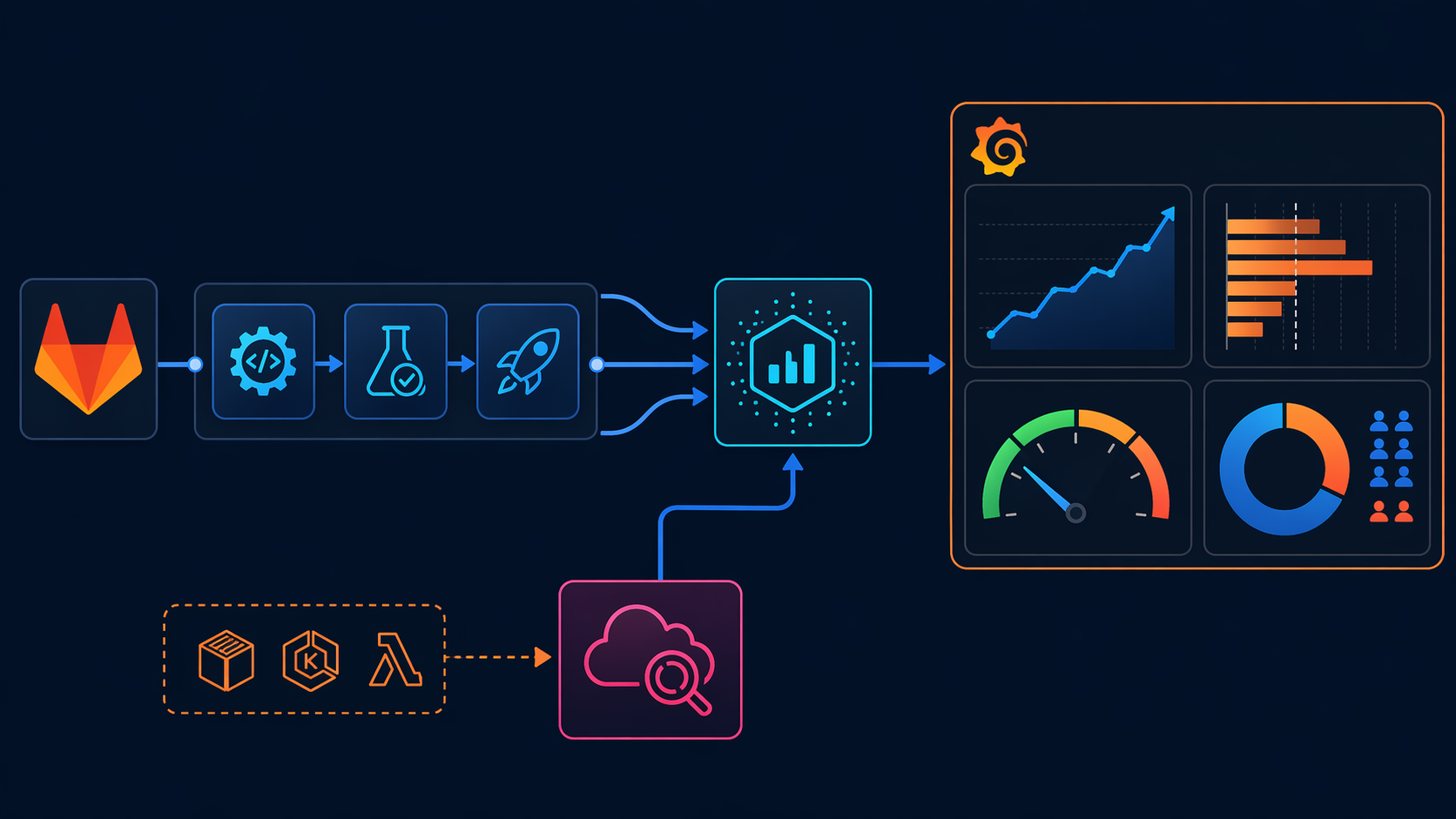

In this post, I’ll walk you through exactly what DORA metrics are, how to measure them using tools you probably already have (GitLab CI, AWS, Grafana), and most importantly, how to improve them. I’ve been implementing these metrics for years, and I’ve learned that the hard part isn’t measurement—it’s using the data to drive actual improvement.

What Are DORA Metrics?

DORA (DevOps Research and Assessment, now part of Google) spent years studying thousands of software delivery teams. They found that four key metrics consistently separate high-performing teams from low-performing ones. These aren’t vanity metrics: they’re leading indicators of your ability to deliver software reliably and quickly.

Here’s the breakdown:

| Metric | What It Measures | How to Calculate | What Elite Looks Like |
|---|---|---|---|
| Deployment Frequency | How often you ship code to production | Count of successful deployments per unit of time | On-demand (multiple times per day) |
| Lead Time for Changes | Time from code commit to running in production | Timestamp from first commit to production deploy | Less than 1 hour |
| Mean Time to Recovery (MTTR) | How fast you restore service after a failure | Time from incident detection to resolution in production | Less than 1 hour |
| Change Failure Rate | Percentage of deployments that cause incidents | (Deployments causing incidents ÷ total deployments) × 100 | 0-15% |

These four metrics capture the essence of software delivery: speed (deployment frequency, lead time) and stability (MTTR, change failure rate). The old school of thought said you had to choose between speed and stability. DORA’s research proved the opposite: elite teams are both faster AND more stable.
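To make the “How to Calculate” column concrete, here is a minimal sketch that computes all four metrics from a handful of in-memory records. The record layout (`committed_at`, `deployed_at`, `caused_incident`), the sample timestamps, and the 7-day period are invented for illustration; real data would come from your CI system and incident tracker.

```python
from datetime import datetime
from statistics import median

# Hypothetical sample data: one record per production deployment.
deployments = [
    {"committed_at": datetime(2025, 1, 6, 9, 0), "deployed_at": datetime(2025, 1, 6, 11, 0), "caused_incident": False},
    {"committed_at": datetime(2025, 1, 7, 10, 0), "deployed_at": datetime(2025, 1, 7, 14, 0), "caused_incident": True},
    {"committed_at": datetime(2025, 1, 8, 8, 0), "deployed_at": datetime(2025, 1, 8, 9, 0), "caused_incident": False},
    {"committed_at": datetime(2025, 1, 9, 13, 0), "deployed_at": datetime(2025, 1, 9, 16, 0), "caused_incident": False},
]

# Hypothetical incidents as (detected_at, resolved_at) pairs.
incidents = [(datetime(2025, 1, 7, 15, 0), datetime(2025, 1, 7, 16, 30))]

PERIOD_DAYS = 7  # length of the measurement window


def hours(delta):
    """Convert a timedelta to hours."""
    return delta.total_seconds() / 3600


# Deployment frequency: successful deployments per day over the window.
deployment_frequency = len(deployments) / PERIOD_DAYS

# Lead time: median hours from commit to production deploy.
lead_time_hours = median(hours(d["deployed_at"] - d["committed_at"]) for d in deployments)

# MTTR: mean hours from detection to resolution.
mttr_hours = sum(hours(resolved - detected) for detected, resolved in incidents) / len(incidents)

# Change failure rate: share of deployments that caused an incident.
change_failure_rate = 100 * sum(d["caused_incident"] for d in deployments) / len(deployments)

print(f"Deployment frequency: {deployment_frequency:.2f}/day")
print(f"Lead time (median): {lead_time_hours:.1f} h")
print(f"MTTR (mean): {mttr_hours:.1f} h")
print(f"Change failure rate: {change_failure_rate:.1f}%")
```

With this toy data the team deploys often enough to look healthy, yet one bad deployment out of four already pushes the change failure rate to 25%, which is why small samples should be read with caution.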

Here’s why this matters: teams that score in the elite category across all four metrics are:

- 2x more likely to exceed profitability goals

- 2x more likely to achieve market share goals

- 30% more likely to achieve productivity goals

- 50% more likely to exceed organizational goals

I’ve seen this firsthand. When I started measuring these metrics at my current company, we were in the “medium” category on most of them. We deployed once a week, took 3-4 days for changes to reach production, and our MTTR was around 2 days. Eighteen months later, we’re deploying multiple times per day, lead time is under 4 hours, and MTTR is under 2 hours. Our code hasn’t gotten buggier. If anything, quality improved: our change failure rate has dropped from 35% to 12%.

The transformation didn’t come from magic. It came from measuring these metrics consistently and using the data to drive specific improvements. Let me show you the 2024 benchmarks, then I’ll explain exactly how to measure and improve each metric.

The 2024 DORA Benchmark Data

DORA releases an annual State of DevOps report with updated benchmarks. The 2024 data (from the Accelerate State of DevOps Report) shows the performance thresholds for each category:

DORA Performance Benchmarks (2024 Data)

| Performance Category | Deployment Frequency | Lead Time for Changes | MTTR | Change Failure Rate |
|---|---|---|---|---|
| Elite | On-demand (multiple/day) | < 1 hour | < 1 hour | 0-15% |
| High | Weekly | < 1 day | < 1 day | 16-30% |
| Medium | Monthly | < 1 week | < 1 week | 31-45% |
| Low | Yearly or less | > 1 month | > 1 month | > 45% |

A few things stand out in this data:

- The gap between elite and low performers is massive. Elite teams deploy multiple times per day, while low performers deploy once a year or less. That’s not a 10% or 20% difference; it’s a difference of more than two orders of magnitude.

- Speed and stability go together. Elite teams have the fastest lead times AND the lowest change failure rates. They’re not sacrificing quality for speed. They’re achieving both.

- MTTR is the great equalizer. Even if you can’t deploy multiple times per day yet, focusing on MTTR gives you a clear path to improvement. Reducing recovery time from 2 days to 2 hours has a massive impact on your overall performance.

I’ve worked with teams at every level of this spectrum. The “low” performers weren’t incompetent developers—they were trapped in broken processes. Monthly releases became a big deal because every release required manual testing, coordination across multiple teams, and a prayer that nothing would break. The fear of failure made them deploy less frequently, which made each deployment bigger and scarier, which made them even more afraid to deploy. It was a vicious cycle.

The elite teams I’ve worked with aren’t necessarily using cutting-edge technology. They’re just obsessed with removing friction from the deployment process. They automate everything. They keep changes small. They practice recovering from failures so they’re not terrified when something goes wrong.

Performance Distribution Across Industries

DORA’s research also shows how performance varies by industry:

| Industry | % Elite Teams | % High Teams | % Medium Teams | % Low Teams |
|---|---|---|---|---|
| Software & Technology | 21% | 34% | 32% | 13% |
| Financial Services | 14% | 31% | 38% | 17% |
| Retail & E-commerce | 19% | 33% | 35% | 13% |
| Manufacturing | 11% | 28% | 42% | 19% |
| Healthcare | 9% | 24% | 44% | 23% |
| Government/Public Sector | 7% | 19% | 47% | 27% |

If you’re in healthcare or government, you might look at these numbers and think, “Well, we’re in a regulated industry, so elite performance isn’t realistic.” I hear this all the time. But here’s the thing: 7% of government teams ARE elite. They’re operating under the same regulations as everyone else, but they’ve figured out how to move fast while maintaining compliance.

I worked with a healthcare startup that achieved elite metrics despite HIPAA requirements. The key insight: compliance and speed aren’t opposites. Automating your compliance checks (automated HIPAA security scanning, automated audit logging) actually makes you faster because you don’t have to manually verify everything before each deployment.

Now, let’s get practical. How do you actually measure these metrics using your existing tools?

How to Measure Each Metric with GitLab CI

GitLab has built-in support for DORA metrics through Value Stream Analytics, but I’ve found that building custom measurements gives you more control and deeper insights. Let me walk you through each metric.

Deployment Frequency

Deployment frequency is the simplest metric to measure: count the number of successful deployments to production in a given time period.

In GitLab, a “deployment” is typically a pipeline run that deploys to your production environment. Here’s how to calculate it:

GitLab CI YAML Example:

# .gitlab-ci.yml
stages:
  - build
  - test
  - deploy

deploy_production:
  stage: deploy
  script:
    - echo "Deploying to production..."
    - ./deploy.sh production
  environment:
    name: production
    url: https://app.example.com
  only:
    - main
  tags:
    - deploy

Every time this job runs successfully, it counts as a deployment. GitLab tracks deployment events, and you can query them via the API:

Python Script: Count Deployments:

import os
from datetime import datetime, timedelta, timezone

import requests

GITLAB_TOKEN = os.getenv('GITLAB_TOKEN')
GITLAB_URL = 'https://gitlab.example.com'
PROJECT_ID = '123'


def count_deployments(days=30):
    """Count successful deployments to production in the last N days"""
    headers = {'PRIVATE-TOKEN': GITLAB_TOKEN}

    # Use a timezone-aware UTC timestamp so the API filter is unambiguous
    since_date = (datetime.now(timezone.utc) - timedelta(days=days)).isoformat()

    response = requests.get(
        f'{GITLAB_URL}/api/v4/projects/{PROJECT_ID}/deployments',
        headers=headers,
        params={
            'environment': 'production',
            'updated_after': since_date,
            'status': 'success',
            'per_page': 100,  # first page only; paginate if you exceed 100
        },
        timeout=30,
    )
    response.raise_for_status()  # fail loudly on auth or permission errors

    deployments = response.json()
    deployment_count = len(deployments)

    print(f'Deployments in last {days} days: {deployment_count}')
    print(f'Deployment frequency: {deployment_count / days:.2f} per day')

    return deployments


if __name__ == '__main__':
    count_deployments(30)

Output:

Deployments in last 30 days: 87

Deployment frequency: 2.90 per day

This puts you in the “elite” category for deployment frequency.
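One caveat: the script requests a single page of up to 100 results, so a busy project will be undercounted. GitLab’s REST API supports offset pagination through the `page` and `per_page` query parameters. The sketch below shows one way to loop over pages; `fetch_all_pages` and `fake_fetch_page` are hypothetical names, and the fake fetcher stands in for the real HTTP call so the example runs without network access.

```python
def fetch_all_pages(fetch_page, per_page=100, max_pages=50):
    """Collect items from a paginated endpoint.

    fetch_page(page, per_page) must return the list of items on that page.
    max_pages is a safety cap so a misbehaving endpoint cannot loop forever.
    """
    items = []
    for page in range(1, max_pages + 1):
        batch = fetch_page(page, per_page)
        items.extend(batch)
        if len(batch) < per_page:  # a short page means we reached the end
            break
    return items


def fake_fetch_page(page, per_page):
    """Stand-in for the real API call: serves 250 fake deployment ids."""
    all_items = list(range(250))
    start = (page - 1) * per_page
    return all_items[start:start + per_page]


total = len(fetch_all_pages(fake_fetch_page))
print(f"Fetched {total} items")
```

In the real script, `fetch_page` would wrap the `requests.get` call and add `page` and `per_page` to its `params` dictionary.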

Lead Time for Changes

Lead time measures the time from the first commit on a feature branch until that code is running in production. The script below approximates this with the time between the first commit in a merge request and the moment the merge request was merged, which matches true lead time only if merging to main triggers an immediate production deployment.

Python Script: Calculate Lead Time:

import os
import statistics
from datetime import datetime, timedelta, timezone

import requests

# Same configuration as the deployment-count script
GITLAB_TOKEN = os.getenv('GITLAB_TOKEN')
GITLAB_URL = 'https://gitlab.example.com'
PROJECT_ID = '123'


def calculate_lead_time(days=30):
    """Calculate median lead time for changes"""
    headers = {'PRIVATE-TOKEN': GITLAB_TOKEN}

    # Get merged merge requests
    since_date = (datetime.now(timezone.utc) - timedelta(days=days)).isoformat()

    response = requests.get(
        f'{GITLAB_URL}/api/v4/projects/{PROJECT_ID}/merge_requests',
        headers=headers,
        params={
            'state': 'merged',
            'updated_after': since_date,
            'per_page': 100
        },
        timeout=30,
    )
    response.raise_for_status()
    mrs = response.json()

    lead_times = []
    for mr in mrs:
        # Get the first commit timestamp (one extra request per merge request)
        commits_response = requests.get(
            f'{GITLAB_URL}/api/v4/projects/{PROJECT_ID}/merge_requests/{mr["iid"]}/commits',
            headers=headers,
            timeout=30,
        )
        commits = commits_response.json()

        if commits:
            first_commit = commits[-1]['created_at']  # Oldest commit
            merged_at = mr['merged_at']

            # Convert to datetime objects
            first_commit_dt = datetime.fromisoformat(first_commit.replace('Z', '+00:00'))
            merged_at_dt = datetime.fromisoformat(merged_at.replace('Z', '+00:00'))

            # Calculate lead time in hours
            lead_time_hours = (merged_at_dt - first_commit_dt).total_seconds() / 3600
            lead_times.append(lead_time_hours)

    if lead_times:
        median_lead_time = statistics.median(lead_times)
        print(f'Median lead time: {median_lead_time:.2f} hours')

        # Categorize performance
        if median_lead_time < 1:
            category = "Elite"
        elif median_lead_time < 24:
            category = "High"
        elif median_lead_time < 168:  # 1 week
            category = "Medium"
        else:
            category = "Low"

        print(f'Performance category: {category}')

    return lead_times


if __name__ == '__main__':
    calculate_lead_time(30)

Output:

Median lead time: 3.42 hours

Performance category: High
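If merging and deploying are separate steps in your pipeline, merge time understates lead time. One option is to pair each merged change with the first production deployment that finished at or after the merge. The sketch below assumes you already have the timestamps in hand; the function name `lead_times_to_production` and the sample data are illustrative, not part of any GitLab API.

```python
from bisect import bisect_left
from datetime import datetime
from statistics import median


def lead_times_to_production(changes, deployment_times):
    """Hours from first commit to the first deployment at or after the merge.

    changes: list of (first_commit_at, merged_at) datetime pairs.
    deployment_times: datetimes of successful production deployments.
    Changes that have not been deployed yet are skipped.
    """
    deploys = sorted(deployment_times)
    result = []
    for first_commit_at, merged_at in changes:
        idx = bisect_left(deploys, merged_at)  # first deployment >= merged_at
        if idx == len(deploys):
            continue  # merged but not deployed yet
        result.append((deploys[idx] - first_commit_at).total_seconds() / 3600)
    return result


# Hypothetical sample data
changes = [
    (datetime(2025, 1, 6, 9, 0), datetime(2025, 1, 6, 12, 0)),
    (datetime(2025, 1, 6, 13, 0), datetime(2025, 1, 6, 15, 30)),
    (datetime(2025, 1, 7, 10, 0), datetime(2025, 1, 7, 18, 0)),  # not deployed yet
]
deployment_times = [datetime(2025, 1, 6, 16, 0), datetime(2025, 1, 7, 11, 0)]

lead_times = lead_times_to_production(changes, deployment_times)
print(f"Median lead time to production: {median(lead_times):.1f} hours")
```

Here the first two changes both ship in the 16:00 deployment, so their lead times are 7 and 3 hours even though each merged earlier.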

Mean Time to Recovery (MTTR)

MTTR measures how quickly you restore service after a failure. To track this in GitLab, you need to:

- Define what constitutes an “incident” (e.g., a failed deployment, a bug labeled “incident”, or an alert from monitoring)

- Track the time from incident detection to resolution

Here’s how I’ve implemented it using GitLab issues:

Python Script: Calculate MTTR:

import os
import statistics
from datetime import datetime, timedelta, timezone

import requests

# Same configuration as the deployment-count script
GITLAB_TOKEN = os.getenv('GITLAB_TOKEN')
GITLAB_URL = 'https://gitlab.example.com'
PROJECT_ID = '123'


def calculate_mttr(days=30):
    """Calculate mean time to recovery from incidents"""
    headers = {'PRIVATE-TOKEN': GITLAB_TOKEN}

    # Get closed issues labeled "incident"
    since_date = (datetime.now(timezone.utc) - timedelta(days=days)).isoformat()

    response = requests.get(
        f'{GITLAB_URL}/api/v4/projects/{PROJECT_ID}/issues',
        headers=headers,
        params={
            'labels': 'incident',
            'created_after': since_date,
            'state': 'closed',
            'per_page': 100
        },
        timeout=30,
    )
    response.raise_for_status()
    incidents = response.json()

    recovery_times = []
    for incident in incidents:
        # Issue creation stands in for detection, issue close for resolution
        created_at = datetime.fromisoformat(incident['created_at'].replace('Z', '+00:00'))
        closed_at = datetime.fromisoformat(incident['closed_at'].replace('Z', '+00:00'))

        # Calculate recovery time in hours
        recovery_time_hours = (closed_at - created_at).total_seconds() / 3600
        recovery_times.append(recovery_time_hours)

        print(f"Incident #{incident['iid']}: {recovery_time_hours:.2f} hours to recover")

    if recovery_times:
        avg_mttr = statistics.mean(recovery_times)
        median_mttr = statistics.median(recovery_times)

        print(f'\nAverage MTTR: {avg_mttr:.2f} hours')
        print(f'Median MTTR: {median_mttr:.2f} hours')

        # Categorize performance (based on the median, which resists outliers)
        if median_mttr < 1:
            category = "Elite"
        elif median_mttr < 24:
            category = "High"
        elif median_mttr < 168:  # 1 week
            category = "Medium"
        else:
            category = "Low"

        print(f'Performance category: {category}')

    return recovery_times


if __name__ == '__main__':
    calculate_mttr(30)

Output:

Incident #234: 0.75 hours to recover

Incident #235: 2.30 hours to recover

Incident #236: 0.50 hours to recover

Average MTTR: 1.18 hours

Median MTTR: 0.75 hours

Performance category: Elite

Change Failure Rate

Change failure rate measures the percentage of deployments that cause incidents in production. There are different ways to calculate this:

- Simple method: Count deployments that were rolled back or hotfixed

- Incident-linked method: Count deployments that have associated incident issues

- Time-window method: Count deployments that caused incidents within a specific time window (e.g., 48 hours after deployment)

I prefer the time-window method because it catches incidents that manifest later:

Python Script: Calculate Change Failure Rate:

import os
from datetime import datetime, timedelta, timezone

import requests

# Same configuration as the deployment-count script
GITLAB_TOKEN = os.getenv('GITLAB_TOKEN')
GITLAB_URL = 'https://gitlab.example.com'
PROJECT_ID = '123'


def calculate_change_failure_rate(days=30):
    """Calculate change failure rate"""
    headers = {'PRIVATE-TOKEN': GITLAB_TOKEN}

    # Get deployments
    since_date = (datetime.now(timezone.utc) - timedelta(days=days)).isoformat()

    deployments_response = requests.get(
        f'{GITLAB_URL}/api/v4/projects/{PROJECT_ID}/deployments',
        headers=headers,
        params={
            'environment': 'production',
            'updated_after': since_date,
            'status': 'success',
            'per_page': 100
        },
        timeout=30,
    )
    deployments_response.raise_for_status()
    deployments = deployments_response.json()
    total_deployments = len(deployments)

    # Get incidents
    incidents_response = requests.get(
        f'{GITLAB_URL}/api/v4/projects/{PROJECT_ID}/issues',
        headers=headers,
        params={
            'labels': 'incident',
            'created_after': since_date,
            'per_page': 100
        },
        timeout=30,
    )
    incidents_response.raise_for_status()
    incidents = incidents_response.json()

    # Link incidents to deployments (within 48 hours)
    deployment_incidents = set()

    for incident in incidents:
        incident_created = datetime.fromisoformat(incident['created_at'].replace('Z', '+00:00'))

        # Flag every deployment that happened in the 48 hours before this incident
        for deployment in deployments:
            deployment_created = datetime.fromisoformat(deployment['created_at'].replace('Z', '+00:00'))
            time_diff = (incident_created - deployment_created).total_seconds() / 3600

            if 0 <= time_diff <= 48:
                deployment_incidents.add(deployment['id'])

    failed_deployments = len(deployment_incidents)

    if total_deployments > 0:
        change_failure_rate = (failed_deployments / total_deployments) * 100
        print(f'Total deployments: {total_deployments}')
        print(f'Failed deployments: {failed_deployments}')
        print(f'Change failure rate: {change_failure_rate:.2f}%')

        # Categorize performance
        if change_failure_rate <= 15:
            category = "Elite"
        elif change_failure_rate <= 30:
            category = "High"
        elif change_failure_rate <= 45:
            category = "Medium"
        else:
            category = "Low"

        print(f'Performance category: {category}')

        return change_failure_rate

    return None  # no deployments in the window, so the rate is undefined


if __name__ == '__main__':
    calculate_change_failure_rate(30)

Output:

Total deployments: 87

Failed deployments: 9

Change failure rate: 10.34%

Performance category: Elite
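A side effect of the time-window method is worth knowing about: the script flags every deployment in the 48 hours before an incident, so a team deploying several times a day can see one incident mark half a dozen deployments as failed. A possible refinement is to blame only the most recent deployment before each incident. The sketch below illustrates that variant on in-memory timestamps; the function name `failed_deployment_indexes` and the sample data are hypothetical.

```python
from bisect import bisect_right
from datetime import datetime, timedelta


def failed_deployment_indexes(deployment_times, incident_times, window_hours=48):
    """Attribute each incident to the latest deployment that preceded it.

    Returns the set of indexes (into the sorted deployment list) considered
    failed. Incidents with no deployment inside the window are ignored.
    """
    deploys = sorted(deployment_times)
    window = timedelta(hours=window_hours)
    failed = set()
    for incident_at in incident_times:
        idx = bisect_right(deploys, incident_at) - 1  # last deployment <= incident
        if idx >= 0 and incident_at - deploys[idx] <= window:
            failed.add(idx)
    return failed


# Hypothetical sample: three deployments, one incident an hour after the last
deployment_times = [
    datetime(2025, 1, 6, 9, 0),
    datetime(2025, 1, 6, 14, 0),
    datetime(2025, 1, 7, 10, 0),
]
incident_times = [datetime(2025, 1, 7, 11, 0)]

failed = failed_deployment_indexes(deployment_times, incident_times)
rate = 100 * len(failed) / len(deployment_times)
print(f"Failed deployments: {len(failed)} of {len(deployment_times)} ({rate:.1f}%)")
```

With this data the all-deployments-in-window approach would report a 100% failure rate, while the most-recent-deployment rule reports one failure out of three. Neither is objectively correct, so pick one rule and apply it consistently.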

Complete GitLab DORA Metrics Collector

Here’s a complete script that pulls all four metrics from GitLab:

#!/usr/bin/env python3
"""
GitLab DORA Metrics Collector
Collects all four DORA metrics from GitLab API
"""

import json
import os
import statistics
from datetime import datetime, timedelta, timezone

import requests

GITLAB_TOKEN = os.getenv('GITLAB_TOKEN')
GITLAB_URL = os.getenv('GITLAB_URL', 'https://gitlab.com')
PROJECT_ID = os.getenv('GITLAB_PROJECT_ID')


def parse_ts(value):
    """Parse a GitLab ISO 8601 timestamp into a timezone-aware datetime"""
    return datetime.fromisoformat(value.replace('Z', '+00:00'))


def since(days):
    """ISO 8601 UTC timestamp for N days ago"""
    return (datetime.now(timezone.utc) - timedelta(days=days)).isoformat()


def categorize_hours(hours):
    """Map a duration in hours to a DORA category (lead time and MTTR)"""
    if hours < 1:
        return "Elite"
    if hours < 24:
        return "High"
    if hours < 168:  # 1 week
        return "Medium"
    return "Low"


class GitLabDORAMetrics:
    def __init__(self, token, url, project_id):
        self.token = token
        self.url = url
        self.project_id = project_id
        self.headers = {'PRIVATE-TOKEN': token}

    def _get(self, path, params=None):
        """GET a project-scoped endpoint and return the decoded JSON"""
        response = requests.get(
            f'{self.url}/api/v4/projects/{self.project_id}/{path}',
            headers=self.headers,
            params=params,
            timeout=30,
        )
        response.raise_for_status()
        return response.json()

    def get_deployments(self, days=30):
        """Get production deployments"""
        return self._get('deployments', {
            'environment': 'production',
            'updated_after': since(days),
            'status': 'success',
            'per_page': 100
        })

    def get_merge_requests(self, days=30):
        """Get merged merge requests"""
        return self._get('merge_requests', {
            'state': 'merged',
            'updated_after': since(days),
            'per_page': 100
        })

    def get_incidents(self, days=30):
        """Get incidents (issues labeled 'incident')"""
        return self._get('issues', {
            'labels': 'incident',
            'created_after': since(days),
            'per_page': 100
        })

    def calculate_deployment_frequency(self, deployments, days=30):
        """Calculate deployment frequency per day"""
        deployment_count = len(deployments)
        frequency = deployment_count / days

        if frequency >= 1:
            category = "Elite"
        elif frequency >= 1 / 7:  # at least weekly
            category = "High"
        elif frequency >= 1 / 30:  # at least monthly
            category = "Medium"
        else:
            category = "Low"

        return {
            'deployments': deployment_count,
            'frequency_per_day': frequency,
            'category': category
        }

    def calculate_lead_time(self, merge_requests):
        """Calculate median lead time for changes (first commit to merge)"""
        lead_times = []

        for mr in merge_requests:
            commits = self._get(f'merge_requests/{mr["iid"]}/commits')
            if commits:
                first_commit_dt = parse_ts(commits[-1]['created_at'])  # oldest commit
                merged_at_dt = parse_ts(mr['merged_at'])
                lead_times.append((merged_at_dt - first_commit_dt).total_seconds() / 3600)

        if not lead_times:
            return None

        median_lead_time = statistics.median(lead_times)
        return {
            'median_hours': median_lead_time,
            'category': categorize_hours(median_lead_time)
        }

    def calculate_mttr(self, incidents):
        """Calculate time to recovery (median across closed incidents)"""
        recovery_times = []

        for incident in incidents:
            # Skip incidents that are still open
            if incident['state'] != 'closed' or not incident.get('closed_at'):
                continue
            created_at = parse_ts(incident['created_at'])
            closed_at = parse_ts(incident['closed_at'])
            recovery_times.append((closed_at - created_at).total_seconds() / 3600)

        if not recovery_times:
            return None

        median_mttr = statistics.median(recovery_times)
        return {
            'median_hours': median_mttr,
            'category': categorize_hours(median_mttr)
        }

    def calculate_change_failure_rate(self, deployments, incidents):
        """Calculate change failure rate"""
        if not deployments:
            return None

        # Link incidents to deployments (within 48 hours)
        deployment_incidents = set()
        for incident in incidents:
            incident_created = parse_ts(incident['created_at'])
            for deployment in deployments:
                deployment_created = parse_ts(deployment['created_at'])
                time_diff = (incident_created - deployment_created).total_seconds() / 3600
                if 0 <= time_diff <= 48:
                    deployment_incidents.add(deployment['id'])

        failed_deployments = len(deployment_incidents)
        total_deployments = len(deployments)
        failure_rate = (failed_deployments / total_deployments) * 100

        if failure_rate <= 15:
            category = "Elite"
        elif failure_rate <= 30:
            category = "High"
        elif failure_rate <= 45:
            category = "Medium"
        else:
            category = "Low"

        return {
            'total_deployments': total_deployments,
            'failed_deployments': failed_deployments,
            'failure_rate': failure_rate,
            'category': category
        }

    def get_all_metrics(self, days=30):
        """Get all DORA metrics"""
        deployments = self.get_deployments(days)
        merge_requests = self.get_merge_requests(days)
        incidents = self.get_incidents(days)

        return {
            'deployment_frequency': self.calculate_deployment_frequency(deployments, days),
            'lead_time': self.calculate_lead_time(merge_requests),
            'mttr': self.calculate_mttr(incidents),
            'change_failure_rate': self.calculate_change_failure_rate(deployments, incidents),
            'period_days': days
        }


def main():
    collector = GitLabDORAMetrics(GITLAB_TOKEN, GITLAB_URL, PROJECT_ID)
    metrics = collector.get_all_metrics(30)

    print("=" * 50)
    print("DORA METRICS REPORT")
    print("=" * 50)

    print(f"\nDeployment Frequency: {metrics['deployment_frequency']['frequency_per_day']:.2f}/day")
    print(f" Category: {metrics['deployment_frequency']['category']}")

    if metrics['lead_time']:
        print(f"\nLead Time: {metrics['lead_time']['median_hours']:.2f} hours")
        print(f" Category: {metrics['lead_time']['category']}")

    if metrics['mttr']:
        print(f"\nMTTR: {metrics['mttr']['median_hours']:.2f} hours")
        print(f" Category: {metrics['mttr']['category']}")

    if metrics['change_failure_rate']:
        print(f"\nChange Failure Rate: {metrics['change_failure_rate']['failure_rate']:.2f}%")
        print(f" Category: {metrics['change_failure_rate']['category']}")

    # Save to JSON
    with open('dora_metrics.json', 'w') as f:
        json.dump(metrics, f, indent=2)

    print("\n\nMetrics saved to dora_metrics.json")


if __name__ == '__main__':
    main()

Run this weekly or monthly, and you’ll have a historical record of your DORA metrics over time.
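Note that the collector writes to `dora_metrics.json` in write mode, so each run replaces the previous result. To actually build up a history, one simple approach is to append each run as a timestamped line in a JSON Lines file. This is a minimal sketch; the file name `dora_metrics_history.jsonl` and the helper names `append_snapshot` and `load_history` are my own choices, not part of the collector.

```python
import json
from datetime import datetime, timezone


def append_snapshot(metrics, path='dora_metrics_history.jsonl'):
    """Append one metrics dictionary to the history file with a UTC timestamp."""
    record = {'collected_at': datetime.now(timezone.utc).isoformat(), **metrics}
    with open(path, 'a', encoding='utf-8') as f:
        f.write(json.dumps(record) + '\n')  # one JSON object per line
    return record


def load_history(path='dora_metrics_history.jsonl'):
    """Read every snapshot back as a list of dictionaries, oldest first."""
    with open(path, encoding='utf-8') as f:
        return [json.loads(line) for line in f if line.strip()]
```

Calling `append_snapshot(metrics)` at the end of `main()` would keep one line per run, and `load_history()` returns the snapshots in the order they were written, ready for plotting or trend analysis.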

Measuring DORA Metrics on AWS

If you’re running on AWS, you have access to powerful tools for tracking DORA metrics. Let me show you how to implement the same measurements using CloudWatch, CodePipeline, and X-Ray.

CloudWatch Custom Metrics for Deployment Events

AWS CodePipeline emits state-change events to Amazon EventBridge (formerly CloudWatch Events). A Lambda function can turn those events into custom CloudWatch metrics. Here’s how:

Terraform Configuration for CloudWatch Metrics:

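# CloudWatch alarm that fires when deployment frequency drops
resource "aws_cloudwatch_metric_alarm" "deployment_frequency" {
  alarm_name          = "deployment-frequency-low"
  comparison_operator = "LessThanThreshold"
  evaluation_periods  = 1
  metric_name         = "DeploymentCount"
  namespace           = "DORA"
  period              = 86400 # 1 day
  statistic           = "Sum"
  threshold           = 1
  alarm_description   = "Alert when deployment frequency drops below 1 per day"
  alarm_actions       = [aws_sns_topic.alerts.arn]

  # Must match the dimension the Lambda publishes, or the alarm sees no data
  dimensions = {
    PipelineName = "my-app-pipeline"
  }

  # A day without deployments produces no datapoints, so count that as a breach
  treat_missing_data = "breaching"
}

# SNS topic for alerts
resource "aws_sns_topic" "alerts" {
  name = "dora-alerts"
}

# Lambda function to process CodePipeline events
# (aws_iam_role.lambda_role is assumed to be defined elsewhere and must allow
# cloudwatch:PutMetricData)
resource "aws_lambda_function" "dora_metrics" {
  filename      = "dora_metrics.zip"
  function_name = "dora-metrics-collector"
  role          = aws_iam_role.lambda_role.arn
  handler       = "dora_metrics.handler"
  runtime       = "python3.11"
  timeout       = 60
}

# CloudWatch Events rule for CodePipeline execution
resource "aws_cloudwatch_event_rule" "pipeline_execution" {
  name = "codepipeline-execution"

  event_pattern = jsonencode({
    source      = ["aws.codepipeline"]
    detail-type = ["CodePipeline Pipeline Execution State Change"]
    detail = {
      state = ["SUCCEEDED", "FAILED"]
    }
  })
}

# Target the Lambda function
resource "aws_cloudwatch_event_target" "lambda_target" {
  rule      = aws_cloudwatch_event_rule.pipeline_execution.name
  target_id = "dora-metrics-target"
  arn       = aws_lambda_function.dora_metrics.arn
}

# Allow the event rule to invoke the Lambda function
resource "aws_lambda_permission" "allow_events" {
  statement_id  = "AllowExecutionFromEvents"
  action        = "lambda:InvokeFunction"
  function_name = aws_lambda_function.dora_metrics.function_name
  principal     = "events.amazonaws.com"
  source_arn    = aws_cloudwatch_event_rule.pipeline_execution.arn
}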

Lambda Function: DORA Metrics Collector:

import json
from datetime import datetime, timezone

import boto3

cloudwatch = boto3.client('cloudwatch')


def handler(event, context):
    """Process CodePipeline events and send metrics to CloudWatch"""
    detail = event.get('detail', {})
    pipeline_name = detail.get('pipeline')
    state = detail.get('state')

    if state == 'SUCCEEDED':
        # Put custom metric for deployment frequency
        cloudwatch.put_metric_data(
            Namespace='DORA',
            MetricData=[{
                'MetricName': 'DeploymentCount',
                'Value': 1,
                'Unit': 'Count',
                'Dimensions': [{
                    'Name': 'PipelineName',
                    'Value': pipeline_name
                }],
                'Timestamp': datetime.now(timezone.utc)
            }]
        )

        # The event carries the execution ID and completion time but not the
        # start time. For lead time, store the start time when the pipeline
        # starts, or look the execution up afterwards.
        execution_id = detail.get('execution-id', '')
        finished_at = event.get('time', '')

        print(f"Recorded deployment for pipeline: {pipeline_name} "
              f"(execution {execution_id}, finished {finished_at})")

    return {
        'statusCode': 200,
        'body': json.dumps('Metric recorded successfully')
    }
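To exercise the handler logic without deploying it, it can help to separate building the metric datum from the `put_metric_data` call. The sketch below assumes the event follows the “CodePipeline Pipeline Execution State Change” shape, with `pipeline` and `state` under `detail`; the helper name `build_deployment_datum` and the sample event values are made up for illustration.

```python
from datetime import datetime, timezone


def build_deployment_datum(event):
    """Return a CloudWatch MetricData entry for a succeeded run, else None."""
    detail = event.get('detail', {})
    if detail.get('state') != 'SUCCEEDED':
        return None  # failed or in-progress runs are not deployments
    return {
        'MetricName': 'DeploymentCount',
        'Value': 1,
        'Unit': 'Count',
        'Dimensions': [{
            'Name': 'PipelineName',
            'Value': detail.get('pipeline', 'unknown'),
        }],
        'Timestamp': datetime.now(timezone.utc),
    }


# Hypothetical sample events, trimmed to the fields the function reads
succeeded_event = {
    'source': 'aws.codepipeline',
    'detail-type': 'CodePipeline Pipeline Execution State Change',
    'detail': {'pipeline': 'my-app-pipeline', 'state': 'SUCCEEDED'},
}
failed_event = {
    'source': 'aws.codepipeline',
    'detail-type': 'CodePipeline Pipeline Execution State Change',
    'detail': {'pipeline': 'my-app-pipeline', 'state': 'FAILED'},
}

datum = build_deployment_datum(succeeded_event)
print(datum['Dimensions'][0]['Value'])
print(build_deployment_datum(failed_event))
```

The handler would then only need to pass the returned dictionary to `put_metric_data` when it is not `None`, which keeps the AWS call out of your unit tests.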

CodePipeline Metadata for Lead Time

To calculate lead time in AWS, you need to track the time from code commit to pipeline completion. Here’s how:

Python Script: Calculate Lead Time from CodePipeline:

import statistics
from datetime import datetime, timedelta, timezone

import boto3

codepipeline = boto3.client('codepipeline')
codecommit = boto3.client('codecommit')

PIPELINE_NAME = 'my-app-pipeline'
REPOSITORY_NAME = 'my-repo'  # CodeCommit repository that feeds the pipeline


def get_pipeline_executions(days=30):
    """Get pipeline executions from the last N days"""
    cutoff = datetime.now(timezone.utc) - timedelta(days=days)

    response = codepipeline.list_pipeline_executions(
        pipelineName=PIPELINE_NAME,
        maxResults=100
    )

    # boto3 returns timezone-aware datetimes, so compare against an aware cutoff
    executions = [
        e for e in response['pipelineExecutionSummaries']
        if e.get('startTime') and e['startTime'] > cutoff
    ]
    return executions


def get_commit_timestamp(repository_name, commit_id):
    """Get the commit time as a timezone-aware UTC datetime"""
    try:
        response = codecommit.get_commit(
            repositoryName=repository_name,
            commitId=commit_id
        )
        # CodeCommit reports dates as "<epoch seconds> <UTC offset>",
        # e.g. "1508280564 -0800", not as ISO 8601
        epoch_seconds = int(response['commit']['committer']['date'].split()[0])
        return datetime.fromtimestamp(epoch_seconds, tz=timezone.utc)
    except Exception as e:
        print(f"Error getting commit timestamp: {e}")
        return None


def calculate_lead_time_aws(days=30):
    """Calculate lead time from CodePipeline"""
    executions = get_pipeline_executions(days)
    lead_times = []

    for execution in executions:
        if execution.get('status') != 'Succeeded':
            continue

        # Get the source revision (commit ID)
        source_revisions = execution.get('sourceRevisions', [])
        if not source_revisions:
            continue

        commit_id = source_revisions[0].get('revisionId')
        if not commit_id:
            continue

        # Source revisions do not carry the repository name, so it is configured above
        commit_time = get_commit_timestamp(REPOSITORY_NAME, commit_id)
        if not commit_time:
            continue

        pipeline_end_time = execution['lastUpdateTime']

        # Calculate lead time in hours (both datetimes are timezone-aware)
        lead_time_hours = (pipeline_end_time - commit_time).total_seconds() / 3600
        lead_times.append(lead_time_hours)

    if lead_times:
        median_lead_time = statistics.median(lead_times)

        print(f"Total executions: {len(executions)}")
        print(f"Successful executions: {len(lead_times)}")
        print(f"Median lead time: {median_lead_time:.2f} hours")

        # Categorize
        if median_lead_time < 1:
            category = "Elite"
        elif median_lead_time < 24:
            category = "High"
        elif median_lead_time < 168:
            category = "Medium"
        else:
            category = "Low"

        print(f"Category: {category}")
        return median_lead_time

    return None


if __name__ == '__main__':
    calculate_lead_time_aws(30)

X-Ray Traces for MTTR Calculation

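AWS X-Ray can help you find where errors originate, but the simplest way to time recovery is with CloudWatch alarms: the moment an alarm enters the ALARM state marks detection, and the moment it returns to OK marks resolution. Here’s how to implement it: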

CloudWatch Alarms for Incident Detection:

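import boto3

cloudwatch = boto3.client('cloudwatch')


def create_incident_alarm():
    """Create CloudWatch alarm for detecting incidents"""
    # Alarm on 5xx responses from the targets behind an Application Load Balancer.
    # This counts errors; a true percentage needs metric math against RequestCount.
    cloudwatch.put_metric_alarm(
        AlarmName='high-error-rate',
        AlarmDescription='Alert when targets return more than 5 5xx responses per 5 minutes',
        ActionsEnabled=True,
        OKActions=[],
        AlarmActions=['arn:aws:sns:us-east-1:123456789012:incidents'],
        InsufficientDataActions=[],
        MetricName='HTTPCode_Target_5XX_Count',
        Namespace='AWS/ApplicationELB',
        # Placeholder value: use the ID portion of your load balancer's ARN
        Dimensions=[{'Name': 'LoadBalancer', 'Value': 'app/my-alb/1234567890abcdef'}],
        Statistic='Sum',
        Period=300,
        EvaluationPeriods=2,
        Threshold=5,
        ComparisonOperator='GreaterThanThreshold',
        # No 5xx responses means no datapoints, which should count as healthy
        TreatMissingData='notBreaching'
    )

    print("Incident alarm created successfully")


if __name__ == '__main__':
    create_incident_alarm()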

Python Script: Calculate MTTR from CloudWatch:

import json
import statistics
from datetime import datetime, timedelta, timezone

import boto3

cloudwatch = boto3.client('cloudwatch')


def get_alarm_history(days=30):
    """Get CloudWatch alarm history for incident detection, oldest first"""
    since_date = datetime.now(timezone.utc) - timedelta(days=days)

    response = cloudwatch.describe_alarm_history(
        AlarmName='high-error-rate',
        HistoryItemType='StateUpdate',
        StartDate=since_date,
        MaxRecords=100
    )

    # Sort oldest first so each ALARM is paired with the OK that follows it
    return sorted(response['AlarmHistoryItems'], key=lambda item: item['Timestamp'])


def new_state(item):
    """Return the state the alarm moved into (HistoryData is a JSON string)"""
    data = json.loads(item.get('HistoryData') or '{}')
    return data.get('newState', {}).get('stateValue')


def calculate_mttr_aws(days=30):
    """Calculate MTTR from alarm state transitions"""
    history_items = get_alarm_history(days)

    incidents = []
    current_incident = None

    for item in history_items:
        timestamp = item['Timestamp']
        # Check only the new state: HistoryData also names the old state, so a
        # substring search for 'ALARM' would match both directions
        state = new_state(item)

        # Alarm state changed to ALARM (incident detected)
        if state == 'ALARM' and current_incident is None:
            current_incident = {'detected_at': timestamp}

        # Alarm state changed to OK (incident resolved)
        elif state == 'OK' and current_incident:
            current_incident['resolved_at'] = timestamp

            # Calculate recovery time in hours
            recovery_time_hours = (
                current_incident['resolved_at'] - current_incident['detected_at']
            ).total_seconds() / 3600

            incidents.append(recovery_time_hours)
            current_incident = None

    if incidents:
        median_mttr = statistics.median(incidents)

        print(f"Total incidents: {len(incidents)}")
        print(f"Median MTTR: {median_mttr:.2f} hours")

        # Categorize
        if median_mttr < 1:
            category = "Elite"
        elif median_mttr < 24:
            category = "High"
        elif median_mttr < 168:
            category = "Medium"
        else:
            category = "Low"

        print(f"Category: {category}")
        return median_mttr

    return None


if __name__ == '__main__':
    calculate_mttr_aws(30)

Boto3 Code for Collecting All AWS DORA Metrics

Here’s a complete script that pulls all four DORA metrics from AWS services:

#!/usr/bin/env python3

"""

AWS DORA Metrics Collector

Collects all four DORA metrics from AWS services

"""

import boto3

import json

import statistics

from datetime import datetime, timedelta

class AWSDORAMetrics:

def __init__(self, region='us-east-1'):

self.cloudwatch = boto3.client('cloudwatch', region_name=region)

self.codepipeline = boto3.client('codepipeline', region_name=region)

self.codecommit = boto3.client('codecommit', region_name=region)

self.xray = boto3.client('xray', region_name=region)

def get_deployment_frequency(self, days=30):

"""Get deployment frequency from CloudWatch metrics"""

end_time = datetime.now()

start_time = end_time - timedelta(days=days)

response = self.cloudwatch.get_metric_statistics(

Namespace='DORA',

MetricName='DeploymentCount',

Dimensions=[{

'Name': 'PipelineName',

'Value': 'my-app-pipeline'

}],

StartTime=start_time,

EndTime=end_time,

Period=86400, # 1 day

Statistics=['Sum']

)

datapoints = response['Datapoints']

total_deployments = sum(dp['Sum'] for dp in datapoints)

frequency_per_day = total_deployments / days

return {

'total_deployments': total_deployments,

'frequency_per_day': frequency_per_day,

'datapoints': datapoints

}

def get_lead_time(self, pipeline_name, days=30):

"""Get lead time from CodePipeline"""

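# Only executions that started inside the reporting window count

since_date = datetime.now().astimezone() - timedelta(days=days)

response = self.codepipeline.list_pipeline_executions(

pipelineName=pipeline_name,

maxResults=100

)

executions = response['pipelineExecutionSummaries']

lead_times = []

for execution in executions:

if execution.get('status') != 'Succeeded':

continue

# startTime is timezone-aware, so it is compared against an aware cutoff

if execution['startTime'] < since_date:

continue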

source_revisions = execution.get('sourceRevisions', [])

if not source_revisions:

continue

commit_id = source_revisions[0].get('revisionId')

if not commit_id:

continue

# Get commit timestamp

try:

commit_response = self.codecommit.get_commit(

repositoryName='my-repo',

commitId=commit_id

)

# CodeCommit returns dates as "<epoch seconds> <UTC offset>", e.g. "1484167798 -0800"

commit_time_str = commit_response['commit']['committer']['date']

commit_time = datetime.fromtimestamp(int(commit_time_str.split()[0])).astimezone()

# lastUpdateTime is timezone-aware, so subtract two aware datetimes

pipeline_end_time = execution['lastUpdateTime']

lead_time_hours = (pipeline_end_time - commit_time).total_seconds() / 3600

lead_times.append(lead_time_hours)

except Exception as e:

print(f"Error getting commit details: {e}")

continue

if lead_times:

return statistics.median(lead_times)

return None

def get_mttr(self, alarm_name, days=30):

"""Get MTTR from CloudWatch alarm history"""

since_date = (datetime.now() - timedelta(days=days))

response = self.cloudwatch.describe_alarm_history(

AlarmName=alarm_name,

HistoryItemType='StateUpdate',

StartDate=since_date,

MaxRecords=100,

ScanBy='TimestampAscending' # oldest first, so each ALARM precedes its OK

)

history_items = response['AlarmHistoryItems']

incidents = []

current_incident = None

for item in history_items:

timestamp = item['Timestamp']

# HistoryData is a JSON string that names both the old and the new state,

# so read newState instead of searching the raw text

new_state = json.loads(item.get('HistoryData') or '{}').get('newState', {}).get('stateValue')

# Check for state transitions

if new_state == 'ALARM' and current_incident is None:

current_incident = {'detected_at': timestamp}

elif new_state == 'OK' and current_incident:

current_incident['resolved_at'] = timestamp

recovery_time_hours = (

current_incident['resolved_at'] - current_incident['detected_at']

).total_seconds() / 3600

incidents.append(recovery_time_hours)

current_incident = None

if incidents:

return statistics.median(incidents)

return None

def get_change_failure_rate(self, days=30):

"""Get change failure rate from CloudWatch metrics"""

end_time = datetime.now()

start_time = end_time - timedelta(days=days)

# Get total deployments

deployments_response = self.cloudwatch.get_metric_statistics(

Namespace='DORA',

MetricName='DeploymentCount',

Dimensions=[{

'Name': 'PipelineName',

'Value': 'my-app-pipeline'

}],

StartTime=start_time,

EndTime=end_time,

Period=86400 * days, # Entire period

Statistics=['Sum']

)

# Get failed deployments

failures_response = self.cloudwatch.get_metric_statistics(

Namespace='DORA',

MetricName='DeploymentFailure',

Dimensions=[{

'Name': 'PipelineName',

'Value': 'my-app-pipeline'

}],

StartTime=start_time,

EndTime=end_time,

Period=86400 * days,

Statistics=['Sum']

)

# CloudWatch may split the window into more than one datapoint, so sum them all

total_deployments = sum(dp['Sum'] for dp in deployments_response['Datapoints'])

failed_deployments = sum(dp['Sum'] for dp in failures_response['Datapoints'])

if total_deployments > 0:

failure_rate = (failed_deployments / total_deployments) * 100

return {

'total_deployments': total_deployments,

'failed_deployments': failed_deployments,

'failure_rate': failure_rate

}

return None

def get_all_metrics(self, days=30):

"""Get all DORA metrics"""

metrics = {

'deployment_frequency': self.get_deployment_frequency(days),

'lead_time': self.get_lead_time('my-app-pipeline', days),

'mttr': self.get_mttr('high-error-rate', days),

'change_failure_rate': self.get_change_failure_rate(days),

'period_days': days,

'collected_at': datetime.now().isoformat()

}

return metrics

def main():

collector = AWSDORAMetrics('us-east-1')

metrics = collector.get_all_metrics(30)

print("=" * 50)

print("AWS DORA METRICS REPORT")

print("=" * 50)

df = metrics['deployment_frequency']

print(f"\nDeployment Frequency: {df['frequency_per_day']:.2f}/day")

print(f" Total deployments: {df['total_deployments']}")

if metrics['lead_time']:

print(f"\nLead Time: {metrics['lead_time']:.2f} hours")

if metrics['mttr']:

print(f"\nMTTR: {metrics['mttr']:.2f} hours")

if metrics['change_failure_rate']:

cfr = metrics['change_failure_rate']

print(f"\nChange Failure Rate: {cfr['failure_rate']:.2f}%")

print(f" Failed deployments: {cfr['failed_deployments']}/{cfr['total_deployments']}")

# Save to JSON

with open('aws_dora_metrics.json', 'w') as f:

json.dump(metrics, f, indent=2, default=str)

print("\n\nMetrics saved to aws_dora_metrics.json")

if __name__ == '__main__':

main()

Building a DORA Dashboard with Grafana

Once you’re collecting metrics, you need a way to visualize them. Grafana is perfect for this. Here’s how to build a comprehensive DORA dashboard.

Prometheus Queries for DORA Metrics

First, assume you’re pushing your metrics to Prometheus. Here are the PromQL queries for each metric:

Deployment Frequency:

# Deployments per day (rate() would return a per-second value, so use increase())

sum(increase(dora_deployment_count[1d]))

# Deployments per week

sum(increase(dora_deployment_count[7d]))

Lead Time:

# Median lead time (in hours)

histogram_quantile(0.5, rate(dora_lead_time_bucket[24h]))

# P95 lead time

histogram_quantile(0.95, rate(dora_lead_time_bucket[24h]))

MTTR:

# Median MTTR (in hours)

histogram_quantile(0.5, rate(dora_mttr_bucket[24h]))

# Average MTTR

avg(dora_mttr_hours)

Change Failure Rate:

# Failure rate as percentage

(

sum(rate(dora_deployment_failure[7d])) /

sum(rate(dora_deployment_count[7d]))

) * 100
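If `histogram_quantile` feels like a black box, this rough Python sketch shows the idea behind it: find the bucket that contains the requested rank, then interpolate linearly inside it. It is a simplified model for intuition, not Prometheus's actual implementation, and the bucket values are invented.

```python
import math


def histogram_quantile(q, buckets):
    """Estimate a quantile from cumulative histogram buckets.

    `buckets` is a list of (upper_bound, cumulative_count) sorted by bound
    and ending with the +Inf bucket, like the `le` series of a histogram.
    """
    total = buckets[-1][1]
    if total == 0:
        return math.nan
    rank = q * total
    prev_bound, prev_count = 0.0, 0.0
    for bound, count in buckets:
        if count >= rank:
            if math.isinf(bound):
                # Rank falls in the open-ended bucket: report the highest
                # finite bound instead of extrapolating.
                return prev_bound
            if count == prev_count:
                return bound
            # Linear interpolation inside the bucket that holds the rank.
            fraction = (rank - prev_count) / (count - prev_count)
            return prev_bound + (bound - prev_bound) * fraction
        prev_bound, prev_count = bound, count
    return prev_bound


# Lead times in hours: 2 changes <= 1h, 6 <= 4h, 10 <= 24h.
lead_time_buckets = [(1, 2), (4, 6), (24, 10), (math.inf, 10)]
print(histogram_quantile(0.5, lead_time_buckets))
print(histogram_quantile(0.95, lead_time_buckets))
```

The takeaway is that the result is only as precise as your bucket boundaries, so pick buckets that line up with the DORA thresholds you care about (1 hour, 1 day, 1 week).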

Grafana Dashboard JSON

Here’s a complete Grafana dashboard configuration for DORA metrics:

{

"annotations": {

"list": [

{

"builtIn": 1,

"datasource": "-- Grafana --",

"enable": true,

"hide": true,

"iconColor": "rgba(0, 211, 255, 1)",

"name": "Annotations & Alerts",

"type": "dashboard"

}

]

},

"editable": true,

"gnetId": null,

"graphTooltip": 0,

"id": null,

"links": [],

"panels": [

{

"datasource": "Prometheus",

"fieldConfig": {

"defaults": {

"color": {

"mode": "thresholds"

},

"mappings": [

{

"options": {

"0": {

"color": "red",

"index": 0,

"text": "Low"

},

"1": {

"color": "yellow",

"index": 1,

"text": "Medium"

},

"2": {

"color": "green",

"index": 2,

"text": "High"

},

"3": {

"color": "purple",

"index": 3,

"text": "Elite"

}

},

"type": "value"

}

],

"thresholds": {

"mode": "absolute",

"steps": [

{

"color": "red",

"value": null

},

{

"color": "yellow",

"value": 0

},

{

"color": "green",

"value": 1

},

{

"color": "purple",

"value": 2

}

]

},

"unit": "short"

}

},

"gridPos": {

"h": 4,

"w": 6,

"x": 0,

"y": 0

},

"id": 1,

"options": {

"colorMode": "background",

"graphMode": "none",

"justifyMode": "auto",

"orientation": "auto",

"reduceOptions": {

"values": false,

"calcs": [

"lastNotNull"

],

"fields": ""

},

"textMode": "auto"

},

"pluginVersion": "8.0.0",

"targets": [

{

"expr": "sum(increase(dora_deployment_count[1d]))",

"refId": "A"

}

],

"title": "Deployment Frequency (per day)",

"type": "stat"

},

{

"datasource": "Prometheus",

"fieldConfig": {

"defaults": {

"color": {

"mode": "thresholds"

},

"mappings": [],

"thresholds": {

"mode": "absolute",

"steps": [

{

"color": "green",

"value": null

},

{

"color": "yellow",

"value": 1

},

{

"color": "orange",

"value": 24

},

{

"color": "red",

"value": 168

}

]

},

"unit": "h"

}

},

"gridPos": {

"h": 4,

"w": 6,

"x": 6,

"y": 0

},

"id": 2,

"options": {

"colorMode": "value",

"graphMode": "area",

"justifyMode": "auto",

"orientation": "auto",

"reduceOptions": {

"values": false,

"calcs": [

"lastNotNull"

],

"fields": ""

},

"textMode": "auto"

},

"pluginVersion": "8.0.0",

"targets": [

{

"expr": "histogram_quantile(0.5, rate(dora_lead_time_bucket[24h]))",

"refId": "A"

}

],

"title": "Lead Time (median)",

"type": "stat"

},

{

"datasource": "Prometheus",

"fieldConfig": {

"defaults": {

"color": {

"mode": "thresholds"

},

"mappings": [],

"thresholds": {

"mode": "absolute",

"steps": [

{

"color": "green",

"value": null

},

{

"color": "yellow",

"value": 1

},

{

"color": "orange",

"value": 24

},

{

"color": "red",

"value": 168

}

]

},

"unit": "h"

}

},

"gridPos": {

"h": 4,

"w": 6,

"x": 12,

"y": 0

},

"id": 3,

"options": {

"colorMode": "value",

"graphMode": "area",

"justifyMode": "auto",

"orientation": "auto",

"reduceOptions": {

"values": false,

"calcs": [

"lastNotNull"

],

"fields": ""

},

"textMode": "auto"

},

"pluginVersion": "8.0.0",

"targets": [

{

"expr": "histogram_quantile(0.5, rate(dora_mttr_bucket[24h]))",

"refId": "A"

}

],

"title": "MTTR (median)",

"type": "stat"

},

{

"datasource": "Prometheus",

"fieldConfig": {

"defaults": {

"color": {

"mode": "thresholds"

},

"mappings": [],

"thresholds": {

"mode": "absolute",

"steps": [

{

"color": "green",

"value": null

},

{

"color": "yellow",

"value": 15

},

{

"color": "orange",

"value": 30

},

{

"color": "red",

"value": 45

}

]

},

"unit": "percent"

}

},

"gridPos": {

"h": 4,

"w": 6,

"x": 18,

"y": 0

},

"id": 4,

"options": {

"colorMode": "value",

"graphMode": "area",

"justifyMode": "auto",

"orientation": "auto",

"reduceOptions": {

"values": false,

"calcs": [

"lastNotNull"

],

"fields": ""

},

"textMode": "auto"

},

"pluginVersion": "8.0.0",

"targets": [

{

"expr": "(sum(rate(dora_deployment_failure[7d])) / sum(rate(dora_deployment_count[7d]))) * 100",

"refId": "A"

}

],

"title": "Change Failure Rate",

"type": "stat"

},

{

"datasource": "Prometheus",

"fieldConfig": {

"defaults": {

"color": {

"mode": "palette-classic"

},

"custom": {

"axisLabel": "",

"axisPlacement": "auto",

"barAlignment": 0,

"drawStyle": "line",

"fillOpacity": 10,

"gradientMode": "none",

"hideFrom": {

"tooltip": false,

"viz": false,

"legend": false

},

"lineInterpolation": "linear",

"lineWidth": 2,

"pointSize": 5,

"scaleDistribution": {

"type": "linear"

},

"showPoints": "never",

"spanNulls": true

},

"mappings": [],

"thresholds": {

"mode": "absolute",

"steps": [

{

"color": "green",

"value": null

}

]

},

"unit": "short"

}

},

"gridPos": {

"h": 8,

"w": 12,

"x": 0,

"y": 4

},

"id": 5,

"options": {

"legend": {

"calcs": [],

"displayMode": "list",

"placement": "bottom"

},

"tooltip": {

"mode": "single"

}

},

"pluginVersion": "8.0.0",

"targets": [

{

"expr": "sum(increase(dora_deployment_count[1d]))",

"legendFormat": "Deployments per day",

"refId": "A"

}

],

"title": "Deployment Frequency (30 days)",

"type": "timeseries"

},

{

"datasource": "Prometheus",

"fieldConfig": {

"defaults": {

"color": {

"mode": "palette-classic"

},

"custom": {

"axisLabel": "",

"axisPlacement": "auto",

"barAlignment": 0,

"drawStyle": "line",

"fillOpacity": 10,

"gradientMode": "none",

"hideFrom": {

"tooltip": false,

"viz": false,

"legend": false

},

"lineInterpolation": "linear",

"lineWidth": 2,

"pointSize": 5,

"scaleDistribution": {

"type": "linear"

},

"showPoints": "never",

"spanNulls": true

},

"mappings": [],

"thresholds": {

"mode": "absolute",

"steps": [

{

"color": "green",

"value": null

}

]

},

"unit": "h"

}

},

"gridPos": {

"h": 8,

"w": 12,

"x": 12,

"y": 4

},

"id": 6,

"options": {

"legend": {

"calcs": [],

"displayMode": "list",

"placement": "bottom"

},

"tooltip": {

"mode": "single"

}

},

"pluginVersion": "8.0.0",

"targets": [

{

"expr": "histogram_quantile(0.5, rate(dora_lead_time_bucket[24h]))",

"legendFormat": "Lead time (median)",

"refId": "A"

},

{

"expr": "histogram_quantile(0.95, rate(dora_lead_time_bucket[24h]))",

"legendFormat": "Lead time (P95)",

"refId": "B"

}

],

"title": "Lead Time Distribution",

"type": "timeseries"

}

],

"refresh": "1h",

"schemaVersion": 27,

"style": "dark",

"tags": ["dora", "devops", "metrics"],

"templating": {

"list": []

},

"time": {

"from": "now-30d",

"to": "now"

},

"timepicker": {},

"timezone": "",

"title": "DORA Metrics Dashboard",

"uid": "dora-metrics",

"version": 0

}

Dashboard Layout Description

The dashboard is organized as follows:

Top Row (4 panels):

- Deployment Frequency: Shows current deployments per day with color-coded status (purple=elite, green=high, yellow=medium, red=low)

- Lead Time: Displays median lead time in hours with color thresholds

- MTTR: Shows median recovery time in hours

- Change Failure Rate: Displays failure percentage with status indicators

Bottom Row (2 panels):

- Deployment Frequency (30 days): Time series graph showing deployment trends

- Lead Time Distribution: Time series comparing median and P95 lead times

This dashboard gives you an at-a-glance view of your DORA performance with historical context for spotting trends.
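One detail worth calling out: the value mappings on the first panel expect a category index from 0 to 3, not a raw deployments-per-day number. A small sketch of that conversion is below. The cut-offs (daily, weekly, monthly) are my assumption about where to draw the lines, so adjust them to the benchmark table you use.

```python
# Category indices match the panel's value mappings: 0=Low ... 3=Elite.
CATEGORY_NAMES = ["Low", "Medium", "High", "Elite"]


def deployment_category(deploys_per_day):
    """Map a deployments-per-day figure to a 0-3 category index."""
    if deploys_per_day >= 1:        # at least daily (assumed Elite cut-off)
        return 3
    if deploys_per_day >= 1 / 7:    # at least weekly
        return 2
    if deploys_per_day >= 1 / 30:   # at least monthly
        return 1
    return 0


for per_day in (2.0, 0.2, 0.05, 0.01):
    print(per_day, CATEGORY_NAMES[deployment_category(per_day)])
```

You could publish this index as its own gauge and point the stat panel at it, while keeping the raw frequency for the time series panel.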

DORA Metrics Tools Comparison

You don’t have to build all of this from scratch. There are numerous tools that can help you track and improve your DORA metrics. Here’s a comparison of the most popular options:


| Tool | Metrics Covered | GitLab Integration | AWS Integration | Cost | Pros | Cons |

|---|---|---|---|---|---|---|

| GitLab Value Stream Analytics | All 4 metrics | Native (built-in) | None | Included in GitLab (DORA reports may require a paid tier) | Native GitLab integration, minimal setup | Limited to GitLab, no AWS support, basic visualizations |

| Datadog CI Visibility | All 4 metrics | Via API integration | Via AWS integration | $$ | Powerful visualizations, integrates with entire Datadog ecosystem | Expensive, steep learning curve |

| Jira + Jenkins | All 4 metrics | Via plugins | Via plugins | $ (existing tools likely already paid for) | Uses existing tools, flexible | Complex setup, multiple integrations required |

| Grafana + Prometheus (Custom) | All 4 metrics | Via custom scripts | Via custom scripts | Free | Full control, powerful dashboards, cost-effective | Requires development effort, ongoing maintenance |

| LinearB | All 4 metrics | Native | Limited | $$$ | Focuses on engineering efficiency, actionable insights | Expensive, overkill for small teams |

| Faros AI | All 4 metrics | Native | Native | $$ | Excellent integrations, handles complexity well | Newer tool, smaller community |

| Harness | All 4 metrics | Native | Native | $$ | Built-in DORA dashboards, strong CD features | Requires using Harness for deployments |

| New Relic | All 4 metrics | Via API | Native | $$ | Strong APM integration, good visualizations | Expensive, can be complex to configure |

My Recommendation

For most teams, I recommend starting with GitLab’s built-in Value Stream Analytics if you’re already using GitLab. It needs very little setup and gives you immediate visibility into your DORA metrics. Check which GitLab tier you are on first, because the DORA reports may not be available on every plan.

However, if you’re serious about using DORA metrics to drive improvement (which you should be), I recommend building a custom Grafana + Prometheus solution. Here’s why:

- Cost: Free and open-source

- Flexibility: You can customize metrics, visualizations, and alerts to match your specific needs

- Integration: Works with GitLab, AWS, GitHub, Jenkins, or whatever tools you use

- Control: You own your data and can extend the solution as needed

The scripts I provided earlier in this post are a solid foundation. From there, you can:

- Add Slack notifications when metrics degrade

- Build team-specific dashboards

- Create historical reports for management

- Integrate with incident management tools
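As a starting point for the Slack idea, here is a minimal sketch that decides whether a metric has degraded and builds the message payload. It only constructs the JSON body; sending it to a webhook is left out, and the 20% tolerance and metric names are placeholders I picked for illustration.

```python
import json


def degradation_alert(metric, baseline, current, lower_is_better=True, tolerance=0.2):
    """Return a Slack-style payload if `current` is worse than `baseline`
    by more than `tolerance` (a fraction), otherwise None."""
    if baseline == 0:
        return None  # no meaningful baseline to compare against
    change = (current - baseline) / baseline
    worsened = change if lower_is_better else -change
    if worsened <= tolerance:
        return None
    text = (
        f":warning: {metric} degraded by {worsened:.0%} "
        f"(baseline {baseline}, now {current})"
    )
    return {"text": text}


payload = degradation_alert("MTTR (hours)", baseline=2.0, current=3.0)
print(json.dumps(payload))
```

The `lower_is_better` flag matters because deployment frequency moves in the opposite direction from the other three metrics.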

Common Pitfalls When Implementing DORA Metrics

I’ve helped dozens of teams implement DORA metrics, and I’ve seen the same mistakes over and over. Here are the most common pitfalls and how to avoid them.

Pitfall 1: Gaming the Metrics

This is the #1 problem. Teams realize they’re being measured on deployment frequency, so they start deploying tiny, meaningless changes just to boost their numbers.

What it looks like:

- Deploying config changes with no actual code changes

- Splitting one feature into 10 tiny PRs to increase deployment count

- Deploying to staging and calling it “production”

Why it’s a problem: You’re optimizing the metric, not the outcome. Your deployment frequency goes up, but you’re not actually delivering value faster.

How to avoid it:

- Focus on lead time as your primary metric, not deployment frequency

- Count meaningful deployments (e.g., require code changes, exclude config-only changes)

- Measure business value delivered alongside DORA metrics (feature usage, revenue impact, customer satisfaction)
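Counting only meaningful deployments can be as simple as looking at which files changed. The sketch below assumes you can get a list of changed paths per deployment; the patterns and the sample deployments are hypothetical, so tune them to your repository layout.

```python
from fnmatch import fnmatch

# Hypothetical patterns for files that do not count as code changes.
NON_CODE_PATTERNS = ["*.md", "docs/*", "config/*", "*.yml", "*.yaml"]


def is_meaningful(changed_files):
    """True if at least one changed file is not docs or config."""
    return any(
        not any(fnmatch(path, pattern) for pattern in NON_CODE_PATTERNS)
        for path in changed_files
    )


deployments = [
    {"id": 1, "files": ["src/app.py", "README.md"]},
    {"id": 2, "files": ["config/prod.yml"]},
    {"id": 3, "files": ["docs/setup.md"]},
]
meaningful = [d["id"] for d in deployments if is_meaningful(d["files"])]
print(meaningful)
```

Be careful not to be too strict here: some config changes are real production changes, so treat this filter as a reporting aid, not a rule.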

Pitfall 2: Using Metrics to Punish Teams

Nothing kills a metrics initiative faster than using it for performance reviews or bonuses.

What it looks like:

- “Your bonus depends on improving MTTR by 20%”

- “Team B has better lead time than Team A”

- Linking DORA metrics to individual performance evaluations

Why it’s a problem: It creates fear. Teams stop experimenting, they avoid taking risks, and they start gaming the metrics (see Pitfall #1). DORA metrics should be for team improvement, not individual evaluation.

How to avoid it:

- Make metrics team-level, not individual

- Focus on improvement over time, not comparison between teams

- Use metrics for learning, not evaluation

- Never tie DORA metrics to compensation

Pitfall 3: Measuring Too Early

You can’t improve what you haven’t measured. But setting targets before you have a baseline is also a mistake.

What it looks like:

- Implementing DORA tracking week 1 of a DevOps transformation

- Declaring “we need elite metrics in 3 months” with no context of current performance

- Comparing your metrics to industry benchmarks without understanding your context

Why it’s a problem: You set unrealistic expectations, create pressure, and miss the opportunity to establish meaningful baselines.

How to avoid it:

- Measure for 3-6 months before setting improvement targets

- Establish your baseline (average performance over that period)

- Set incremental goals (improve by 10-20%, not “jump to elite”)

- Compare yourself to your past performance, not just industry benchmarks
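To make the baseline-then-target idea concrete, here is a small sketch. It takes the mean of your baseline window and applies a modest improvement goal; the monthly numbers are invented and the 15% default is just one value inside the 10-20% range above.

```python
import statistics


def baseline_and_target(samples, improvement=0.15, lower_is_better=True):
    """Return (baseline, target) for one metric.

    `samples` are periodic measurements from the baseline window;
    `improvement` is the fractional goal (0.15 = 15%).
    """
    baseline = statistics.mean(samples)
    factor = 1 - improvement if lower_is_better else 1 + improvement
    return baseline, baseline * factor


# Six monthly median lead times, in hours (made-up numbers).
monthly_lead_time = [52, 48, 50, 47, 53, 50]
baseline, target = baseline_and_target(monthly_lead_time)
print(f"baseline={baseline:.1f}h target={target:.1f}h")
```

For deployment frequency, pass `lower_is_better=False` so the target moves up instead of down.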

Pitfall 4: Confusing MTTR with MTBF

MTTR (Mean Time to Recovery) is about how quickly you restore service when things fail. MTBF (Mean Time Between Failures) is about how long things run before failing. They’re related, but not the same.

What it looks like:

- Focusing on preventing failures instead of recovering quickly

- Celebrating “we haven’t had an outage in 6 months” while MTTR is 3 days

- Investing heavily in prevention while ignoring recovery processes

Why it’s a problem: You can’t prevent all failures. Elite teams accept that failures will happen and focus on recovering quickly when they do.

How to avoid it:

- Embrace failure: Assume things will break

- Invest in recovery: Automated rollback, clear runbooks, well-practiced incident response

- Measure MTTR: Track it, improve it, celebrate reductions

- Practice chaos engineering: Test your recovery processes regularly

Pitfall 5: Ignoring Change Failure Rate

Deployment frequency, lead time, and MTTR get all the attention. Change failure rate often gets ignored.

What it looks like:

- Deploying faster and faster while failure rate climbs

- Celebrating reduced lead time while incidents increase

- Focusing on speed without considering stability

Why it’s a problem: Speed without stability is a trap. You’re delivering broken code faster, which is actually worse than delivering working code slower.

How to avoid it:

- Track all four metrics, not just the speed-related ones

- Set minimum thresholds: Don’t optimize speed at the expense of stability

- Celebrate low failure rates as much as fast deployments

- Invest in testing: Automated testing, canary deployments, progressive delivery

From Metrics to Improvement

Measuring DORA metrics is useless if you don’t use the data to drive improvement. Here’s a practical guide to improving each metric.

Improvement Actions by Metric

| Metric | Improvement Actions | Expected Impact |

|---|---|---|

| Deployment Frequency | • Smaller PRs (< 200 lines) • Feature flags for incomplete features • Trunk-based development • Automated testing at every stage • Self-service deployments | Reduces batch size, increases confidence, enables frequent releases |

| Lead Time | • Reduce WIP (Work In Progress) • Auto-merge trivial changes • Reduce review bottlenecks • Parallelize testing • Reduce approval requirements | Streamlines flow, eliminates delays, accelerates delivery |

| MTTR | • Automated rollback • Clear runbooks • Better alerting (not noisy) • Incident simulation drills • Blameless postmortems | Accelerates recovery, reduces chaos, builds muscle memory |

| Change Failure Rate | • Shift-left testing (test early) • Canary deployments • Progressive delivery • Automated security scanning • Production-like staging environments | Catches bugs earlier, reduces blast radius, prevents regressions |

Let me dive deeper into each metric with specific, actionable strategies.

Improving Deployment Frequency

Strategy 1: Smaller PRs

Large PRs take forever to review, are scary to deploy, and increase the risk of bugs. Keep your PRs small.

# BAD: 500-line PR with multiple features

def process_user(user):

# ... 100 lines of validation ...

# ... 50 lines of transformation ...

# ... 200 lines of business logic ...

# ... 150 lines of notification logic ...

# GOOD: Multiple small PRs

# PR 1: Add validation (50 lines)

def validate_user(user):

if not user.email:

raise ValueError("Email required")

# ... 48 more lines ...

# PR 2: Add transformation (30 lines)

def transform_user(user):

user.email = user.email.lower()

# ... 28 more lines ...

# PR 3: Add business logic (100 lines)

def calculate_user_score(user):

# ... 100 lines ...

# PR 4: Add notifications (40 lines)

def send_welcome_email(user):

# ... 40 lines ...

Aim for PRs under 200 lines. I’ve found that PRs under 100 lines get reviewed in under an hour, while PRs over 500 lines can take days.
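If you want to enforce the size guideline in CI, one option is to parse the output of `git diff --numstat`. This sketch only does the parsing, on a hard-coded sample string; how you obtain the diff and the exact 200-line limit are up to you.

```python
def pr_size(numstat_output):
    """Sum added and deleted lines from `git diff --numstat` output."""
    total = 0
    for line in numstat_output.strip().splitlines():
        added, deleted, _path = line.split("\t", 2)
        if added == "-":  # binary files report "-" for both counts
            continue
        total += int(added) + int(deleted)
    return total


sample = "40\t5\tsrc/validate.py\n12\t0\ttests/test_validate.py\n-\t-\tlogo.png"
size = pr_size(sample)
print(size, "OK" if size <= 200 else "consider splitting")
```

I would start by printing a warning instead of failing the pipeline, since some large PRs (generated code, renames) are legitimate.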

Strategy 2: Feature Flags

Feature flags let you deploy code without releasing features. This decouples deployment from release, which is a game-changer.

# Using a feature flag service (like LaunchDarkly or Flagsmith)

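import ldclient

from ldclient import Context

from ldclient.config import Config

# The SDK must be configured once before ldclient.get() is called

ldclient.set_config(Config("YOUR_SDK_KEY")) # placeholder key

ld_client = ldclient.get()

def show_new_dashboard(user):

# Check if feature is enabled for this user

flag_key = "new-dashboard-ui"

# variation() takes an evaluation context, not a bare key string

context = Context.create(user.key)

show_feature = ld_client.variation(flag_key, context, False)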

if show_feature:

return render_new_dashboard()

else:

return render_old_dashboard()

With feature flags, you can deploy to production multiple times per day without exposing incomplete features to users.

Strategy 3: Trunk-Based Development

Branching patterns like GitFlow kill deployment frequency. Trunk-based development (everyone commits to main) is much faster.

# GitLab CI for trunk-based development

# .gitlab-ci.yml

test:

stage: test

script:

- pytest

only:

- main

deploy_staging:

stage: deploy

script:

- ./deploy.sh staging

environment:

name: staging

only:

- main

deploy_production:

stage: deploy

script:

- ./deploy.sh production

environment:

name: production

when: manual # Require manual approval

only:

- tags # Only deploy tagged releases

Everyone commits to main. Tests run on every commit. You can auto-deploy to staging. Production deploys happen via tags, which you create when you’re ready.

Improving Lead Time

Strategy 1: Reduce Work In Progress (WIP)

Limit how many tasks a team can work on simultaneously. This sounds counterintuitive, but it dramatically improves flow.

# Kanban board with WIP limits

# .gitlab/issue boards

In Progress:

- WIP limit: 3 (max 3 tasks per person)

Code Review:

- WIP limit: 5 (max 5 PRs awaiting review)

Testing:

- WIP limit: 2 (max 2 tasks in QA)

Deployment:

- WIP limit: 1 (max 1 task in deployment)

When your “Code Review” column hits the WIP limit, nobody can start new work until some PRs get reviewed. This forces the team to focus on finishing work, not starting it.
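The rule itself is trivial to express in code, which is part of why it works. Here is a toy sketch with the board as a plain dictionary; it treats each limit as a per-column cap, which is a simplification of the board above.

```python
# Limits copied from the board above; the board itself is a plain dict here.
WIP_LIMITS = {"In Progress": 3, "Code Review": 5, "Testing": 2, "Deployment": 1}


def can_pull(board, column):
    """True if `column` has room for one more item under its WIP limit."""
    return len(board.get(column, [])) < WIP_LIMITS[column]


board = {
    "In Progress": ["A", "B"],
    "Code Review": ["C", "D", "E", "F", "G"],
}
print(can_pull(board, "In Progress"))  # room for one more
print(can_pull(board, "Code Review"))  # full: review something first
```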

Strategy 2: Auto-Merge Trivial Changes

Not every PR needs thorough review. Automate approval for low-risk changes.

# GitLab CI with auto-merge for documentation changes

# .gitlab-ci.yml

auto_merge_docs:

stage: test

script:

- echo "Documentation change detected"

# `only` and `rules` cannot be combined in one job, so the merge request filter lives in `rules`

rules:

- if: '$CI_PIPELINE_SOURCE == "merge_request_event"'

changes:

- "*.md"

- "docs/**/*"

allow_failure: true

after_script:

- |

if [ "$CI_COMMIT_REF_NAME" = "docs" ]; then

# Auto-merge documentation PRs (the merge endpoint ends in /merge)

curl -X PUT \

-H "PRIVATE-TOKEN: $GITLAB_TOKEN" \

"$CI_API_V4_URL/projects/$CI_PROJECT_ID/merge_requests/$CI_MERGE_REQUEST_IID/merge" \

-d "merge_when_pipeline_succeeds=true"

fi

Documentation PRs get auto-approved and auto-merged when tests pass. This frees reviewers to focus on actual code changes.

Strategy 3: Parallelize Testing

Slow test suites kill lead time. Run tests in parallel to cut test time dramatically.

# pytest.ini for parallel testing (requires the pytest-xdist plugin)

[pytest]

# Use all available CPUs

addopts = -n auto

# Or specify an exact number of workers

# addopts = -n 8

If your test suite takes 30 minutes sequentially and you have 8 CPUs, parallel testing can cut it to roughly 4 minutes in the ideal case, where tests are independent and evenly sized.
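Real suites rarely hit the ideal number, because some of the run cannot be split across workers. A back-of-the-envelope estimate looks like this; the `serial_fraction` parameter is my own simplification for setup, shared fixtures, and the one slow test every suite has.

```python
def parallel_wall_time(total_minutes, workers, serial_fraction=0.0):
    """Estimate wall-clock time when only part of a suite parallelizes.

    `serial_fraction` is the share of the run that cannot be split;
    0.0 is the ideal case.
    """
    serial = total_minutes * serial_fraction
    parallel = total_minutes - serial
    return serial + parallel / workers


print(parallel_wall_time(30, 8))                       # ideal split
print(parallel_wall_time(30, 8, serial_fraction=0.1))  # 10% cannot be split
```

Even a 10% serial portion noticeably erodes the gain, so shared setup and slow individual tests are worth hunting down.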

Improving MTTR

Strategy 1: Automated Rollback

The fastest way to recover from a bad deployment is to automatically roll back.

# GitLab CI with automated rollback

# .gitlab-ci.yml

deploy_production:

stage: deploy

script:

- ./deploy.sh production

- ./health_check.sh || { ./rollback.sh production; exit 1; }

environment:

name: production

url: https://app.example.com

# No separate on_failure block: GitLab CI has no such job keyword, and the script above already rolls back when the health check fails

If the health check fails, the deployment automatically rolls back. No human intervention required.
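The health check deserves a little care, because a service often needs a few seconds before it answers. Here is a minimal sketch of the deploy, check, roll back flow with retries. The `deploy`, `probe`, and `rollback` callables are stand-ins for whatever your `deploy.sh`, `health_check.sh`, and `rollback.sh` do.

```python
import time


def healthy(probe, attempts=5, delay_seconds=0.0):
    """Call `probe` up to `attempts` times; succeed on the first True.

    `probe` is any zero-argument callable, e.g. one that requests a
    health endpoint and returns True on HTTP 200.
    """
    for attempt in range(attempts):
        try:
            if probe():
                return True
        except Exception:
            pass  # treat errors as a failed attempt and retry
        if attempt < attempts - 1:
            time.sleep(delay_seconds)
    return False


def deploy_with_rollback(deploy, probe, rollback):
    """Run deploy, then roll back if the service never becomes healthy."""
    deploy()
    if healthy(probe):
        return "deployed"
    rollback()
    return "rolled back"


calls = []
result = deploy_with_rollback(
    deploy=lambda: calls.append("deploy"),
    probe=lambda: False,  # simulate a service that never recovers
    rollback=lambda: calls.append("rollback"),
)
print(result, calls)
```

In a real pipeline you would set `delay_seconds` to a few seconds so the service has time to start before the check gives up.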

Strategy 2: Clear Runbooks

When incidents happen, nobody should be wondering what to do. Create runbooks that document common incident responses.

# Incident Runbook: Database Connection Pool Exhaustion

## Detection

- Alert: "High DB connection usage"

- Metric: db_connections > 90% of max_connections

## Impact

- Application becomes slow or unresponsive

- New requests fail with "connection timeout"

## Immediate Actions

1. Check connection pool size:

```sql
SELECT count(*) FROM pg_stat_activity;
```

2. Identify long-running queries:

```sql
SELECT pid, now() - pg_stat_activity.query_start AS duration, query
FROM pg_stat_activity
WHERE state = 'active'
ORDER BY duration DESC;
```

3. Kill long-running queries (if necessary):

```sql
SELECT pg_terminate_backend(pid) FROM pg_stat_activity WHERE pid = <problematic_pid>;
```

## Permanent Fix

- Increase connection pool size in application config

- Add connection pool monitoring

- Implement query timeouts

## Escalation

- If unresolved in 15 minutes: escalate to DBA team

- If unresolved in 30 minutes: declare SEV-2 incident

Keep runbooks in version control alongside your code. Update them after every incident.

Strategy 3: Better Alerting

Most teams have too many alerts, which makes them ignore all alerts. Focus on actionable alerts.

# Prometheus alerting rules

# alerts.yml

groups:

- name: application_alerts

rules:

# GOOD: Alert when error rate is high AND sustained

- alert: HighErrorRate

expr: rate(http_requests_total{status=~"5.."}[5m]) > 0.05

for: 5m # Sustained for 5 minutes

labels:

severity: critical

annotations:

summary: "High error rate on {{ $labels.instance }}"

description: "Error rate is {{ $value }} errors/sec for the last 5 minutes"

runbook: "https://runbooks.example.com/high-error-rate"

# BAD: Alert on every 5xx error (too noisy)

# - alert: Any5xxError

# expr: http_requests_total{status=~"5.."} > 0

# for: 1m

Good alerts are specific, actionable, and rare. If you get an alert, you should know exactly what to do.

Improving Change Failure Rate

Strategy 1: Shift-Left Testing

Test earlier in the development process. Bugs caught in unit tests are far cheaper to fix than bugs caught in production.

# Example: Test-driven development approach

# tests/test_user_service.py

import pytest

from user_service import UserService

def test_create_user_with_invalid_email():

"""Test that invalid emails are rejected"""

service = UserService()

with pytest.raises(ValueError, match="Invalid email"):

service.create_user(email="not-an-email", name="Test User")

def test_create_user_with_duplicate_email():

"""Test that duplicate emails are rejected"""

service = UserService()

service.create_user(email="duplicate@example.com", name="User 1")

with pytest.raises(ValueError, match="Email already exists"):

service.create_user(email="duplicate@example.com", name="User 2")

def test_create_user_success():

"""Test successful user creation"""

service = UserService()

user = service.create_user(email="test@example.com", name="Test User")

assert user.id is not None

assert user.email == "test@example.com"

assert user.name == "Test User"

Write tests BEFORE you write code. This forces you to think about edge cases upfront.
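For completeness, here is one minimal implementation that would make those tests pass. The real `UserService` is not shown in this post, so this in-memory version is purely a hypothetical stand-in, with a deliberately simple email check.

```python
import itertools
import re
from dataclasses import dataclass


@dataclass
class User:
    id: int
    email: str
    name: str


class UserService:
    """In-memory stand-in for the service under test (hypothetical)."""

    _EMAIL = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

    def __init__(self):
        self._users = {}
        self._ids = itertools.count(1)

    def create_user(self, email, name):
        if not self._EMAIL.match(email):
            raise ValueError("Invalid email")
        if email in self._users:
            raise ValueError("Email already exists")
        user = User(id=next(self._ids), email=email, name=name)
        self._users[email] = user
        return user


service = UserService()
print(service.create_user(email="test@example.com", name="Test User"))
```

Notice that the error messages match the `match=` patterns in the tests. That is the point of writing the tests first: they pin down the contract before the code exists.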

Strategy 2: Canary Deployments

Roll out changes to a small subset of users first. If something breaks, only a small percentage of users are affected.

# Kubernetes canary deployment

# k8s/canary-deployment.yaml

apiVersion: flagger.app/v1beta1

kind: Canary

metadata:

name: my-app

spec:

targetRef:

apiVersion: apps/v1

kind: Deployment

name: my-app

service:

port: 8080

analysis:

interval: 1m

threshold: 5

maxWeight: 50

stepWeight: 10

metrics:

- name: request-success-rate

thresholdRange:

min: 99

interval: 1m

- name: request-duration

thresholdRange:

max: 500

interval: 1m

This configuration:

- Starts by routing 10% of traffic to the new version

- Monitors success rate and latency

- If metrics are good, increases traffic by 10% every minute, up to the 50% `maxWeight`

- If checks fail 5 times (the `threshold`), automatically rolls back
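To build intuition for how `stepWeight`, `maxWeight`, and `threshold` interact, here is a toy simulation. It is a simplified model of the behavior described above, not a reimplementation of Flagger, and the check results are made up.

```python
def simulate_canary(check_results, step_weight=10, max_weight=50, threshold=5):
    """Walk through per-interval check results (True = metrics healthy).

    Returns ("promoted" | "rolled back" | "in progress", final_weight).
    Each passing check adds `step_weight` of traffic, and `threshold`
    failed checks abort the rollout.
    """
    weight, failures = 0, 0
    for ok in check_results:
        if ok:
            weight += step_weight
            if weight >= max_weight:
                return "promoted", max_weight
        else:
            failures += 1
            if failures >= threshold:
                return "rolled back", 0
    return "in progress", weight


print(simulate_canary([True] * 5))
print(simulate_canary([True, True] + [False] * 5))
```

With these settings a healthy release takes about five intervals to be promoted, which is the trade-off you are tuning: smaller steps mean less blast radius but a slower rollout.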

Strategy 3: Progressive Delivery

Similar to canary, but with more gradual rollout and automatic rollback.

# Using Argo Rollouts for progressive delivery

# rollout.yaml

apiVersion: argoproj.io/v1alpha1

kind: Rollout

metadata:

name: my-app

spec:

replicas: 5

strategy:

canary:

steps:

- setWeight: 20 # 20% to new version

- pause: {duration: 10m} # Wait 10 minutes

- setWeight: 40 # 40% to new version

- pause: {duration: 10m}

- setWeight: 60 # 60% to new version

- pause: {duration: 10m}

- setWeight: 80 # 80% to new version

- pause: {duration: 10m}

analysis:

templates:

- templateName: success-rate

args:

- name: service-name

value: my-app

This gradually shifts traffic over 40 minutes, giving you plenty of time to catch issues.

DORA Metrics and Platform Engineering

One of the most powerful ways to improve your DORA metrics is through internal developer platforms and platform engineering. Let me explain why.

How Platform Engineering Improves DORA Metrics

| Platform Engineering Capability | DORA Metric Impact | Example |

|---|---|---|

| Golden Paths (pre-configured, approved deployment templates) | Reduces lead time by 40-60% | Developers use standardized templates instead of building deployment pipelines from scratch |

| Self-Service Deployments (developers can deploy without tickets) | Increases deployment frequency by 3-5x | No more waiting for DevOps team to manually approve deployments |

| Standardized Observability (built-in monitoring and alerting) | Reduces MTTR by 50% | Every service automatically gets metrics, logs, and traces |

| Automated Guardrails (security scanning, policy enforcement) | Reduces change failure rate by 30-40% | Scans run automatically, preventing non-compliant code from reaching production |

| Service Catalog (central view of all services and dependencies) | Improves all metrics by reducing context switching | Developers quickly find service ownership, APIs, and documentation |

Concrete Example: Backstage on AWS

I’m currently building an internal developer platform using Backstage on AWS. Here’s how it improves our DORA metrics:

Golden Paths for Faster Lead Time:

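# backstage/templates/python-service/template.yaml
apiVersion: scaffolder.backstage.io/v1beta3
kind: Template
metadata:
  name: python-service
  title: Python Service Template
spec:
  type: service
  parameters:
    - title: Service Name
      required:
        - service_name
      properties:
        service_name:
          title: Service Name
          type: string
  steps:
    - id: scaffold
      name: Scaffold Project
      action: fetch:template  # built-in action that renders ./skeleton
      input:
        url: ./skeleton
        values:
          service_name: ${{ parameters.service_name }}
    # deploy:eks and monitor:prometheus are not built-in Backstage actions;
    # they are assumed to be custom actions registered in the scaffolder backend.
    - id: deploy
      name: Deploy to EKS
      action: deploy:eks
      input:
        cluster: production
        namespace: default
        service: ${{ parameters.service_name }}
    - id: monitor
      name: Setup Monitoring
      action: monitor:prometheus
      input:
        service: ${{ parameters.service_name }}
        metrics:
          - request_rate
          - error_rate
          - latency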

A developer clicks “Create Service”, fills in a name, and 5 minutes later:

- Code repository is created

- CI/CD pipeline is configured

- Service is deployed to EKS

- Monitoring is set up

What used to take 2-3 days of setup now takes 5 minutes, so the lead time for a new service's first change plummets.

Self-Service Deployments for Higher Frequency:

// Backstage plugin for self-service deployments
// plugins/deploy-service/src/components/DeployButton.tsx
import React from 'react';

type DeployButtonProps = {
  serviceName: string;
  environment: string;
};

export const DeployButton = ({ serviceName, environment }: DeployButtonProps) => {
  const handleDeploy = async () => {
    try {
      // Trigger deployment without any manual approval
      const response = await fetch('/api/deploy', {
        method: 'POST',
        // Tell the backend that the body is JSON
        headers: { 'Content-Type': 'application/json' },
        body: JSON.stringify({
          service: serviceName,
          environment: environment,
        }),
      });
      if (response.ok) {
        alert(`Deployed ${serviceName} to ${environment}`);
      } else {
        alert('Deployment failed');
      }
    } catch {
      // fetch rejects on network errors instead of returning a response
      alert('Deployment failed');
    }
  };

  return (
    <button onClick={handleDeploy}>
      Deploy to {environment}
    </button>
  );
};

Developers can deploy to production with a single button click. No tickets, no waiting for DevOps approval. Deployment frequency skyrockets.
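To check whether deployment frequency really moves after a change like this, I count production deployments per week before and after. Here is a minimal Python sketch, assuming you can export deployment timestamps from your deploy endpoint or CI system; `deployments_per_week` is an illustrative helper, not part of any Backstage or GitLab API.

```python
from collections import Counter
from datetime import datetime
from typing import Dict, Iterable, Tuple


def deployments_per_week(timestamps: Iterable[datetime]) -> Dict[Tuple[int, int], int]:
    """Count deployments per ISO week, keyed by (iso_year, iso_week)."""
    counts: Counter = Counter()
    for ts in timestamps:
        iso = ts.isocalendar()
        counts[(iso[0], iso[1])] += 1
    return dict(counts)


if __name__ == "__main__":
    # Hypothetical sample data: two deployments in one week, one in the next.
    sample = [
        datetime(2026, 1, 5, 10, 0),
        datetime(2026, 1, 6, 15, 30),
        datetime(2026, 1, 12, 9, 0),
    ]
    for (year, week), count in sorted(deployments_per_week(sample).items()):
        print(f"{year}-W{week:02d}: {count} deployment(s)")
```

Grouping by ISO week keeps the numbers comparable across month boundaries, which makes a before-and-after comparison easier to read on a dashboard.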

Standardized Observability for Faster MTTR:

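# Automatic observability provisioning
# resources/prometheus-rules.yaml
apiVersion: monitoring.coreos.com/v1
kind: PrometheusRule
metadata:
  name: dora-metrics
spec:
  groups:
    - name: dora
      rules:
        - alert: HighErrorRate
          # Share of 5xx responses per service over 5 minutes; fires above 5%.
          # Assumes http_requests_total carries a "service" label.
          expr: |
            sum by (service) (rate(http_requests_total{status=~"5.."}[5m]))
              / sum by (service) (rate(http_requests_total[5m])) > 0.05
          for: 5m
          annotations:
            summary: "High error rate for {{ $labels.service }}"
            runbook: "https://backstage.example.com/docs/{{ $labels.service }}/runbooks"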

Every service automatically gets:

- Metrics collection (Prometheus)

- Logging (CloudWatch)

- Tracing (X-Ray)

- Alerting (Alertmanager)

- Runbooks (linked in Backstage service catalog)

When an incident occurs, developers have immediate visibility into what’s broken and a link to the runbook. MTTR drops dramatically.
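To verify that MTTR actually drops, you need the same calculation before and after the change. Here is a minimal Python sketch, assuming each incident is recorded as a pair of timestamps for when it was detected and when service was restored; `mean_time_to_restore` and the sample data are illustrative, not taken from any particular incident tool.

```python
from datetime import datetime, timedelta
from typing import List, Optional, Tuple

# (detected_at, restored_at) for one incident
Incident = Tuple[datetime, datetime]


def mean_time_to_restore(incidents: List[Incident]) -> Optional[timedelta]:
    """Average time from detection to restoration, or None with no incidents."""
    if not incidents:
        return None
    total = sum(
        (restored - detected for detected, restored in incidents),
        timedelta(),
    )
    return total / len(incidents)


if __name__ == "__main__":
    # Hypothetical incidents: one restored in 30 minutes, one in 90 minutes.
    sample = [
        (datetime(2026, 1, 5, 10, 0), datetime(2026, 1, 5, 10, 30)),
        (datetime(2026, 1, 9, 14, 0), datetime(2026, 1, 9, 15, 30)),
    ]
    print(mean_time_to_restore(sample))  # 1:00:00
```

Returning `None` for an empty list avoids reporting a misleading MTTR of zero for a period with no incidents.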

I’m writing a comprehensive guide on Platform Engineering with Backstage on AWS that will cover this in depth. The key insight: platform engineering isn’t just about developer productivity—it’s a force multiplier for DORA metrics.

Sources and Further Reading

This post is based on years of practical experience implementing DORA metrics, but the research behind these metrics comes from Google’s DORA team. Here are the key sources:

- Google Cloud - DORA Research: https://cloud.google.com/blog/products/devops-sre/devops-research-and-assessment-2022
  - The original DORA research, now part of Google Cloud
  - Annual State of DevOps reports with updated benchmarks
  - Free resources for measuring and improving DORA metrics

- Accelerate: The Science of Lean Software and DevOps by Nicole Forsgren, Jez Humble, and Gene Kim
  - The book that popularized DORA metrics
  - Deep dive into the research behind the four key metrics
  - Essential reading for anyone serious about DevOps performance

- GitLab Value Stream Analytics: https://docs.gitlab.com/ee/user/analytics/value_stream_analytics.html
  - Documentation on GitLab’s built-in DORA metrics tracking
  - If you’re using GitLab, this is the easiest way to get started

- AWS DevOps Monitoring: https://docs.aws.amazon.com/devops/
  - AWS’s official DevOps documentation
  - Integrations with DORA metrics through CloudWatch and CodePipeline

- Grafana DORA Dashboards: https://grafana.com/grafana/dashboards/
  - Community-contributed dashboards for DORA metrics
  - Search for “DORA” to find pre-built dashboards you can import

- The Phoenix Project by Gene Kim, Kevin Behr, and George Spafford
  - Novel about DevOps transformation (fun, not academic)
  - Shows why measuring DevOps performance matters

- Project to Product by Mik Kersten
  - Framework for measuring software delivery at scale
  - Builds on DORA metrics with additional context for enterprise organizations

Measuring What Matters

When I started this post, I shared a story about being asked “How are we doing?” and not having a good answer. Here’s how that story ends:

After discovering DORA metrics, I spent a month building a simple dashboard using the GitLab API. I pulled deployment frequency, lead time, MTTR, and change failure rate for the past 90 days. Then I presented the data to my VP.

“We’re deploying once a week,” I said, pointing to the graph. “Lead time is around 4 days. MTTR is about 2 days. Our change failure rate is 35%. Across the board, we’re in the ‘medium’ category.”

He nodded, looking at the numbers. “Okay. So we’re average. What’s the plan?”

“Three things,” I said. “First, we’re implementing automated testing to reduce failures. Second, we’re moving to trunk-based development to speed up reviews. Third, we’re setting up automated rollback to cut MTTR in half.”