Kubelet Fine-Grained Authorization: Kill the nodes/proxy Anti-Pattern

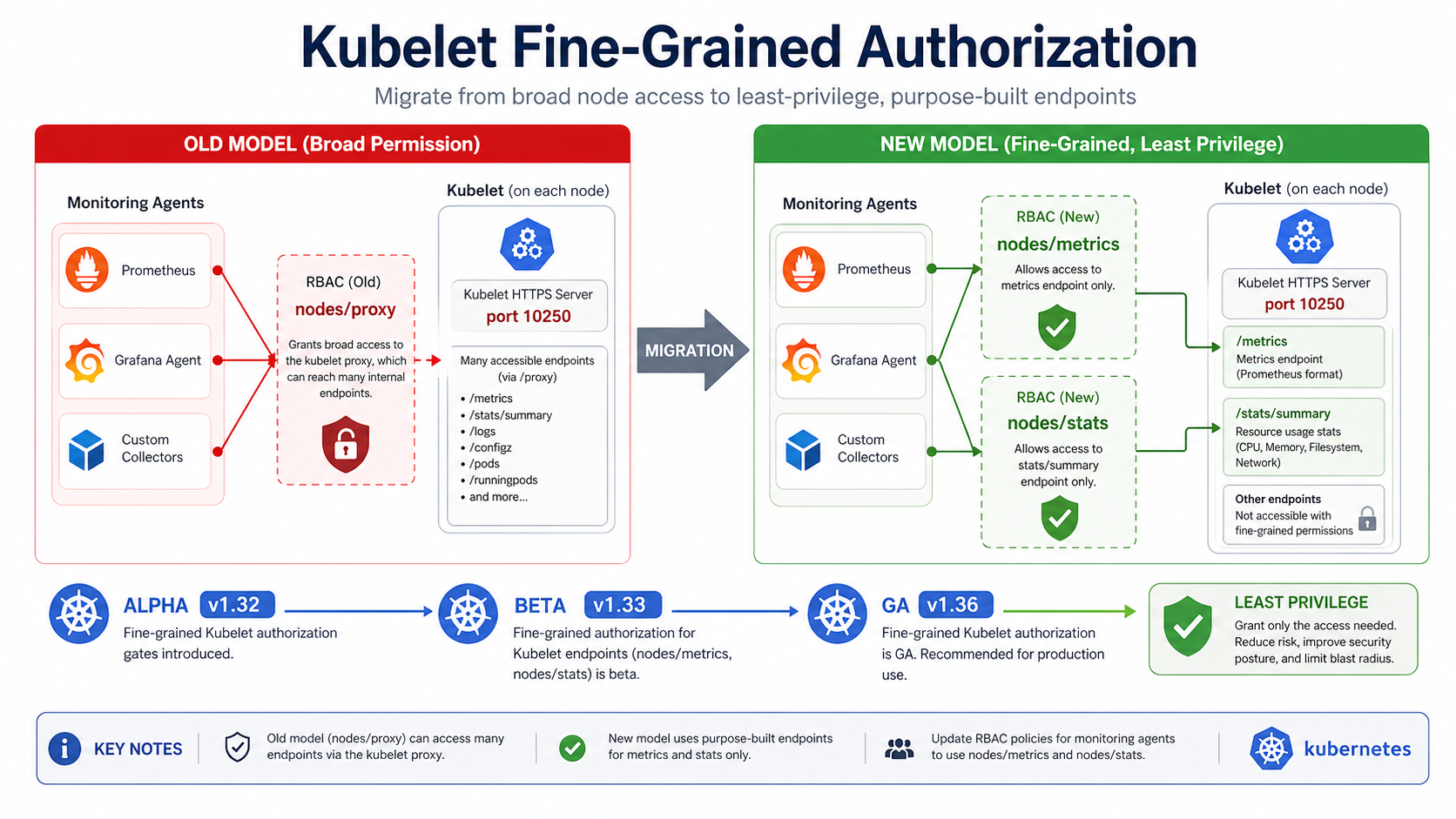

Kubernetes v1.36 makes fine-grained kubelet API authorization generally available. That sounds dry. It is not. It is the upstream answer to a nasty old habit: granting monitoring agents nodes/proxy because they need kubelet metrics.

The kubelet HTTPS API exposes sensitive paths. Metrics are there. Stats are there. Logs and pod listings are there. So is functionality that can become far more powerful than “read node telemetry.” The v1.36 feature lets clusters grant narrower subresources like nodes/metrics and nodes/stats instead of handing out the whole proxy.

What Changed

The feature path is clear: alpha in v1.32, beta and enabled by default in v1.33, GA in v1.36. Kubernetes now maps common kubelet API paths to dedicated node subresources. /metrics/* maps to nodes/metrics. /stats/* maps to nodes/stats. /logs/* maps to nodes/log. /pods and /runningPods/ can use nodes/pods.

There is backward compatibility. If the fine-grained check fails for certain endpoints, kubelet can fall back to nodes/proxy. That helps upgrades, but it also means old broad permissions can keep hiding in your ClusterRoles.

If you already use RBAC hardening for EKS, this is an easy audit item: find every subject with nodes/proxy, decide what it actually reads, and replace it with the narrowest subresource.

I would not start this migration with the scary workloads. Start with the boring scraper. Pick one monitoring ServiceAccount, read the exact kubelet paths it calls, and give it only the matching subresource. If that works for a week, move the next agent. The goal is not to make a heroic RBAC pull request. The goal is to stop treating “read metrics” and “proxy arbitrary kubelet API calls” as the same permission.

The Numbers That Matter

| Fact | Number or date | Source |
|---|---|---|
| Status | GA in Kubernetes v1.36 | Kubernetes Blog |
| Feature gate path | alpha v1.32, beta v1.33, GA v1.36 | Kubernetes Blog |
| Old broad permission | nodes/proxy | Kubernetes Blog |
| Common replacements | nodes/metrics, nodes/stats, nodes/pods | Kubernetes Blog |
| Kubelet endpoint | HTTPS API on port 10250 | Kubernetes Blog |

Those facts are the reason to act now, not next quarter. The GA date is fresh, the replacement subresources are concrete, and the operational impact is clear enough for an engineer to act on today.

How It Works in Practice

Start with read-only observability. Prometheus, Datadog agents, and custom DaemonSets often scrape kubelet metrics. Those should usually need nodes/metrics, maybe nodes/stats, not nodes/proxy. The Kubernetes blog explicitly calls out ecosystem adoption for monitoring tools.

Audit before you edit. Some in-house scripts use kubectl get --raw /api/v1/nodes/$NODE/proxy/... because that path worked for years. Replace the permission first in a staging cluster, then watch scrape errors, API server audit logs, and kubelet authorization failures.

The important part is not only passing the next compliance review. It is reducing what a compromised monitoring agent can do. A metrics agent should not be one permission typo away from node-wide container command execution.

There is a practical way to keep the rollout calm. Leave the old ClusterRole in place for a short test window, create the narrow role beside it, then bind one ServiceAccount to the new role in staging. Watch what breaks. If the agent only needed /metrics and /stats/summary, the change should be quiet. If it also reaches into logs or pod listings, you will see that quickly and can decide whether the extra access is legitimate.

Do not skip audit logs. They tell you which user, group, or ServiceAccount still asks for nodes/proxy. That list becomes your migration backlog. Some entries will be real platform tools. Some will be scripts nobody wants to own. Those scripts are exactly where broad kubelet permissions tend to live too long.
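One way to collect that list is an audit policy rule that records nodes/proxy requests. The fragment below is a minimal sketch, not a complete policy: merge the rules into whatever policy your API server already loads. It assumes you control the API server's audit policy file. On a managed control plane where you cannot edit the policy, filter the provider's audit log for resource nodes and subresource proxy instead.

```yaml
apiVersion: audit.k8s.io/v1
kind: Policy
rules:
  # Record who reaches the kubelet through the API server proxy path
  # (/api/v1/nodes/<node>/proxy/...). Metadata level keeps the user,
  # verb, and URL without logging response bodies.
  - level: Metadata
    resources:
      - group: ""
        resources: ["nodes/proxy"]
  # Agents that call the kubelet directly on port 10250 never hit the
  # proxy path. With webhook authorization, the kubelet asks the API
  # server via SubjectAccessReview, so logging those request bodies
  # shows which subresource was checked for which subject.
  - level: Request
    verbs: ["create"]
    resources:
      - group: "authorization.k8s.io"
        resources: ["subjectaccessreviews"]
```

The second rule is the noisier one, and the kubelet caches authorization decisions, so do not expect one audit event per scrape. Treat it as a way to discover callers, not to count requests.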

```yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: kubelet-metrics-reader
rules:
  - apiGroups: [""]
    resources: ["nodes/metrics", "nodes/stats"]
    verbs: ["get"]
```

Then bind that role to the ServiceAccount used by the scraper. Keep a separate admin role for operators that genuinely need broader kubelet access.
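A binding for that role could look like the sketch below. The ServiceAccount name prometheus-scraper and the namespace monitoring are placeholders I made up for illustration, so substitute whatever your scraper actually runs as. Nodes are cluster-scoped, which is why this is a ClusterRoleBinding and not a namespaced RoleBinding.

```yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kubelet-metrics-reader-binding   # hypothetical name
subjects:
  - kind: ServiceAccount
    name: prometheus-scraper             # hypothetical: your scraper's ServiceAccount
    namespace: monitoring                # hypothetical: the namespace it runs in
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: kubelet-metrics-reader           # the narrow role defined above
```

Once the scraper is healthy with this binding, remove its old binding to any role that carries nodes/proxy. Until you do, the broad permission still applies.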

Gotchas I Would Check First

- Mixed-version clusters may still rely on nodes/proxy fallback. Plan the migration after control plane and nodes support the feature.

- Some dashboards pull both metrics and stats. Give the exact subresources needed instead of guessing.

- The built-in admin role still has broad access by design. Do not use it for agents.
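For the mixed-version case, nodes still on v1.32 have the feature in alpha, so it is off unless you turn it on. A kubelet configuration along these lines is one way to do that. This is a sketch under assumptions: the gate name KubeletFineGrainedAuthz is taken from the upstream feature, not from this post, so confirm it against the feature gate reference for your exact version before rolling it out.

```yaml
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
featureGates:
  # Assumption: only needed where the gate is not already on by default
  # (alpha in v1.32). From v1.33 it is enabled by default.
  KubeletFineGrainedAuthz: true
authorization:
  # The kubelet must delegate authorization to the API server for any
  # RBAC rule on node subresources to take effect.
  mode: Webhook
```

On v1.33 and later you should not need the featureGates entry at all. Leaving it in place across upgrades is one more thing to clean up later.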

Decision Guide

| Existing use | Better permission |
|---|---|
| Scrape /metrics | nodes/metrics |
| Read /stats/summary | nodes/stats |
| Fetch kubelet logs | nodes/log |
| Full node troubleshooting by admin | dedicated admin role, not an app ServiceAccount |
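The same pattern extends to the other rows. As a sketch, a log collector that only fetches kubelet logs could get its own role instead of sharing the metrics one. The role name below is a placeholder I chose for illustration.

```yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: kubelet-log-reader   # hypothetical name
rules:
  - apiGroups: [""]
    resources: ["nodes/log"]   # covers kubelet /logs/* requests
    verbs: ["get"]
```

Keeping one role per use case makes the audit easier: each binding answers a single question about what that agent reads.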

For related background, keep these existing BitsLovers posts close: Kubernetes v1.36 changes, RBAC hardening for EKS, Prometheus and Grafana on EKS, CloudWatch Container Insights for EKS.

The migration is small, and the risk reduction is obvious. Treat nodes/proxy like an exception that needs a reason, not the default for observability.
