OPA + Terraform: Policy-as-Code Guardrails in 2026

The first time someone accidentally created a p4d.24xlarge instance in production, we started taking policy-as-code seriously. No one meant to. The Terraform code was correct, the pipeline ran fine, the instance came up. It just cost $32 per hour and had no business being there. By the time anyone noticed, we’d burned several hundred dollars and had a very awkward conversation about guardrails.

That was the moment “we’ll just rely on people being careful” stopped being an acceptable strategy.

Policy-as-code is the answer. Instead of hoping engineers remember every constraint, you codify those constraints and enforce them automatically—before anything gets deployed.

What OPA Is and Why It Fits Terraform

Open Policy Agent (OPA) is a general-purpose policy engine. It takes structured data as input, evaluates it against rules written in OPA’s policy language, Rego, and produces a decision. That’s it. Because inputs and outputs can be anything JSON-shaped, OPA works across an unusually wide range of contexts: HTTP API authorization, Kubernetes admission control, Terraform plan validation.

Rego is declarative: you describe what must be true rather than writing step-by-step logic. That takes some getting used to, because it doesn’t read like Python or Go. But once it clicks, it’s genuinely expressive for policy logic.

Terraform’s role in this is straightforward. When you run terraform plan, Terraform outputs a plan describing what it intends to create, update, or destroy. That plan can be exported as JSON. That JSON is data. OPA can evaluate that data against your policies. If the policies pass, you continue to terraform apply. If they fail, the pipeline stops.

This is not speculative enforcement. You’re evaluating exactly what Terraform is about to do, not a template or a static representation of your code.

Conftest: The Bridge Between Terraform and OPA

OPA itself ships as a CLI, a REST server, and a Go library. You could call OPA directly, but for Terraform use cases Conftest is the practical tool: a CLI built on OPA that takes a file and a set of Rego policies and reports pass/fail.

Install it:

brew install conftest
# or
curl -L https://github.com/open-policy-agent/conftest/releases/download/v0.51.0/conftest_0.51.0_Linux_x86_64.tar.gz | tar xz
sudo mv conftest /usr/local/bin/

The workflow with Terraform is:

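terraform plan -out=tfplan.binary
terraform show -json tfplan.binary > tfplan.json
conftest test tfplan.json --policy ./policies/ --all-namespaces

The tfplan.json file is what you’re evaluating. It contains the full set of intended resource changes, plus the planned values and the configuration that produced them. Conftest reads the Rego files in the policies directory and runs them against the plan JSON. By default Conftest only evaluates rules in the main package. The --all-namespaces flag tells it to evaluate rules such as deny and warn in every package, and the policies in this post need that because they live in packages like terraform.aws.s3 and terraform.aws.tagging.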

Understanding the Terraform Plan JSON Structure

Before writing policies, you need to understand what you’re working with. The plan JSON has a structure worth knowing:

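{
  "resource_changes": [
    {
      "address": "aws_instance.web_server",
      "type": "aws_instance",
      "name": "web_server",
      "change": {
        "actions": ["create"],
        "after": {
          "instance_type": "t3.micro",
          "tags": {
            "Environment": "production",
            "Owner": "platform-team"
          }
        }
      }
    }
  ]
}

Your policies operate on resource_changes. Each change has the following fields:

- an address (such as aws_instance.web_server)
- a type (the Terraform resource type)
- a name
- the list of actions being taken: create, update, delete, or a pair such as ["delete", "create"] for a replacement
- an after block showing the planned attribute values

Most policies focus on after. One caveat: some attributes aren’t known until apply, such as the IDs and ARNs of new resources. These are absent from after and flagged in after_unknown instead, so a policy can’t rely on them.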
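To make that structure concrete, here is a minimal Python sketch that loads a plan JSON document and picks out the changes a typical policy inspects. It is written in Python purely for illustration; Conftest doesn’t use it. The function name changes_to_evaluate is hypothetical.

```python
import json

# Actions that leave planned attribute values in "after". A replacement shows up
# as ["delete", "create"] or ["create", "delete"] and is included here.
RELEVANT_ACTIONS = {"create", "update"}


def changes_to_evaluate(plan: dict) -> list[tuple[str, str, dict]]:
    """Return (type, name, after) for every create/update in resource_changes."""
    selected = []
    for rc in plan.get("resource_changes", []):
        change = rc.get("change", {})
        if RELEVANT_ACTIONS.intersection(change.get("actions", [])):
            # Defensive: treat a null "after" as an empty dict.
            selected.append((rc["type"], rc["name"], change.get("after") or {}))
    return selected


if __name__ == "__main__":
    plan_json = """
    {"resource_changes": [
      {"type": "aws_instance", "name": "web_server",
       "change": {"actions": ["create"],
                  "after": {"instance_type": "t3.micro",
                            "tags": {"Environment": "production",
                                     "Owner": "platform-team"}}}},
      {"type": "aws_instance", "name": "old_server",
       "change": {"actions": ["delete"], "after": null}}
    ]}
    """
    for rtype, name, after in changes_to_evaluate(json.loads(plan_json)):
        print(rtype, name, after.get("instance_type"))  # aws_instance web_server t3.micro
```

The pure delete is skipped, which is also why most of the Rego rules below start by filtering on create and update.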

Real Policies: The Rules That Actually Matter

No Public S3 Buckets

S3 public access has been the source of more data breaches than most people want to admit. This policy blocks any S3 bucket that doesn’t have public access blocked:

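package terraform.aws.s3

# rego.v1 enables the if/contains/in keywords on OPA 0.59+ and is accepted by OPA 1.x.
import rego.v1

# All four settings must be true for a bucket's public access to be fully blocked.
required_settings := {"block_public_acls", "block_public_policy", "ignore_public_acls", "restrict_public_buckets"}

deny contains msg if {
    some resource in input.resource_changes
    resource.type == "aws_s3_bucket_public_access_block"
    some action in resource.change.actions
    action in {"create", "update"}
    some setting in required_settings
    not resource.change.after[setting] == true
    msg := sprintf(
        "S3 bucket public access block '%s' must have %s = true",
        [resource.address, setting]
    )
}

deny contains msg if {
    some resource in input.resource_changes
    resource.type == "aws_s3_bucket"
    some action in resource.change.actions
    action in {"create", "update"}
    not has_public_access_block(resource.address)
    msg := sprintf(
        "S3 bucket '%s' must have an associated aws_s3_bucket_public_access_block resource",
        [resource.address]
    )
}

# A new bucket's ID is unknown at plan time, so after.bucket can't be matched.
# Instead, match the block's configuration references, which include both
# "aws_s3_bucket.logs.id" and "aws_s3_bucket.logs" for bucket = aws_s3_bucket.logs.id.
# Assumes root-module resources declared without count or for_each.
has_public_access_block(bucket_address) if {
    some block in input.configuration.root_module.resources
    block.type == "aws_s3_bucket_public_access_block"
    block.expressions.bucket.references[_] == bucket_address
}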

This covers two scenarios: a public access block that isn’t configured correctly, and an S3 bucket that doesn’t have a public access block resource at all.
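The matching logic is the subtle part, so here is the same idea mirrored in Python. It is a sketch for reasoning about plan data, not something Conftest runs. It checks all four public access block settings. It links a block to its bucket through the expression references in the plan’s configuration section rather than through after.bucket, because a reference like aws_s3_bucket.logs.id is usually unknown at plan time. It assumes root-module resources without count or for_each, and the function names are hypothetical.

```python
# All four settings must be true for public access to be fully blocked.
REQUIRED_SETTINGS = (
    "block_public_acls",
    "block_public_policy",
    "ignore_public_acls",
    "restrict_public_buckets",
)


def _creates_or_updates(rc: dict) -> bool:
    return bool({"create", "update"} & set(rc["change"]["actions"]))


def s3_violations(plan: dict) -> list[str]:
    # Collect every address referenced by a public access block's "bucket"
    # argument, e.g. ["aws_s3_bucket.logs.id", "aws_s3_bucket.logs"].
    # Assumption: root-module resources only, no count or for_each.
    referenced = set()
    root = plan.get("configuration", {}).get("root_module", {})
    for res in root.get("resources", []):
        if res.get("type") == "aws_s3_bucket_public_access_block":
            bucket_expr = res.get("expressions", {}).get("bucket", {})
            referenced.update(bucket_expr.get("references", []))

    msgs = []
    for rc in plan.get("resource_changes", []):
        if not _creates_or_updates(rc):
            continue
        after = rc["change"].get("after") or {}
        if rc["type"] == "aws_s3_bucket_public_access_block":
            for setting in REQUIRED_SETTINGS:
                if after.get(setting) is not True:
                    msgs.append(f"{rc['address']}: {setting} must be true")
        elif rc["type"] == "aws_s3_bucket" and rc["address"] not in referenced:
            msgs.append(f"{rc['address']}: no aws_s3_bucket_public_access_block references it")
    return msgs
```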

Mandatory Tags

Tags are how you track cost, ownership, and environment in AWS. When they’re missing, you lose visibility. This policy requires specific tags on every EC2 instance, S3 bucket, and RDS cluster being created or updated:

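package terraform.aws.tagging

# Without this import (or OPA 1.x), the "in" keyword below won't parse.
import rego.v1

required_tags := ["Environment", "Owner", "CostCenter"]

tagged_types := {"aws_instance", "aws_s3_bucket", "aws_rds_cluster"}

deny contains msg if {
    some resource in input.resource_changes
    resource.type in tagged_types
    some action in resource.change.actions
    action in {"create", "update"}
    some tag in required_tags
    # Undefined when the tag is missing or the resource has no tags at all.
    not resource.change.after.tags[tag]
    msg := sprintf(
        "Resource '%s' (%s) is missing required tag: %s",
        [resource.name, resource.type, tag]
    )
}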

The required_tags array is the place you update when your organization’s tagging policy changes. The rule iterates over every created or updated resource of a covered type and over every required tag. It generates one violation for each missing resource/tag pair.
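A Python mirror of the tagging rule makes the one-violation-per-missing-pair behavior easy to see. It’s an illustrative sketch with hypothetical names, not a replacement for the Rego. Like the rule, it reads tags rather than tags_all. Tags applied through the AWS provider’s default_tags block would therefore not count toward the requirement.

```python
REQUIRED_TAGS = ["Environment", "Owner", "CostCenter"]
TAGGED_TYPES = {"aws_instance", "aws_s3_bucket", "aws_rds_cluster"}


def tag_violations(plan: dict) -> list[str]:
    msgs = []
    for rc in plan.get("resource_changes", []):
        change = rc["change"]
        if rc["type"] not in TAGGED_TYPES:
            continue
        if not {"create", "update"} & set(change["actions"]):
            continue
        # "tags" is null in the plan when a resource declares no tags at all.
        tags = (change.get("after") or {}).get("tags") or {}
        for tag in REQUIRED_TAGS:
            if tag not in tags:
                msgs.append(
                    f"Resource '{rc['name']}' ({rc['type']}) is missing required tag: {tag}"
                )
    return msgs
```

Run against the sample plan from earlier, the web_server instance produces exactly one message, for CostCenter.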

Instance Type Allowlist

Not all instance types belong in every environment. You can maintain an explicit allowlist:

package terraform.aws.instances

# Needed for "in", "contains", and "if" on OPA 0.x; accepted by OPA 1.x.
import rego.v1

allowed_instance_types := {
    "t3.micro", "t3.small", "t3.medium", "t3.large",
    "m5.large", "m5.xlarge", "m5.2xlarge",
    "c5.large", "c5.xlarge", "c5.2xlarge"
}

deny contains msg if {
    some resource in input.resource_changes
    resource.type == "aws_instance"
    some action in resource.change.actions
    action in {"create", "update"}
    instance_type := resource.change.after.instance_type
    not instance_type in allowed_instance_types
    msg := sprintf(
        "EC2 instance '%s' uses disallowed instance type '%s'. Allowed types: %v",
        [resource.name, instance_type, allowed_instance_types]
    )
}

This is exactly the policy that would have caught that p4d.24xlarge.
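A single allowlist treats every environment the same. The Python sketch below shows one hypothetical refinement: it keys the allowlist on the Environment tag and falls back to the most restrictive list when the tag is missing or unrecognized. The per-environment lists and the fallback choice are assumptions for illustration, not part of the policy above.

```python
# Hypothetical per-environment allowlists; adjust to your organization.
ALLOWED_BY_ENV = {
    "production": {"m5.large", "m5.xlarge", "m5.2xlarge", "c5.large", "c5.xlarge", "c5.2xlarge"},
    "staging": {"t3.medium", "t3.large", "m5.large"},
    "development": {"t3.micro", "t3.small", "t3.medium"},
}
# Untagged or unknown environments fall back to the most restrictive list.
DEFAULT_ENV = "development"


def instance_type_violations(plan: dict) -> list[str]:
    msgs = []
    for rc in plan.get("resource_changes", []):
        change = rc["change"]
        if rc["type"] != "aws_instance" or not {"create", "update"} & set(change["actions"]):
            continue
        after = change.get("after") or {}
        env = (after.get("tags") or {}).get("Environment", DEFAULT_ENV)
        allowed = ALLOWED_BY_ENV.get(env, ALLOWED_BY_ENV[DEFAULT_ENV])
        itype = after.get("instance_type")
        if itype not in allowed:
            msgs.append(f"EC2 instance '{rc['name']}' ({env}) uses disallowed instance type '{itype}'")
    return msgs
```

In Rego, the same shape would be an object of sets indexed by environment name.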

Cost Threshold Estimation

OPA doesn’t ship with AWS pricing data, and calling the AWS Pricing API from inside a policy evaluation isn’t practical. Instead, you can hardcode hourly costs for common instance types and estimate monthly spend from the plan:

package terraform.aws.cost

import rego.v1

# Hardcoded hourly on-demand rates in USD (us-east-1, Linux).
hourly_costs := {
    "t3.micro": 0.0104,
    "t3.small": 0.0208,
    "t3.medium": 0.0416,
    "t3.large": 0.0832,
    "m5.large": 0.096,
    "m5.xlarge": 0.192,
    "m5.2xlarge": 0.384,
    "c5.large": 0.085,
    "c5.xlarge": 0.17,
    "c5.2xlarge": 0.34
}

monthly_cost_limit := 1000

# Undefined for instance types missing from hourly_costs, so those
# instances are silently left out of the total.
instance_cost(resource) := cost if {
    resource.type == "aws_instance"
    hourly := hourly_costs[resource.change.after.instance_type]
    cost := hourly * 730 # roughly 730 hours in an average month
}

total_monthly_cost := sum([cost |
    some resource in input.resource_changes
    "create" in resource.change.actions
    cost := instance_cost(resource)
])

warn contains msg if {
    total_monthly_cost > monthly_cost_limit
    msg := sprintf(
        "Estimated monthly cost for new EC2 instances ($%.2f) exceeds threshold ($%d). Review before applying.",
        [total_monthly_cost, monthly_cost_limit]
    )
}

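Note this uses warn rather than deny. Cost estimates are a signal, not a hard block, and your team might have a legitimate reason to exceed the threshold. Conftest reports warnings without failing unless you pass --fail-on-warn. The pipeline therefore surfaces the estimate, and the manual apply step becomes the point where someone explicitly acknowledges it. Also keep in mind that instance types missing from hourly_costs are silently left out of the total, so the allowlist remains the real guard against something like a p4d.24xlarge.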
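The arithmetic is easy to sanity-check by hand: hourly rate × 730 hours, summed over instances being created. The Python sketch below reproduces it with the same rate table. It also returns the instance types it couldn’t price, mirroring the cases where the Rego function is undefined. The estimate function is hypothetical.

```python
HOURLY_COSTS = {
    "t3.micro": 0.0104, "t3.small": 0.0208, "t3.medium": 0.0416, "t3.large": 0.0832,
    "m5.large": 0.096, "m5.xlarge": 0.192, "m5.2xlarge": 0.384,
    "c5.large": 0.085, "c5.xlarge": 0.17, "c5.2xlarge": 0.34,
}
HOURS_PER_MONTH = 730  # 24 * 365 / 12, rounded
MONTHLY_COST_LIMIT = 1000


def estimate(plan: dict) -> tuple[float, list[str]]:
    """Return (estimated monthly cost of created instances, unpriced instance types)."""
    total, unpriced = 0.0, []
    for rc in plan.get("resource_changes", []):
        if rc["type"] != "aws_instance" or "create" not in rc["change"]["actions"]:
            continue
        itype = rc["change"]["after"]["instance_type"]
        if itype in HOURLY_COSTS:
            total += HOURLY_COSTS[itype] * HOURS_PER_MONTH
        else:
            unpriced.append(itype)  # the Rego version contributes nothing for these
    return round(total, 2), unpriced
```

Worked through:

- Four m5.2xlarge instances come to 4 × 0.384 × 730 = $1,121.28 a month, which would trigger the warning.
- Three come to $840.96, which would not.
- A lone p4d.24xlarge comes to $0.00.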

GitLab CI Pipeline Integration

The integration with GitLab CI follows the standard Terraform pipeline pattern: plan, evaluate, apply. Policy evaluation fits cleanly between plan and apply.

stages:
  - validate
  - plan
  - policy
  - apply

variables:
  TF_ROOT: ${CI_PROJECT_DIR}
  TF_VERSION: "1.8.0"
  CONFTEST_VERSION: "0.51.0"

image:
  name: hashicorp/terraform:${TF_VERSION}
  entrypoint: [""]

.terraform_init: &terraform_init
  before_script:
    - terraform init -backend-config="bucket=${TF_STATE_BUCKET}"

validate:
  stage: validate
  <<: *terraform_init
  script:
    - terraform validate

plan:
  stage: plan
  <<: *terraform_init
  script:
    - terraform plan -out=tfplan.binary
    - terraform show -json tfplan.binary > tfplan.json
  artifacts:
    paths:
      - tfplan.binary
      - tfplan.json
    expire_in: 1 hour

policy_check:
  stage: policy
  image: alpine:3.19
  before_script:
    - apk add --no-cache curl tar
    - |
      curl -fsSL "https://github.com/open-policy-agent/conftest/releases/download/v${CONFTEST_VERSION}/conftest_${CONFTEST_VERSION}_Linux_x86_64.tar.gz" \
        | tar xz -C /usr/local/bin/
  script:
    # --all-namespaces: the policies live in terraform.aws.* packages, not "main"
    - conftest test tfplan.json --policy ./policies/ --all-namespaces --output table
  dependencies:
    - plan

apply:
  stage: apply
  <<: *terraform_init
  script:
    - terraform apply -auto-approve tfplan.binary
  dependencies:
    - plan
  rules:
    - if: $CI_COMMIT_BRANCH == "main"
      when: manual

The policy_check job evaluates the tfplan.json artifact produced by plan. Conftest exits non-zero when any deny rule fires, so the job fails. Because apply lives in a later stage, GitLab never makes the manual apply job available, and the pipeline stops at the policy gate. Warnings on their own don’t fail the job.

If you run Terraform against several environments from GitLab CI, environment-scoped CI/CD variables pair well with environment-specific policy files, for example a stricter instance allowlist for production.

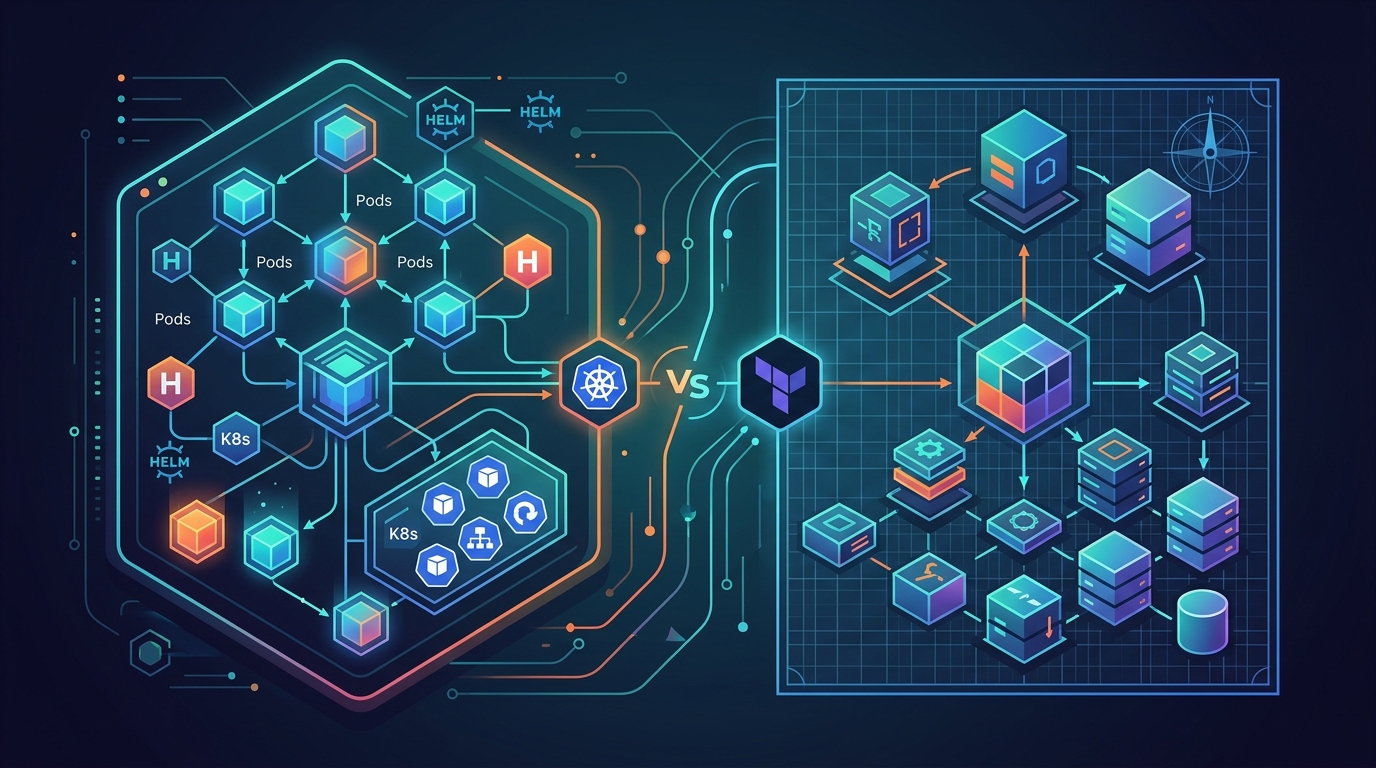

Sentinel vs OPA: Making the Choice

If you’re using Terraform Cloud or Terraform Enterprise, you’ll encounter Sentinel, which is HashiCorp’s built-in policy framework. The comparison is worth understanding.

Sentinel is tightly integrated with the Terraform workflow inside HashiCorp’s commercial offerings. You don’t need to export plan JSON or run a separate CLI, because policy evaluation is a native step of each run. Each policy is assigned one of three enforcement levels:

- advisory: report the failure and continue
- soft-mandatory: block the run unless an authorized user overrides it
- hard-mandatory: block no matter what

Policies are written in Sentinel’s own language, whose syntax is loosely similar to Python.

OPA with Conftest works anywhere. It’s not tied to Terraform Cloud. If you’re running self-hosted GitLab CI or GitHub Actions or any other CI system, OPA is the practical choice. The Rego language is more general-purpose, which matters if you’re also using OPA for Kubernetes admission control—you reuse the same tooling and knowledge. The tradeoff is that you manage the integration yourself.

For teams on Terraform Cloud (now HCP Terraform), Sentinel makes sense, although HCP Terraform can also run OPA policy sets natively. For everyone else, OPA is the standard.
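The enforcement levels boil down to a small decision table. The Python model below is only an illustration, not Sentinel’s API. Conftest has just two outcomes: warn, roughly matching advisory, and deny, roughly matching hard-mandatory. The soft-mandatory override has no direct Conftest equivalent, which is the role the manual apply job plays in the GitLab pipeline above.

```python
from enum import Enum


class Level(Enum):
    ADVISORY = "advisory"
    SOFT_MANDATORY = "soft-mandatory"
    HARD_MANDATORY = "hard-mandatory"


def run_may_proceed(level: Level, policy_passed: bool, overridden: bool = False) -> bool:
    """Model of whether a run continues after a policy check at a given level."""
    if policy_passed or level is Level.ADVISORY:
        return True  # passing policies never block; advisory failures only report
    if level is Level.SOFT_MANDATORY:
        return overridden  # blocked unless an authorized user overrides
    return False  # hard-mandatory failures cannot be overridden
```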

Testing Your Rego Policies

Policies have bugs. The only way to trust your policies is to test them. OPA has a built-in test framework that runs Rego test files alongside your policy files.

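Start with a fixture: a trimmed-down plan JSON that mimics the change you want to catch. Saved to a file, it can be fed straight to conftest test as a quick end-to-end check, and the unit tests that follow inline the same structure: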

{
  "resource_changes": [
    {
      "type": "aws_instance",
      "name": "bad_instance",
      "change": {
        "actions": ["create"],
        "after": {
          "instance_type": "p4d.24xlarge",
          "tags": {
            "Environment": "production"
          }
        }
      }
    }
  ]
}

Write a test file (policies/terraform_test.rego):

package terraform.aws.instances

import rego.v1

test_deny_disallowed_instance_type if {
    msgs := deny with input as {"resource_changes": [{
        "type": "aws_instance",
        "name": "bad_instance",
        "change": {
            "actions": ["create"],
            "after": {"instance_type": "p4d.24xlarge"}
        }
    }]}

    # Exactly one violation: the disallowed instance type.
    count(msgs) == 1
}

test_allow_approved_instance_type if {
    msgs := deny with input as {"resource_changes": [{
        "type": "aws_instance",
        "name": "good_instance",
        "change": {
            "actions": ["create"],
            "after": {"instance_type": "t3.medium"}
        }
    }]}

    # An approved type must produce no violations at all.
    count(msgs) == 0
}

Run the tests:

opa test policies/ -v

Test names starting with test_ are automatically discovered and run. You should have positive tests (policy correctly denies bad input) and negative tests (policy correctly allows good input). Both matter. A policy that denies everything passes all your denial tests but breaks everything else.
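The point about needing both kinds of test is easy to demonstrate. In this Python sketch, deny_everything is a deliberately broken policy. It passes the positive test exactly as well as the real allowlist check, and only the negative test catches it. Both policy functions are hypothetical stand-ins for the Rego rule, reduced to taking an instance type string.

```python
ALLOWED_INSTANCE_TYPES = {
    "t3.micro", "t3.small", "t3.medium", "t3.large",
    "m5.large", "m5.xlarge", "m5.2xlarge",
    "c5.large", "c5.xlarge", "c5.2xlarge",
}


def allowlist_policy(instance_type: str) -> list[str]:
    """Stand-in for the Rego rule: one message per disallowed type."""
    if instance_type in ALLOWED_INSTANCE_TYPES:
        return []
    return [f"disallowed instance type '{instance_type}'"]


def deny_everything(instance_type: str) -> list[str]:
    """A buggy policy that rejects every input."""
    return [f"disallowed instance type '{instance_type}'"]


def positive_test(policy) -> bool:
    # Bad input must produce at least one denial.
    return len(policy("p4d.24xlarge")) > 0


def negative_test(policy) -> bool:
    # Good input must produce no denials.
    return len(policy("t3.medium")) == 0


if __name__ == "__main__":
    for policy in (allowlist_policy, deny_everything):
        print(policy.__name__, positive_test(policy), negative_test(policy))
    # allowlist_policy True True
    # deny_everything True False
```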

Building an Organization-Wide Policy Library

Once you have more than one team using Terraform, you want policies enforced consistently. The pattern that works is a shared policy repository.

Structure it like this:

policies/
  aws/
    s3.rego
    ec2.rego
    iam.rego
    networking.rego
    tagging.rego
    cost.rego
  gcp/
    compute.rego
    storage.rego
  base/
    naming.rego
    required_tags.rego
tests/
  aws/
    s3_test.rego
    ec2_test.rego
  gcp/
    compute_test.rego

Teams pull this repository in their CI pipeline using a Git submodule or by referencing a versioned release. When a new policy lands, it gets tagged. Teams pin to a version and upgrade on their own schedule—this avoids the situation where a new mandatory tag requirement breaks ten pipelines at once without warning.

Conftest can also pull policies from OCI registries and HTTP endpoints, which gives you a centralized distribution mechanism without requiring every team to manage the submodule. By default, conftest pull downloads into a policy directory, so point conftest test at that:

conftest pull https://your-policy-server.internal/policies.tar.gz
conftest test tfplan.json --policy ./policy/ --all-namespaces

The versioning discipline is the part most teams skip and later regret. Tag your releases. Have a changelog. Give teams a migration window. Policy libraries that move too fast stop being trusted.

For more on structuring Terraform code that these policies will evaluate, see the Terraform testing guide and the discussion on Terraform vs OpenTofu in 2026.

When Policy-as-Code Is Overkill

Not every team needs this. The complexity is real and the maintenance cost doesn’t disappear.

If you have one Terraform repository, two engineers who talk to each other daily, and a straightforward AWS setup with no shared infrastructure, Conftest adds ceremony without proportional value. A code review catches the same issues. The cognitive overhead of maintaining Rego policies and keeping test fixtures current is not free.

Policy-as-code earns its keep when teams scale past the point where direct communication covers everything. When you have multiple teams deploying infrastructure independently, when audit trails matter for compliance, when a single misconfiguration can have significant cost or security consequences—that’s when the investment pays off.

It also makes sense when you have a platform team responsible for guardrails that product teams shouldn’t need to think about. The policies live in one place, the platform team owns them, and product teams get automated enforcement as a side effect of using the standard pipeline. Nobody has to remember the rules because the rules run automatically.

The p4d.24xlarge incident was the moment that shifted our thinking. Before that, we were the team that would definitely remember to check instance types. After that, we were the team with a policy.
