Terraform Testing in 2026: Native Tests, Terratest, and OPA

I shipped Terraform code without tests for years. Then a terraform apply deleted a production database because a conditional flipped. The resource had a lifecycle { prevent_destroy = true } block — but a variable change caused Terraform to destroy the old resource and create a new one instead of updating it. The prevent_destroy doesn’t stop a replacement. It just yells at you. We had backups. We recovered. But it took four hours on a Saturday night, and I spent the whole time explaining to my manager why nobody had caught this before running it against prod.

That was the last time I pushed Terraform without tests.

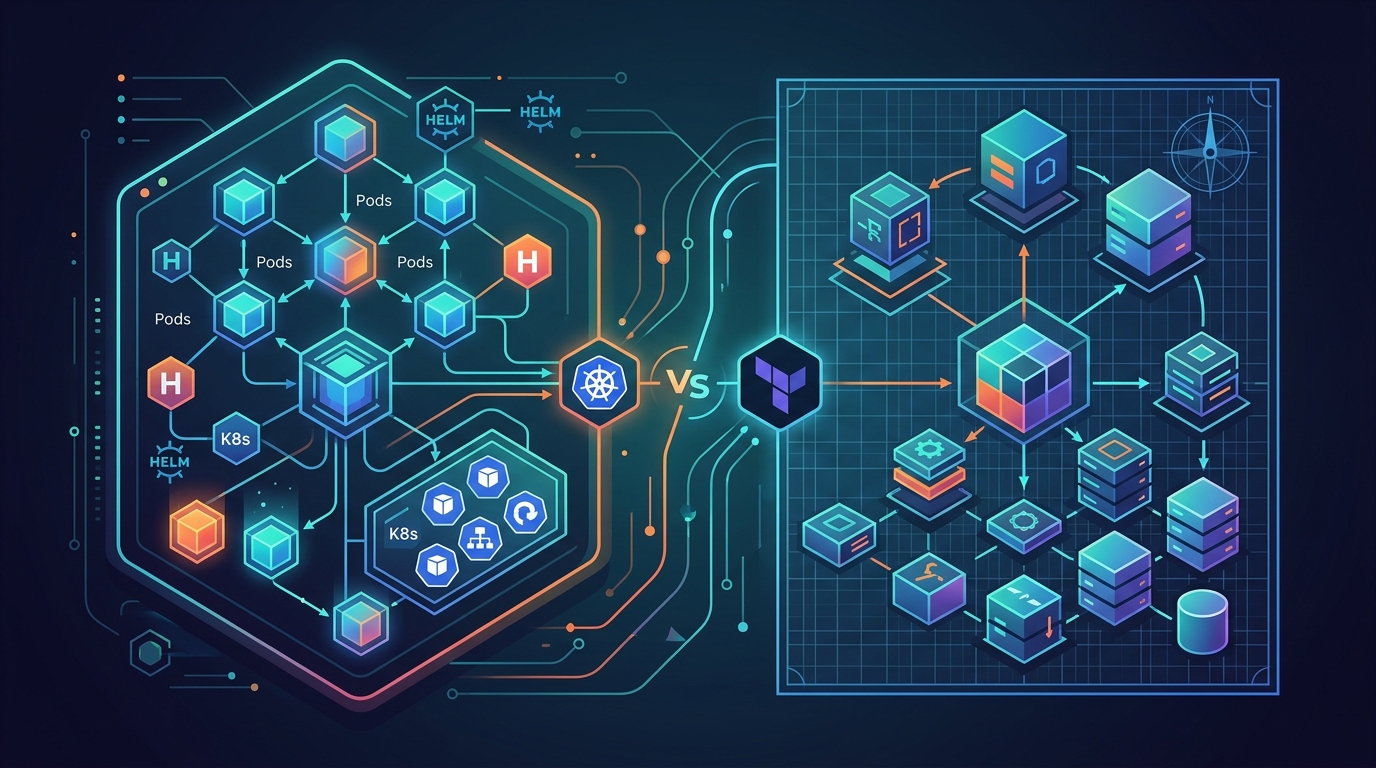

In 2026 we have three serious testing layers for Terraform code: the native terraform test command with .tftest.hcl files, Terratest for real integration tests written in Go, and OPA/Conftest for policy validation on plan output. These aren’t alternatives. They’re a pyramid. Use all three, know when each one applies, and your infrastructure code becomes as testable as your application code.

The Testing Pyramid for Infrastructure

Before we go deep on each tool, the mental model matters.

Unit tests cover your Terraform module logic in isolation. They check that your code computes the right values, produces the expected resource configurations, and handles variables correctly — without creating anything in AWS. They run in seconds. They catch configuration mistakes before any API call happens.

Integration tests spin up real infrastructure in a real account. They deploy your module, run assertions against the actual AWS resources, and tear everything down. They catch IAM permission issues, resource limit errors, and the class of bugs that only appear when your configuration hits a real API.

Policy tests run against the Terraform plan JSON. They don’t create anything. They just read the plan and fail if it violates your rules — like “never create an S3 bucket without encryption” or “this environment cannot delete an RDS instance.”

You want all three. Unit tests give you fast feedback in every commit. Policy tests enforce guardrails in CI before anyone can apply dangerous changes. Integration tests give you the confidence that the whole thing works before you promote to production.

Native terraform test: The Unit Testing Layer

HashiCorp shipped the terraform test command in Terraform 1.6 and it matured significantly through 2024 and 2025. If you’re on OpenTofu, the same syntax works there too. For root configs and simpler modules, this is your first line of defense.

Test files use the .tftest.hcl extension. They sit in your module directory or in a dedicated tests/ subdirectory. The runner picks them up automatically.

Here’s a real test file for an S3 module:

    # tests/s3_bucket.tftest.hcl

    variables {
      bucket_name   = "test-bucket-unit"
      environment   = "test"
      force_destroy = true
    }

    run "bucket_has_correct_tags" {
      command = plan

      assert {
        condition     = aws_s3_bucket.this.tags["Environment"] == "test"
        error_message = "S3 bucket is missing the correct Environment tag"
      }

      assert {
        condition     = aws_s3_bucket.this.tags["ManagedBy"] == "terraform"
        error_message = "S3 bucket is missing the ManagedBy tag"
      }
    }

    run "versioning_enabled_by_default" {
      command = plan

      assert {
        condition     = aws_s3_bucket_versioning.this.versioning_configuration[0].status == "Enabled"
        error_message = "Versioning should be enabled by default"
      }
    }

    run "bucket_not_public" {
      command = plan

      assert {
        condition     = aws_s3_bucket_public_access_block.this.block_public_acls == true
        error_message = "Public ACLs should be blocked"
      }

      assert {
        condition     = aws_s3_bucket_public_access_block.this.restrict_public_buckets == true
        error_message = "Public bucket restrictions should be enabled"
      }
    }

Notice command = plan. These assertions run against the plan output — no resources are created. Run them with:

    terraform test

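That’s it, provided terraform init has already run in the module directory; terraform test does not initialize for you. Terraform creates a plan and evaluates every assertion. No AWS resources. No cost. Runs in a few seconds. With the real AWS provider the plan still needs credentials, which is the gap mock providers close.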

Mock Providers

Where native terraform test gets genuinely powerful is mock providers, available since Terraform 1.7. You can test modules that call AWS without hitting the real AWS API at all. The mock provider intercepts every provider call and returns synthetic data you define.

    # tests/rds_module.tftest.hcl

    mock_provider "aws" {
      mock_resource "aws_db_instance" {
        defaults = {
          id       = "mock-db-instance-id"
          endpoint = "mock-db.cluster.us-east-1.rds.amazonaws.com"
          port     = 5432
          status   = "available"
        }
      }

      mock_resource "aws_db_subnet_group" {
        defaults = {
          id   = "mock-subnet-group"
          name = "mock-subnet-group"
        }
      }
    }

    variables {
      db_name        = "appdb"
      instance_class = "db.t3.micro"
      engine_version = "15.4"
      environment    = "test"
      subnet_ids     = ["subnet-mock1", "subnet-mock2"]
      vpc_id         = "vpc-mock"
    }

    run "rds_multi_az_disabled_for_test" {
      command = plan

      assert {
        condition     = aws_db_instance.this.multi_az == false
        error_message = "Multi-AZ should be disabled in test environment"
      }
    }

    run "rds_deletion_protection_enabled_in_prod" {
      variables {
        environment = "prod"
      }

      command = plan

      assert {
        condition     = aws_db_instance.this.deletion_protection == true
        error_message = "Deletion protection must be enabled in production"
      }
    }

Mock providers mean your unit tests don’t need AWS credentials. They run in your local environment, in a Docker container, anywhere. This is the class of bug behind the database story at the top of this post: the conditional that changed deletion_protection based on environment had never been tested.

When terraform test Falls Short

The terraform test command with command = plan is fast and safe, but it has limits. It can’t tell you whether your IAM policies are correct — because IAM doesn’t validate permission boundaries until you actually try to assume a role and call an API. It can’t tell you whether your security group rules allow the right traffic — you’d need to run an actual EC2 instance to test that. It can’t validate that your ECS task can pull from ECR with the execution role you configured.

For those cases, you need real infrastructure. That means Terratest.

Terratest: Integration Testing with Real AWS Resources

Terratest is a Go library from Gruntwork that’s been the industry standard for integration testing Terraform since around 2018. You write Go tests that deploy your Terraform code, make real API calls to validate the result, and tear everything down in a deferred call.

If you’ve never written Go before, the learning curve is real but shallow. You mainly need to understand how defer works and how to run assertions. Terratest abstracts the hard parts.

Here’s a test that deploys an S3 bucket module and verifies it:

    // test/s3_bucket_test.go
    package test

    import (
        "fmt"
        "strings"
        "testing"

        "github.com/gruntwork-io/terratest/modules/aws"
        "github.com/gruntwork-io/terratest/modules/random"
        "github.com/gruntwork-io/terratest/modules/terraform"
        "github.com/stretchr/testify/assert"
        "github.com/stretchr/testify/require"
    )

    func TestS3BucketModule(t *testing.T) {
        t.Parallel()

        awsRegion := "us-east-1"
        // S3 bucket names must be lowercase; random.UniqueId() can contain uppercase letters.
        bucketName := fmt.Sprintf("test-bucket-%s", strings.ToLower(random.UniqueId()))

        terraformOptions := terraform.WithDefaultRetryableErrors(t, &terraform.Options{
            TerraformDir: "../",
            Vars: map[string]interface{}{
                "bucket_name":   bucketName,
                "environment":   "test",
                "force_destroy": true,
            },
            EnvVars: map[string]string{
                "AWS_DEFAULT_REGION": awsRegion,
            },
        })

        // Register cleanup before apply so it also runs when apply or an assertion fails.
        defer terraform.Destroy(t, terraformOptions)
        terraform.InitAndApply(t, terraformOptions)

        // Verify the bucket exists
        aws.AssertS3BucketExists(t, awsRegion, bucketName)

        // Verify versioning is enabled
        actualVersioningStatus := aws.GetS3BucketVersioning(t, awsRegion, bucketName)
        assert.Equal(t, "Enabled", actualVersioningStatus)

        // Verify public access block.
        // Assumption: GetS3BucketPublicAccessBlock may not exist in your Terratest release.
        // If it does not, call GetPublicAccessBlock on the client from aws.NewS3Client instead.
        publicAccessBlock := aws.GetS3BucketPublicAccessBlock(t, awsRegion, bucketName)
        assert.True(t, publicAccessBlock.BlockPublicAcls)
        assert.True(t, publicAccessBlock.RestrictPublicBuckets)

        // Verify tags
        tags := aws.GetS3BucketTags(t, awsRegion, bucketName)
        require.Equal(t, "test", tags["Environment"])
        require.Equal(t, "terraform", tags["ManagedBy"])
    }

Run it with:

    cd test
    go test -v -timeout 30m -run TestS3BucketModule

The defer terraform.Destroy is non-negotiable. If you forget it, every test run leaves orphaned AWS resources. The test runs terraform init and terraform apply, waits for the resources to exist, runs your assertions, then destroys. If the assertions fail, defer still fires. Resources still get cleaned up.
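The cleanup guarantee comes from how Go runs deferred calls, which the standard-library sketch below illustrates. It is not Terratest code: runTest is a hypothetical stand-in, and the panic only simulates a failed check. In a real test, testify's assert marks the failure and keeps going, while require stops the test through t.FailNow, and deferred calls still run in both cases.

```go
package main

import "fmt"

// runTest mimics the shape of a Terratest function: cleanup is registered
// first, then work that may fail partway through. It returns the order in
// which the steps actually ran.
func runTest(failAssertion bool) (steps []string) {
	// Deferred calls run last-in, first-out, so this one runs after "destroy".
	// It recovers only so the demo can return normally.
	defer func() {
		if r := recover(); r != nil {
			steps = append(steps, "recovered")
		}
	}()
	// Stand-in for `defer terraform.Destroy(t, terraformOptions)`.
	defer func() { steps = append(steps, "destroy") }()

	steps = append(steps, "apply")
	if failAssertion {
		panic("assertion failed") // simulated failure
	}
	steps = append(steps, "assertions passed")
	return steps
}

func main() {
	fmt.Println(runTest(false)) // [apply assertions passed destroy]
	fmt.Println(runTest(true))  // [apply destroy recovered]
}
```

One caveat worth keeping in mind: deferred calls do not run when the process itself is killed, for example by a CI job timeout or by go test's own -timeout. That is the case a scheduled cleanup job exists for.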

Testing a VPC Module End-to-End

For something more complex — like a VPC module — you want to verify the actual networking configuration, not just that resources exist:

    // Imports omitted: this needs fmt, testing, the Terratest aws, random, and
    // terraform modules, and testify's assert and require.
    func TestVPCModule(t *testing.T) {
        t.Parallel()

        awsRegion := "us-east-1"
        vpcName := fmt.Sprintf("test-vpc-%s", random.UniqueId())

        terraformOptions := terraform.WithDefaultRetryableErrors(t, &terraform.Options{
            TerraformDir: "../modules/vpc",
            Vars: map[string]interface{}{
                "vpc_name":             vpcName,
                "vpc_cidr":             "10.100.0.0/16",
                "private_subnet_cidrs": []string{"10.100.1.0/24", "10.100.2.0/24"},
                "public_subnet_cidrs":  []string{"10.100.101.0/24", "10.100.102.0/24"},
                "availability_zones":   []string{"us-east-1a", "us-east-1b"},
                "enable_nat_gateway":   true,
            },
        })

        defer terraform.Destroy(t, terraformOptions)
        terraform.InitAndApply(t, terraformOptions)

        vpcID := terraform.Output(t, terraformOptions, "vpc_id")
        privateSubnetIDs := terraform.OutputList(t, terraformOptions, "private_subnet_ids")
        publicSubnetIDs := terraform.OutputList(t, terraformOptions, "public_subnet_ids")

        // Verify VPC exists with correct CIDR.
        // Assumption: the Vpc struct exposes CidrBlock, as recent Terratest releases do.
        vpc := aws.GetVpcById(t, vpcID, awsRegion)
        require.NotNil(t, vpc.CidrBlock)
        assert.Equal(t, "10.100.0.0/16", *vpc.CidrBlock)

        // Verify correct number of subnets
        assert.Equal(t, 2, len(privateSubnetIDs))
        assert.Equal(t, 2, len(publicSubnetIDs))

        // Verify private subnets have no route to an internet gateway.
        // Checking MapPublicIpOnLaunch as well would need a DescribeSubnets call
        // through the EC2 client.
        for _, subnetID := range privateSubnetIDs {
            assert.False(t, aws.IsPublicSubnet(t, subnetID, awsRegion),
                "Private subnet %s should not route to the internet gateway", subnetID)
        }

        // Verify public subnets have internet gateway routing
        for _, subnetID := range publicSubnetIDs {
            assert.True(t, aws.IsPublicSubnet(t, subnetID, awsRegion),
                "Public subnet %s should have a route to the internet gateway", subnetID)
        }
    }

This catches the bugs that only appear when you hit the real API. A wrong CIDR block fails the CIDR assertion. A missing route table association fails the subnet route check. An IAM permission gap on your CI runner fails at terraform apply with a clear error, and you fix it before it bites you in production.

The Cost Problem

Terratest runs real infrastructure. That costs real money. For an S3 module test, the cost is negligible. For an RDS test with a db.t3.medium instance, you’re paying by the minute, and the test might run for fifteen minutes.

Strategies that actually work: use the smallest viable instance sizes in test configurations (db.t3.micro, t3.nano). Never run integration tests on every commit — they belong in a nightly build or on merge to main. Use a dedicated test AWS account with budget alerts. Tag every resource created by Terratest with CreatedBy=terratest and run a scheduled Lambda to nuke anything older than two hours. Check the for_each pattern when building test helpers that iterate over multiple configurations — it’ll save you from copy-pasting resource blocks in your test fixtures.
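The selection logic behind the scheduled cleanup can be sketched in a few lines of Go. This is an illustration only: the resource struct, staleTestResources, and demoInventory are hypothetical names, and a real sweeper would fill the inventory from AWS API calls and then delete what the filter returns.

```go
package main

import (
	"fmt"
	"time"
)

// maxAge is the two-hour cutoff mentioned above.
const maxAge = 2 * time.Hour

// resource is a hypothetical, simplified view of one cloud resource.
type resource struct {
	ID        string
	Tags      map[string]string
	CreatedAt time.Time
}

// staleTestResources returns the IDs of resources tagged CreatedBy=terratest
// that are older than maxAge. Anything without the tag is never selected.
func staleTestResources(all []resource, now time.Time) []string {
	var ids []string
	for _, r := range all {
		if r.Tags["CreatedBy"] != "terratest" {
			continue
		}
		if now.Sub(r.CreatedAt) > maxAge {
			ids = append(ids, r.ID)
		}
	}
	return ids
}

// demoInventory builds a fixed inventory so the example is deterministic.
func demoInventory() ([]resource, time.Time) {
	now := time.Date(2026, 1, 10, 12, 0, 0, 0, time.UTC)
	return []resource{
		{ID: "i-old-test", Tags: map[string]string{"CreatedBy": "terratest"}, CreatedAt: now.Add(-3 * time.Hour)},
		{ID: "i-fresh-test", Tags: map[string]string{"CreatedBy": "terratest"}, CreatedAt: now.Add(-30 * time.Minute)},
		{ID: "i-old-prod", Tags: map[string]string{"CreatedBy": "terraform"}, CreatedAt: now.Add(-72 * time.Hour)},
	}, now
}

func main() {
	all, now := demoInventory()
	fmt.Println(staleTestResources(all, now)) // [i-old-test]
}
```

Matching on the tag first is the safety property that matters: an old resource without CreatedBy=terratest is left alone no matter how long it has existed.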

OPA and Conftest: Policy-as-Code on Plan Output

Open Policy Agent (OPA) with Conftest gives you something neither of the above tools provides: policy enforcement against the Terraform plan before anything gets created or modified.

The workflow: generate a plan JSON, pass it through Conftest, fail the pipeline if any policy violation is detected. No AWS resources. No Go tests. Just rules evaluated against a JSON document.

First, generate the plan JSON in your pipeline:

    terraform init
    terraform plan -out=tfplan.binary
    terraform show -json tfplan.binary > tfplan.json
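To make the input to a policy engine concrete, here is a rough Go sketch that reads the resource_changes array from plan JSON and flags anything destructive. A replacement shows up as an actions list containing both "delete" and "create", which is exactly the shape of the incident at the top of this post. The sample plan, the struct names, and the helper functions are all illustrative, and only the few fields used here are modelled.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// resourceChange mirrors only the plan JSON fields this sketch needs.
type resourceChange struct {
	Address string `json:"address"`
	Type    string `json:"type"`
	Change  struct {
		Actions []string `json:"actions"`
	} `json:"change"`
}

type plan struct {
	ResourceChanges []resourceChange `json:"resource_changes"`
}

// classify collapses an actions list into one label. A replacement is
// reported by Terraform as delete plus create, in either order.
func classify(actions []string) string {
	hasCreate, hasDelete := false, false
	for _, a := range actions {
		switch a {
		case "create":
			hasCreate = true
		case "delete":
			hasDelete = true
		}
	}
	switch {
	case hasCreate && hasDelete:
		return "replace"
	case hasDelete:
		return "delete"
	case hasCreate:
		return "create"
	case len(actions) == 1:
		return actions[0] // for example "update" or "no-op"
	default:
		return "unknown"
	}
}

// destructiveChanges lists resources the plan would delete or replace.
func destructiveChanges(planJSON []byte) ([]string, error) {
	var p plan
	if err := json.Unmarshal(planJSON, &p); err != nil {
		return nil, err
	}
	var out []string
	for _, rc := range p.ResourceChanges {
		if c := classify(rc.Change.Actions); c == "delete" || c == "replace" {
			out = append(out, rc.Address+" ("+c+")")
		}
	}
	return out, nil
}

// samplePlan is a hand-written, heavily trimmed stand-in for tfplan.json.
const samplePlan = `{
  "resource_changes": [
    {"address": "aws_s3_bucket.logs", "type": "aws_s3_bucket",
     "change": {"actions": ["update"]}},
    {"address": "aws_db_instance.primary", "type": "aws_db_instance",
     "change": {"actions": ["delete", "create"]}}
  ]
}`

func main() {
	hits, err := destructiveChanges([]byte(samplePlan))
	if err != nil {
		fmt.Println("could not parse plan:", err)
		return
	}
	for _, h := range hits {
		fmt.Println("destructive:", h)
	}
}
```

This is not a replacement for a policy engine; it only shows what the plan document looks like from the reader's side.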

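Now write a Rego policy. This one enforces S3 encryption, blocks production RDS deletions, warns on missing tags, and rejects wide-open security group rules. The encryption rule checks the inline server_side_encryption_configuration block on aws_s3_bucket; if your modules use the separate aws_s3_bucket_server_side_encryption_configuration resource, as AWS provider v4 and later encourage, adapt the rule to look for that resource instead: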

    # policy/terraform.rego
    package main

    import future.keywords.in

    # Deny unencrypted S3 buckets
    deny[msg] {
        resource := input.resource_changes[_]
        resource.type == "aws_s3_bucket"
        resource.change.actions[_] == "create"
        not resource.change.after.server_side_encryption_configuration

        msg := sprintf(
            "S3 bucket '%s' must have server-side encryption configured",
            [resource.address]
        )
    }

    # Deny RDS deletion in production
    deny[msg] {
        resource := input.resource_changes[_]
        resource.type == "aws_db_instance"
        resource.change.actions[_] == "delete"
        resource.change.before.tags.Environment == "production"

        msg := sprintf(
            "Refusing to delete production RDS instance '%s'. Manual override required.",
            [resource.address]
        )
    }

    # Warn about resources without required tags
    warn[msg] {
        resource := input.resource_changes[_]
        resource.change.actions[_] in ["create", "update"]

        required_tags := {"Environment", "Owner", "ManagedBy"}
        actual_tags := {k | resource.change.after.tags[k]}
        missing := required_tags - actual_tags
        count(missing) > 0

        msg := sprintf(
            "Resource '%s' is missing required tags: %v",
            [resource.address, missing]
        )
    }

    # Deny security groups with wide-open ingress
    deny[msg] {
        resource := input.resource_changes[_]
        resource.type == "aws_security_group_rule"
        resource.change.actions[_] == "create"
        resource.change.after.type == "ingress"
        resource.change.after.cidr_blocks[_] == "0.0.0.0/0"
        resource.change.after.from_port == 0
        resource.change.after.to_port == 65535

        msg := sprintf(
            "Security group rule '%s' allows unrestricted ingress on all ports",
            [resource.address]
        )
    }

Run it with Conftest:

    conftest test tfplan.json --policy policy/

Output on a violation:

    FAIL - tfplan.json - main - S3 bucket 'module.logs.aws_s3_bucket.this' must have server-side encryption configured
    FAIL - tfplan.json - main - Refusing to delete production RDS instance 'aws_db_instance.primary'. Manual override required.

    2 tests, 0 passed, 0 warnings, 2 failures, 0 exceptions

Exit code is non-zero. The pipeline fails. Nobody applies a plan with those violations until the code is fixed.

OPA policies are the thing I wish I’d had before the Saturday incident. The policy above would have caught the RDS deletion before the plan ever got near terraform apply.
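The tag rule in that policy is the least obvious of the four, because it leans on a Rego set comprehension and set subtraction. The same arithmetic, written out in Go as a rough equivalent, looks like the sketch below. The function and variable names are made up for illustration, and the Go version returns a sorted slice where Rego produces a set.

```go
package main

import (
	"fmt"
	"sort"
)

// requiredTags matches the required_tags set in the Rego warn rule.
var requiredTags = []string{"Environment", "Owner", "ManagedBy"}

// missingTags returns the required tag keys that are absent from actual,
// sorted for stable output. A nil map is treated as "no tags at all".
func missingTags(actual map[string]string) []string {
	missing := []string{}
	for _, key := range requiredTags {
		if _, ok := actual[key]; !ok {
			missing = append(missing, key)
		}
	}
	sort.Strings(missing)
	return missing
}

func main() {
	tags := map[string]string{"Environment": "test", "ManagedBy": "terraform"}
	fmt.Println(missingTags(tags)) // [Owner]
	fmt.Println(missingTags(nil))  // [Environment ManagedBy Owner]
}
```

The nil case matters in practice: a resource planned without a tags attribute should report every required tag as missing, not slip through.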

Putting the Pyramid in a GitLab CI Pipeline

Here’s a complete .gitlab-ci.yml that wires all three layers together. It connects to the GitLab CI Terraform pipeline pattern with the testing layers added on top:

    # .gitlab-ci.yml

    stages:
      - validate
      - unit-test
      - policy-check
      - plan
      - integration-test
      - apply

    variables:
      TF_VERSION: "1.9.0"
      TF_WORKING_DIR: "."
      AWS_DEFAULT_REGION: "us-east-1"

    image:
      name: hashicorp/terraform:${TF_VERSION}
      entrypoint: [""]

    .terraform_base:
      before_script:
        - terraform --version
        # CI_ENVIRONMENT_NAME is only set in jobs that declare an environment,
        # so confirm every job resolves the same state key.
        - terraform init -backend-config="key=${CI_PROJECT_NAME}/${CI_ENVIRONMENT_NAME}/terraform.tfstate"

    # Layer 0: Syntax and format validation
    validate:
      extends: .terraform_base
      stage: validate
      script:
        - terraform validate
        - terraform fmt -check -recursive
      rules:
        - if: $CI_PIPELINE_SOURCE == "merge_request_event"
        - if: $CI_COMMIT_BRANCH == $CI_DEFAULT_BRANCH

    # Layer 1: Unit tests with terraform test
    unit_test:
      extends: .terraform_base
      stage: unit-test
      script:
        # -filter selects individual test files; -test-directory selects a directory.
        - terraform test -test-directory=tests/unit
      rules:
        - if: $CI_PIPELINE_SOURCE == "merge_request_event"
        - if: $CI_COMMIT_BRANCH == $CI_DEFAULT_BRANCH
      artifacts:
        when: always
        reports:
          # Nothing above writes this file. Newer Terraform releases can produce it
          # with -junit-xml; check that your version supports the flag.
          junit: test-results.xml

    # Layer 2: Policy validation with OPA/Conftest
    policy_check:
      extends: .terraform_base
      stage: policy-check
      image: alpine:3.19
      before_script:
        # Confirm your Alpine release still packages terraform; otherwise install a pinned binary.
        - apk add --no-cache curl terraform
        - curl -L https://github.com/open-policy-agent/conftest/releases/download/v0.50.0/conftest_0.50.0_Linux_x86_64.tar.gz | tar xz
        - mv conftest /usr/local/bin/
        - terraform init
      script:
        - terraform plan -out=tfplan.binary
        - terraform show -json tfplan.binary > tfplan.json
        - conftest test tfplan.json --policy policy/ --output github
      artifacts:
        paths:
          - tfplan.json
        expire_in: 1 day
      rules:
        - if: $CI_PIPELINE_SOURCE == "merge_request_event"
        - if: $CI_COMMIT_BRANCH == $CI_DEFAULT_BRANCH

    # Standard plan for review
    plan:
      extends: .terraform_base
      stage: plan
      script:
        - terraform plan -out=tfplan.binary
        - terraform show -no-color tfplan.binary > tfplan.txt
      artifacts:
        paths:
          - tfplan.binary
          - tfplan.txt
        expire_in: 1 day
      rules:
        - if: $CI_COMMIT_BRANCH == $CI_DEFAULT_BRANCH

    # Layer 3: Integration tests (nightly only)
    integration_test:
      stage: integration-test
      image: golang:1.22-alpine
      before_script:
        - apk add --no-cache terraform git
        - cd test && go mod download
      script:
        # before_script and script share one shell, so we are already inside test/.
        - go test -v -timeout 60m -run TestIntegration ./...
      rules:
        - if: $CI_PIPELINE_SOURCE == "schedule"
      after_script:
        # after_script runs in a fresh shell, so it needs its own cd.
        - cd test && go test -v -timeout 10m -run TestCleanup ./... || true

    # Apply: manual gate for production
    apply:
      extends: .terraform_base
      stage: apply
      script:
        - terraform apply -auto-approve tfplan.binary
      dependencies:
        - plan
      rules:
        - if: $CI_COMMIT_BRANCH == $CI_DEFAULT_BRANCH
          when: manual
      environment:
        name: production

The when: manual gate on apply matters. Automated plans and tests are fine to run on every commit. Actually applying to production requires a human to click a button. See the full GitLab CI IaC pipeline post for how to wire this to remote state and workspace management.

Testing Modules vs Root Configs

There’s a meaningful difference between testing a reusable Terraform module and testing a root configuration that stitches modules together.

For modules, you want comprehensive unit tests and integration tests. The module is a contract — it accepts inputs and produces resources. You test that contract thoroughly because other teams depend on it. Every variable combination that produces different behavior should have a test. Every edge case in your conditionals should have a test.
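One way to keep "every variable combination" honest is to generate the combinations and not rely on memory to list them. The Go sketch below builds the full cross product of a few variable values; each resulting case could become a run block or a Terratest subtest. The variable names and values are examples, and combinations is a hypothetical helper, not part of any library.

```go
package main

import (
	"fmt"
	"sort"
)

// combinations returns every assignment that picks one value per variable.
// Keys are processed in sorted order so the output is deterministic.
func combinations(values map[string][]string) []map[string]string {
	keys := make([]string, 0, len(values))
	for k := range values {
		keys = append(keys, k)
	}
	sort.Strings(keys)

	result := []map[string]string{{}} // start with one empty assignment
	for _, k := range keys {
		var next []map[string]string
		for _, partial := range result {
			for _, v := range values[k] {
				// Copy the partial assignment, then extend it with this key.
				c := make(map[string]string, len(partial)+1)
				for pk, pv := range partial {
					c[pk] = pv
				}
				c[k] = v
				next = append(next, c)
			}
		}
		result = next
	}
	return result
}

func main() {
	cases := combinations(map[string][]string{
		"environment":    {"test", "prod"},
		"instance_class": {"db.t3.micro", "db.t3.medium"},
	})
	for i, c := range cases {
		fmt.Printf("case %d: environment=%s instance_class=%s\n",
			i, c["environment"], c["instance_class"])
	}
}
```

The cross product grows quickly, so in practice you would restrict it to the variables that feed conditionals and leave pass-through values at one representative setting.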

For root configs, the story changes. Your root config mostly calls modules with specific variable values. Most of the interesting logic lives in the modules, not the root. Your root config testing is about the integration — does this combination of modules produce a working system? That’s an integration test, not a unit test. And it’s expensive.

My approach: modules get full unit test coverage with terraform test and mock providers, plus integration tests on their own. Root configs get policy validation with OPA on every plan, plus an integration test once a quarter and whenever something significant changes. Don’t over-invest in root config unit tests; there’s usually not much logic to test.

terraform validate vs plan vs test: Know the Difference

terraform validate checks syntax and basic configuration correctness. It verifies that your HCL parses, that references to resources exist, and that required attributes are present. It’s fast (under a second) and catches typos. It doesn’t need AWS credentials. Run it on every commit as your first gate.

terraform plan goes further. It resolves data sources, calls provider APIs to check existing state, and computes the full diff. It tells you what will change. It needs credentials. It catches things like “this AMI doesn’t exist” or “this VPC was already deleted.” Use it for your policy validation layer — you need a real plan to run OPA against.

terraform test with command = plan sits between the two. It runs a plan scoped to your test variables and evaluates assertions against the result. It’s more targeted than a full plan because you control the inputs exactly. Use it for module logic testing.

terraform test with command = apply (the default when you omit the command) actually creates resources. It’s slower and costs money. Use it sparingly — for tests that need to verify resource behavior that only appears after creation, like checking that an RDS instance’s actual endpoint format matches your DNS automation expectations.

The hierarchy: validate → unit test (plan mode) → policy check → integration test (apply mode). Each layer catches different things and costs different amounts to run.

The Honest Part

Running all three layers consistently takes discipline. The unit tests are easy — they’re fast and free, and there’s no excuse not to run them. The policy tests are also easy once you’ve written the Rego policies, though learning Rego has a curve.

Integration tests are where teams fall off. They’re slow. They cost money. They occasionally fail because of AWS service hiccups unrelated to your code. They need a dedicated test account with the right IAM permissions. It’s easy to skip them in a crunch and tell yourself the unit tests are good enough.
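For the AWS hiccups specifically, the usual answer is to retry only the errors you recognize as transient and fail fast on everything else. Terratest's WithDefaultRetryableErrors, used in the tests above, applies that idea to Terraform commands. The standard-library sketch below shows the pattern on its own; the marker strings are examples I picked, and retry and flakyCall are hypothetical helpers.

```go
package main

import (
	"errors"
	"fmt"
	"strings"
	"time"
)

// transientMarkers are example substrings treated as retryable.
var transientMarkers = []string{"RequestLimitExceeded", "Throttling", "connection reset by peer"}

func isTransient(err error) bool {
	for _, m := range transientMarkers {
		if strings.Contains(err.Error(), m) {
			return true
		}
	}
	return false
}

// retry calls fn up to attempts times, doubling the delay after each
// transient failure. Non-transient errors are returned immediately.
func retry(attempts int, baseDelay time.Duration, fn func() error) error {
	if attempts < 1 {
		attempts = 1
	}
	var err error
	for i := 0; i < attempts; i++ {
		if err = fn(); err == nil {
			return nil
		}
		if !isTransient(err) {
			return err
		}
		if i < attempts-1 {
			time.Sleep(baseDelay << i)
		}
	}
	return fmt.Errorf("gave up after %d attempts: %w", attempts, err)
}

// flakyCall returns a function that fails with msg the first `failures`
// times and then succeeds, plus a pointer to its call counter.
func flakyCall(failures int, msg string) (func() error, *int) {
	calls := 0
	return func() error {
		calls++
		if calls <= failures {
			return errors.New(msg)
		}
		return nil
	}, &calls
}

func main() {
	fn, calls := flakyCall(2, "Throttling: rate exceeded")
	err := retry(5, 10*time.Millisecond, fn)
	fmt.Println("error:", err, "calls:", *calls)
}
```

Retrying everything would hide real failures such as a missing permission, so the allow-list of transient errors is the part to be deliberate about.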

They’re not. The bugs that integration tests catch (IAM permission gaps, resource limit errors, networking misconfigurations, provider version incompatibilities) are the bugs that cause Saturday incidents. The S3 encryption rule is a policy test problem. The missing iam:PassRole permission that breaks your ECS service is an integration test problem.

Start with terraform validate in every pipeline. Add terraform test unit tests when you write your first reusable module. Add OPA policies when you have more than one team touching the same Terraform code and you need guardrails. Add Terratest integration tests when your infrastructure is complex enough that the unit tests no longer give you confidence.

You don’t need all three on day one. You need all three before you’re running critical workloads. There’s a window between those two states — use it to build the testing infrastructure before it’s urgent.

The database incident would have cost me nothing with a policy test that said “no deletes on production RDS.” It cost me a Saturday night without one.

For the module patterns that make these tests easier to write, see Terraform Modules Best Practices — well-structured modules with clear inputs and outputs are significantly easier to test than monolithic configurations. If you’re managing multiple environments with for_each, the module testing pattern scales the same way. And if you’re evaluating Terraform vs OpenTofu for 2026, both support the .tftest.hcl format — the Conftest and Terratest layers are identical regardless of which tool you run.
