Kubernetes v1.36 User Namespaces GA: Rootless Isolation That Actually Changes Risk

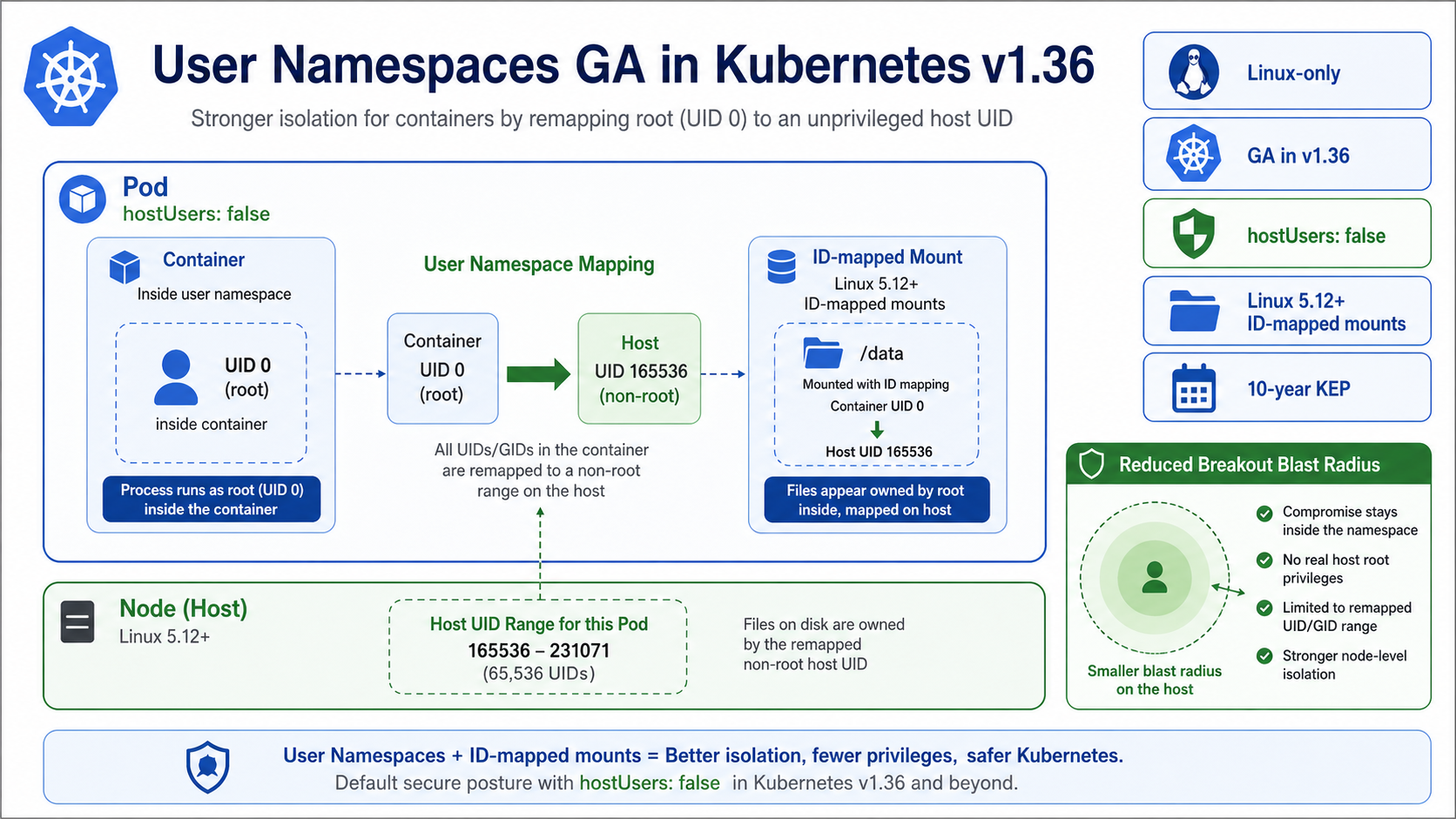

Kubernetes v1.36 promotes User Namespaces to GA, and the important field is only two words: hostUsers: false. That setting lets a pod run with user namespace isolation so UID 0 inside the container maps to a non-root UID on the host.

This is one of those features that sounds academic until you debug a container breakout path. Root inside a container has always been a loaded phrase. User namespaces make that root less useful against the host, which is exactly the kind of boring security improvement platform teams should like.

What Changed

The Kubernetes blog calls out a long road: a 10-year KEP and 6 years of active work. The GA result is Linux-only. It depends on kernel and runtime behavior, and ID-mapped mounts need Linux 5.12 or newer. That is not a checkbox you blindly enable across every cluster in one afternoon.

The win is blast-radius reduction. A process can still believe it is UID 0 inside the container. On the host, that identity is remapped. If the workload escapes a namespace or touches a mounted path in a dangerous way, the host sees a less privileged user instead of true root.
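
To make the remapping concrete, here is a minimal sketch of how a Linux UID map translates a container UID into a host UID. It assumes the three-column format the kernel exposes in /proc/<pid>/uid_map (start inside the namespace, start outside, length). The range starting at host ID 1000000 is a hypothetical value for illustration, not something Kubernetes guarantees.

```python
def parse_uid_map(text):
    """Parse lines of the form '<inside_start> <outside_start> <length>'."""
    ranges = []
    for line in text.strip().splitlines():
        inside, outside, length = (int(field) for field in line.split())
        ranges.append((inside, outside, length))
    return ranges


def host_uid(container_uid, ranges):
    """Translate a container UID to the UID the host sees, or None if unmapped."""
    for inside, outside, length in ranges:
        if inside <= container_uid < inside + length:
            return outside + (container_uid - inside)
    return None


# Hypothetical mapping: 65536 container IDs starting at host ID 1000000.
example_map = "0 1000000 65536"
ranges = parse_uid_map(example_map)

print(host_uid(0, ranges))      # container root -> 1000000
print(host_uid(1000, ranges))   # ordinary container user -> 1001000
print(host_uid(70000, ranges))  # outside the mapped range -> None
```

The point of the sketch is the first result: UID 0 in the container lands on an unprivileged host ID, so a process that escapes still does not hold host root.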

Pair this with the controls in container runtime security on EKS. User namespaces do not replace seccomp, AppArmor, SELinux, runtime scanning, admission policy, or RBAC. They make all of those controls less fragile.

The Numbers That Matter

| Fact | Number or date | Source |
|---|---|---|
| Status | General Availability in Kubernetes v1.36 | Kubernetes Blog |
| Scope | Linux-only | Kubernetes Blog |
| Pod setting | hostUsers: false | Kubernetes Blog |
| Kernel requirement for ID-mapped mounts | Linux 5.12+ | Kubernetes Blog |
| Project history | 10-year KEP, 6 years of active work | Kubernetes Blog |

Those facts are the reason to look at this now, not next quarter. The dates are fresh, the limits are concrete, and the operational impact is clear enough for an engineer to act on today.

How It Works in Practice

Start with a test namespace and a workload that does not use privileged mode, host networking, host PID, or unusual volume ownership tricks. Set hostUsers: false, deploy it, and inspect file ownership from inside and outside the container. That is the fastest way to teach the platform team what changed.

Storage deserves special attention. ID-mapped mounts avoid the old recursive chown tax, which matters for big volumes. But older kernels, older runtimes, or CSI drivers with assumptions about UID/GID ownership can still surprise you. Test stateful workloads separately from stateless services.
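
A quick way to screen nodes against the 5.12 floor for ID-mapped mounts is to compare the kernel release string. The sketch below is an assumption about how you might script that check. The release strings in the loop are hypothetical examples, and passing the floor says nothing about whether your runtime or CSI driver is ready.

```python
import re


def kernel_at_least(release, minimum=(5, 12)):
    """Return True if a release string such as '6.1.0-18-amd64' meets the minimum."""
    match = re.match(r"(\d+)\.(\d+)", release)
    if match is None:
        raise ValueError(f"unrecognized kernel release: {release!r}")
    return (int(match.group(1)), int(match.group(2))) >= minimum


# Hypothetical release strings, as `uname -r` might report them.
for release in ("5.10.0-28-amd64", "5.12.0", "6.1.0-18-amd64"):
    print(release, kernel_at_least(release))
```

On a Linux node, passing platform.release() from the standard library to kernel_at_least checks the running kernel.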

For EKS, wait for the exact Kubernetes, AMI, runtime, and managed add-on combination you run to support the behavior you need. Upstream GA is the starting line for adoption, not proof that every managed cluster permutation is ready.

    apiVersion: v1
    kind: Pod
    metadata:
      name: userns-check
    spec:
      hostUsers: false
      containers:
        - name: app
          image: public.ecr.aws/docker/library/busybox:latest
          command: ["sh", "-c", "id && sleep 3600"]
          securityContext:
            allowPrivilegeEscalation: false

After deployment, compare id inside the pod with ownership behavior on mounted paths. Do not call the rollout done until logs, metrics, exec, and volume operations all behave normally.
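
One check worth adding to that comparison is whether the pod really got its own mapping. A minimal sketch, assuming you capture the contents of /proc/self/uid_map from inside the pod (for example with cat through kubectl exec, since the busybox image ships no Python) and pass the text to the function. It treats the single full-range identity row as the initial host namespace. The sample strings are illustrative, not output from a real cluster.

```python
# The initial (host) user namespace maps every ID to itself in one row.
IDENTITY_MAP = [(0, 0, 4294967295)]


def in_remapped_userns(uid_map_text):
    """True if the map is anything other than the initial-namespace identity map."""
    rows = [
        tuple(int(field) for field in line.split())
        for line in uid_map_text.strip().splitlines()
    ]
    return rows != IDENTITY_MAP


# Illustrative samples: a host-style identity map and a hypothetical pod map.
host_style = "         0          0 4294967295\n"
pod_style = "0 1000000 65536\n"

print(in_remapped_userns(host_style))  # False
print(in_remapped_userns(pod_style))   # True
```

If the pod reports the identity map despite hostUsers: false, the setting did not take effect on that node, and that is a finding to chase before going further.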

Gotchas I Would Check First

- This is Linux-only. Windows nodes are a separate security model.

- Privileged pods and host namespace usage can erase much of the benefit.

- Old kernels and storage drivers can turn a clean security setting into a rollout problem.
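
The second gotcha can be screened mechanically before any rollout. The sketch below is a hypothetical triage helper, not an admission controller: it takes a pod spec already loaded as a dictionary and lists the settings that undercut user namespace isolation. The field names (hostNetwork, hostPID, hostIPC, securityContext.privileged) are standard Pod API fields. The function name and the example spec are made up for illustration.

```python
def userns_blockers(pod_spec):
    """List reasons a pod spec is a poor early candidate for hostUsers: false."""
    reasons = []

    # Host namespace sharing works against user namespace isolation.
    for field in ("hostNetwork", "hostPID", "hostIPC"):
        if pod_spec.get(field):
            reasons.append(f"{field} is enabled")

    # Check both regular and init containers for privileged mode.
    containers = (pod_spec.get("containers") or []) + (
        pod_spec.get("initContainers") or []
    )
    for container in containers:
        security = container.get("securityContext") or {}
        if security.get("privileged"):
            reasons.append(f"container {container.get('name')} is privileged")

    return reasons


# Hypothetical spec combining hostUsers: false with conflicting settings.
example_spec = {
    "hostUsers": False,
    "hostNetwork": True,
    "containers": [{"name": "app", "securityContext": {"privileged": True}}],
}
print(userns_blockers(example_spec))
```

An empty list only means none of these flags were found. Kernel and storage driver compatibility still need their own tests.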

Decision Guide

| Workload type | First move |
|---|---|
| Stateless web service | Good early candidate |
| Build job running untrusted code | High-value candidate, but test runtime policy carefully |
| Privileged DaemonSet | Usually not a fit |
| Stateful workload with CSI volume | Test after stateless services |

For related background, keep these existing BitsLovers posts close: Kubernetes v1.36 release highlights, EKS RBAC security patterns, container runtime security on EKS, Cilium eBPF networking on EKS.


Use hostUsers: false where it fits, and measure compatibility before forcing it everywhere. The risk reduction is real, but the rollout still deserves engineering discipline.
