Amazon Keyspaces for Cassandra in 2026: Migration Guide and Real Use Cases

Amazon Keyspaces is a serverless, fully managed database service that speaks Apache Cassandra’s query language. That description sounds cleaner than the reality: Keyspaces is not a drop-in Cassandra replacement. It’s compatible with CQL at a level that covers most production workloads but misses enough Cassandra features that a migration without auditing your schema and query patterns will produce surprises.

This guide covers what Keyspaces actually supports, where the pricing math works, how to migrate from self-hosted Cassandra without downtime, and a concrete table design for an IoT telemetry use case.

What Keyspaces Is and What It Is Not

Keyspaces runs on AWS infrastructure, replicates data across three Availability Zones automatically, and handles patching, scaling, and backups without any cluster management on your part. You connect with any Cassandra-compatible driver using the cassandra-sigv4 authentication plugin for IAM-based access — no username/password management.

The CQL compatibility covers CREATE/ALTER/DROP TABLE, SELECT with partition key and clustering column predicates, INSERT, UPDATE, DELETE, TTL, batch operations, and most data types including collections (list, set, map), user-defined types, and blobs.

What it does not support:

- Materialized views. If your application relies on MVs for denormalized read paths, you need to redesign to explicit duplicate tables or use a Lambda function to maintain them.

- Secondary indexes with low-cardinality columns. Keyspaces supports secondary indexes, but they behave differently under load — a full-partition scan can back a secondary index lookup in ways that are expensive and unpredictable. Treat secondary indexes as a convenience for low-traffic queries only.

- Lightweight transactions (LWT) with full semantics. IF NOT EXISTS and IF conditions are supported for inserts and updates, but LWT performance on Keyspaces is significantly slower than on a self-managed cluster with local Paxos. Do not design hot paths around LWT.

- User-defined functions and aggregates. No CREATE FUNCTION, no CREATE AGGREGATE.

- Custom partitioners. Keyspaces uses Murmur3 partitioner only.

- TRUNCATE. You delete rows explicitly or rely on TTL.

If your workload uses materialized views heavily or depends on LWT for correctness in high-throughput paths, Keyspaces requires redesign work before migration, not just a connection string change.

When Keyspaces Makes Sense

The workload profile that fits Keyspaces well is wide-column, append-heavy data with predictable access patterns by partition key. Three categories show up repeatedly:

Time-series data. Sensor readings, application metrics, clickstream events — anything where writes are continuous and reads are always “give me the last N records for this entity.” Cassandra’s data model was designed for exactly this, and Keyspaces inherits it without the cluster management.

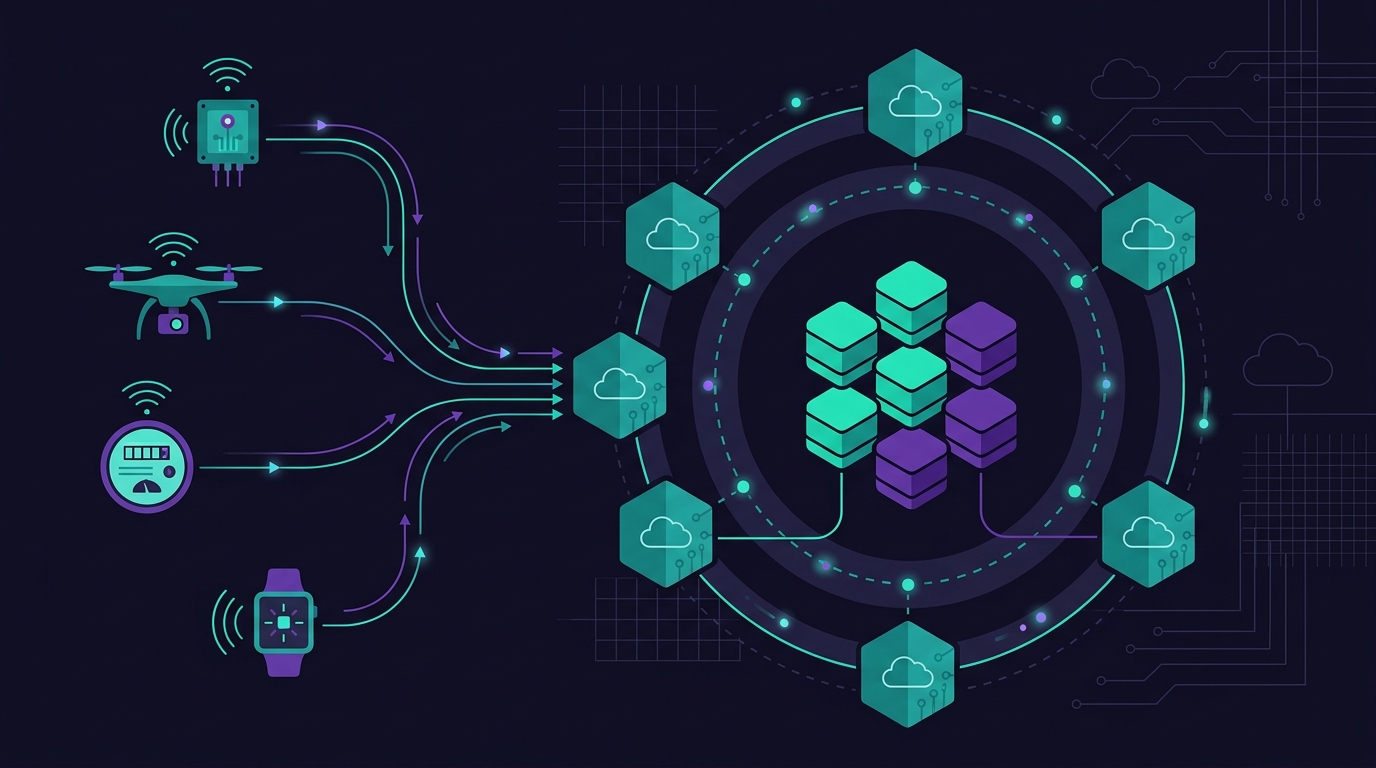

IoT telemetry. High device count, each device writing periodically, reads aggregated per device over a time window. Keyspaces scales write throughput without any manual sharding or capacity planning decisions on your part.

Teams leaving Cassandra for cost or operational reasons. Running a 6-node Cassandra cluster on EC2 r6g.xlarge instances costs roughly $9,500/year in compute alone, plus engineer time for JVM tuning, compaction management, repair jobs, and upgrades. Keyspaces can undercut that at moderate write volumes, and the operational overhead drops to near zero.

Pricing: On-Demand vs. Provisioned

Keyspaces offers two capacity modes, and the choice matters significantly at scale.

On-demand mode charges per request unit. In us-east-1:

- Write request unit (WRU): $1.45 per million

- Read request unit (RRU): $0.29 per million

- Storage: $0.25/GB/month

Provisioned mode with reserved capacity:

- Write capacity unit (WCU): $0.00065/hour per unit

- Read capacity unit (RCU): $0.00013/hour per unit

- Storage: same $0.25/GB/month

For a high-write IoT workload: 50,000 devices each writing a reading every 30 seconds. That’s ~1,667 writes/second, or ~144 million writes/day, ~4.3 billion writes/month.

On-demand cost: 4,300 million WRUs × $1.45 per million = $6,235/month in write costs alone.

Provisioned at 1,700 WCU sustained: 1,700 × $0.00065 × 730 hours = $807/month.

The gap is massive. Any workload with predictable, sustained traffic should be on provisioned capacity with reserved capacity commitments (1-year reserved saves ~30%). On-demand is appropriate during development, for workloads with extreme spikes from a very low baseline, or when you genuinely cannot forecast traffic.
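The arithmetic above is easy to rerun for other fleet sizes. The following is a minimal sketch, assuming the us-east-1 prices quoted in this article and one request unit per write (rows up to 1 KB); the function names are illustrative and not part of any AWS SDK.

```python
# Prices are the us-east-1 figures quoted in this article; check them
# against the current AWS pricing page before relying on the output.
WRU_PRICE_PER_MILLION = 1.45   # on-demand write request units
WCU_PRICE_PER_HOUR = 0.00065   # provisioned write capacity units
HOURS_PER_MONTH = 730
SECONDS_PER_DAY = 86_400


def writes_per_second(devices: int, interval_seconds: float) -> float:
    """Sustained write rate when every device writes once per interval."""
    return devices / interval_seconds


def on_demand_write_cost(rate: float, days: int = 30) -> float:
    """Monthly on-demand write cost, assuming one WRU per write."""
    writes_per_month = rate * SECONDS_PER_DAY * days
    return writes_per_month / 1_000_000 * WRU_PRICE_PER_MILLION


def provisioned_write_cost(wcu: int) -> float:
    """Monthly cost of holding `wcu` write capacity units all month."""
    return wcu * WCU_PRICE_PER_HOUR * HOURS_PER_MONTH


rate = writes_per_second(50_000, 30)
print(f"{rate:,.0f} writes/s")
print(f"on-demand:   ${on_demand_write_cost(rate):,.0f}/month")
print(f"provisioned: ${provisioned_write_cost(1_700):,.0f}/month")
```

Run without rounding, the on-demand figure comes out near $6,264 rather than $6,235, because the text rounds 4.32 billion writes down to 4.3 billion. The conclusion does not change.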

Storage with TTL-based expiration (30-day retention) on the same 50,000-device dataset, assuming 200 bytes per reading and 2 readings/minute per device: 50,000 × 2 × 60 × 24 × 30 × 200 bytes ≈ 864 GB. At $0.25/GB/month, that’s $216/month in storage. The total for writes and storage at provisioned capacity is roughly $1,023/month, which is more than the ~$9,500/year ($792/month) of raw EC2 compute. The comparison favors Keyspaces only once the labor costs excluded from the EC2 figure are counted. See AWS FinOps in 2026 for how to track these costs and right-size as traffic changes.
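The storage estimate can be reproduced the same way. This sketch assumes the $0.25/GB/month price quoted above and counts only the raw payload bytes; it ignores any per-row overhead the service may add when metering storage, so treat the result as a lower bound.

```python
STORAGE_PRICE_PER_GB_MONTH = 0.25  # figure quoted in this article


def retained_bytes(devices: int, readings_per_minute: int,
                   retention_days: int, bytes_per_reading: int) -> int:
    """Payload bytes held at steady state under a fixed retention window."""
    readings = devices * readings_per_minute * 60 * 24 * retention_days
    return readings * bytes_per_reading


def storage_cost(total_bytes: int) -> float:
    """Monthly storage cost, using decimal gigabytes (1 GB = 10**9 bytes)."""
    return total_bytes / 1e9 * STORAGE_PRICE_PER_GB_MONTH


total = retained_bytes(devices=50_000, readings_per_minute=2,
                       retention_days=30, bytes_per_reading=200)
print(f"{total / 1e9:,.0f} GB retained, ${storage_cost(total):,.0f}/month")
```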

Migrating from Self-Hosted Cassandra

A zero-downtime migration involves three phases.

Phase 1: Schema and data export. Use cqlsh with the COPY TO command for tables under a few hundred million rows:

cqlsh source-host 9042 -e "COPY keyspace.table TO 'export.csv' WITH HEADER=TRUE"

For large tables, the Keyspaces Data Migrator (AWS open-source tool) handles parallel export with configurable concurrency and automatically respects Keyspaces write throughput limits.

Phase 2: Dual-write. Update your application to write to both the source Cassandra cluster and Keyspaces simultaneously. Keep dual-write running until the backfill completes and read validation confirms data consistency. This phase typically runs 24–72 hours depending on dataset size.
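One way to structure the dual-write phase is sketched below. It assumes the source cluster stays the system of record, so a failed mirror write to Keyspaces is queued for replay instead of failing the request. DualWriter, write_source, and write_target are hypothetical names; in a real application the two callables would wrap the driver sessions for each cluster.

```python
from typing import Any, Callable, Dict, List

Row = Dict[str, Any]
WriteFn = Callable[[Row], None]


class DualWriter:
    """Write every row to the source cluster, then mirror it to the target."""

    def __init__(self, write_source: WriteFn, write_target: WriteFn) -> None:
        self.write_source = write_source
        self.write_target = write_target
        self.failed_mirrors: List[Row] = []  # rows to replay against the target

    def write(self, row: Row) -> None:
        # The source is still the system of record, so its errors propagate.
        self.write_source(row)
        try:
            self.write_target(row)
        except Exception:
            # A target failure must not fail the request; keep the row for replay.
            self.failed_mirrors.append(row)

    def replay_failed(self) -> int:
        """Retry mirror writes that failed earlier; return how many succeeded."""
        pending, self.failed_mirrors = self.failed_mirrors, []
        succeeded = 0
        for row in pending:
            try:
                self.write_target(row)
                succeeded += 1
            except Exception:
                self.failed_mirrors.append(row)
        return succeeded
```

The replay queue here lives in memory, which is enough to show the idea. A production version would persist it (or rely on the backfill to cover the gap) so that a process restart does not lose mirror writes.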

Phase 3: Cut over reads. Switch read traffic to Keyspaces incrementally — start with one service or one query type. Once read traffic is fully on Keyspaces and you’ve verified correctness under production load for at least 48 hours, stop writes to the source cluster.
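Where cutting over by service or query type is too coarse, a percentage-based rollout is a common alternative. The sketch below is an assumption layered on top of the phases above, not something Keyspaces provides: it hashes a routing key (for example a device or customer ID) into 100 buckets so that the same key always reads from the same backend while the percentage is raised.

```python
import zlib


def use_keyspaces(routing_key: str, rollout_percent: int) -> bool:
    """Return True when reads for this key should be served by Keyspaces."""
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    # crc32 is stable across processes, unlike Python's built-in hash().
    bucket = zlib.crc32(routing_key.encode("utf-8")) % 100
    return bucket < rollout_percent


backend = "keyspaces" if use_keyspaces("sensor-abc-001", 25) else "cassandra"
print(backend)
```

Because the bucket for a key never changes, raising the percentage only ever moves keys from Cassandra to Keyspaces, never back, which keeps validation results comparable between steps.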

Before starting, run the Cassandra Compatibility Checker against your schemas. Keyspaces rejects schemas with certain index types or unsupported options at CREATE TABLE time, so discover those during Phase 1, not during Phase 3.

The Partition Key Gotcha

Partition key design on Keyspaces is more consequential than on self-managed Cassandra because throttling behavior is different.

On Cassandra, a hot partition causes localized performance degradation on the nodes that own that token range. You notice it in latency metrics and can react. On Keyspaces, a hot partition that exceeds the per-partition throughput limit is throttled immediately. The request fails with a capacity error (Cassandra drivers typically surface it as a write or read timeout rather than a DynamoDB-style ProvisionedThroughputExceededException), and the client is expected to retry with exponential backoff.
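Client-side backoff for throttled requests can be sketched as follows. This is a generic retry helper using exponential backoff with full jitter, not a driver feature; with_backoff and its parameters are illustrative names, and the exception types to retry on depend on how your driver reports throttling.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def with_backoff(operation: Callable[[], T],
                 retryable: Tuple[Type[BaseException], ...],
                 max_attempts: int = 5,
                 base_delay: float = 0.05,
                 max_delay: float = 2.0,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Run `operation`, retrying on `retryable` errors with jittered backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return operation()
        except retryable:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            # Full jitter: sleep a random time up to the capped exponential delay.
            delay = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, delay))
    raise AssertionError("unreachable")
```

The jitter matters as much as the exponent: without it, every client throttled by the same hot partition retries at the same instant and hits the limit again.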

The per-partition limit in Keyspaces provisioned mode is nominally the table’s provisioned throughput divided by the number of active partitions, but capacity is not evenly distributed and there is no guaranteed per-partition floor. In practice, any partition receiving more than ~1,000 WCU/second (or ~3,000 RCU/second for reads) will start to see throttling even if the table-level provisioned capacity is higher.

For IoT data, the naive partition key is device_id. This works as long as no single device writes faster than ~1,000 rows/second at one WCU per row. For higher-frequency devices, use a composite partition key: (device_id, shard) where shard is a small bucket number assigned per write (e.g., a random or round-robin value from 0 to 7). Deriving the shard from device_id alone, such as device_id % 8, would send all of a device’s writes to the same partition. Reads then require querying all shards and merging, but writes spread across partitions evenly.
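The write-side and read-side halves of a sharded key can be sketched like this. It assumes eight shards and a round-robin shard choice per write, so that a single device’s writes rotate across all eight partitions; the helper names and the (timestamp, value) row shape are illustrative only.

```python
import heapq
import itertools
from typing import Iterable, List, Tuple

SHARD_COUNT = 8  # matches the modulo-8 bucket example above

Reading = Tuple[int, float]  # (timestamp in epoch ms, value)

_sequence = itertools.count()


def next_shard() -> int:
    """Round-robin shard choice so one device's writes rotate over all shards."""
    return next(_sequence) % SHARD_COUNT


def partition_keys(device_id: str) -> List[Tuple[str, int]]:
    """Every (device_id, shard) partition a read has to visit."""
    return [(device_id, shard) for shard in range(SHARD_COUNT)]


def merge_newest_first(per_shard_rows: Iterable[List[Reading]],
                       limit: int) -> List[Reading]:
    """Merge per-shard results that are each already sorted newest first."""
    merged = heapq.merge(*per_shard_rows, key=lambda row: row[0], reverse=True)
    return list(itertools.islice(merged, limit))
```

A read issues one query per entry in partition_keys(device_id), ideally concurrently, and passes the result lists to merge_newest_first. The merge is lazy, so asking for the latest 10 rows does not materialize every shard’s full result.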

Keyspaces vs. DynamoDB for Time-Series

Both services can handle time-series workloads. The choice comes down to your team and your existing code.

Keyspaces wins when:

- You have an existing Cassandra application and want to minimize rewriting

- Your query model relies on CQL range scans within a partition (e.g., WHERE device_id = ? AND ts > ? AND ts < ?), which maps naturally to Cassandra clustering columns and requires no secondary index

- You prefer wide rows with many columns per partition over DynamoDB’s key-value mental model

DynamoDB wins when:

- You need single-digit millisecond latency at any scale without driver-level tuning

- You want tighter AWS service integration (DynamoDB Streams → Lambda, DynamoDB Accelerator for caching, PartiQL)

- Your team has no Cassandra experience and you’re starting from scratch

For the specific case of aggregating telemetry into a reporting layer, DynamoDB Streams feeding into Redshift or S3 + Athena is a well-trodden path with solid AWS tooling. See Amazon Redshift vs DynamoDB for how those two services compare as the downstream analytics target.

Real Use Case: 30-Day IoT Telemetry

50,000 IoT devices, each sending temperature/humidity readings every 30 seconds, 30-day retention.

Table design:

CREATE KEYSPACE iot_telemetry
  WITH REPLICATION = {'class': 'SingleRegionStrategy'}
  AND TAGS = {'Environment': 'production'};

CREATE TABLE iot_telemetry.device_readings (
  device_id text,
  bucket_day date,
  ts timestamp,
  temperature decimal,
  humidity decimal,
  battery_pct smallint,
  PRIMARY KEY ((device_id, bucket_day), ts)
) WITH CLUSTERING ORDER BY (ts DESC)
  AND default_time_to_live = 2592000
  AND TAGS = {'TTL': '30d'};

A few design decisions here:

The partition key is (device_id, bucket_day). Using bucket_day as part of the partition key caps each partition at one day of readings per device: 2 readings/minute × 60 × 24 = 2,880 rows per partition. This is a comfortable partition size and prevents any device from exceeding the per-partition throughput limit on Keyspaces.

CLUSTERING ORDER BY (ts DESC) stores the most recent reading at the top of the partition, so the common query “give me the last 10 readings for this device” can use LIMIT 10 and read only the head of the partition instead of scanning and re-sorting it.

default_time_to_live = 2592000 (30 days in seconds) handles expiration automatically. Unlike DynamoDB, Keyspaces bills TTL deletes separately from storage, so add that line item from the current pricing page to the estimate.
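On the write path, the application has to derive bucket_day from each reading’s timestamp. A minimal sketch follows, assuming buckets are UTC calendar days and timestamps are timezone-aware; the function names are illustrative.

```python
from datetime import date, datetime, timedelta, timezone


def bucket_day(ts: datetime) -> date:
    """UTC calendar day used as the second partition key component."""
    if ts.tzinfo is None:
        raise ValueError("ts must be timezone-aware")
    return ts.astimezone(timezone.utc).date()


def rows_per_partition(interval_seconds: int) -> int:
    """Rows one device writes into a single day bucket."""
    return 86_400 // interval_seconds


# A reading stamped just after midnight at UTC+2 still belongs to the
# previous UTC day, so it lands in the 2026-04-04 bucket.
local = datetime(2026, 4, 5, 1, 0, tzinfo=timezone(timedelta(hours=2)))
print(bucket_day(local), rows_per_partition(30))
```

Fixing the bucket to UTC avoids two writers in different time zones placing the same instant in different partitions.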

Read pattern for the last hour of readings for a device:

SELECT ts, temperature, humidity, battery_pct
FROM iot_telemetry.device_readings
WHERE device_id = 'sensor-abc-001'
  AND bucket_day = '2026-04-04'
  AND ts > '2026-04-04 09:00:00+0000'
ORDER BY ts DESC;

This query hits a single partition — no scatter-gather, no secondary index. At provisioned capacity, it returns in 2–8ms depending on result set size.
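A window that crosses midnight touches two day buckets, so the single-partition query has to be issued once per bucket. The helper below is a sketch under the assumption that buckets are UTC calendar days; it lists the bucket_day values for a window, newest first to match the table’s clustering order.

```python
from datetime import date, datetime, timedelta, timezone
from typing import List


def buckets_for_window(start: datetime, end: datetime) -> List[date]:
    """UTC day buckets touched by the window [start, end], newest first."""
    first = start.astimezone(timezone.utc).date()
    last = end.astimezone(timezone.utc).date()
    if last < first:
        raise ValueError("end must not be before start")
    span = (last - first).days
    return [last - timedelta(days=offset) for offset in range(span + 1)]


window_end = datetime(2026, 4, 4, 0, 30, tzinfo=timezone.utc)
window_start = window_end - timedelta(hours=1)
for day in buckets_for_window(window_start, window_end):
    print(day.isoformat())  # one query per bucket_day value
```

Each returned date becomes the bucket_day bind value in a separate execution of the query above, and the per-bucket results can be concatenated in order because the buckets are already newest first.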

For the dashboard use case (all devices, latest reading), maintain a separate device_latest table updated on each write. On Cassandra you’d use a materialized view; on Keyspaces, a Lambda function triggered by application logic or an event on the write path handles this with acceptable overhead at this device count.

At 50,000 devices with the table design above, provisioned at 1,700 WCU and 500 RCU (for dashboard reads), with 30-day TTL managing storage to ~864 GB, the monthly cost runs to approximately $1,070: $807 for writes, about $47 for reads (500 × $0.00013 × 730 hours), and $216 for storage. No Cassandra cluster management is required.
