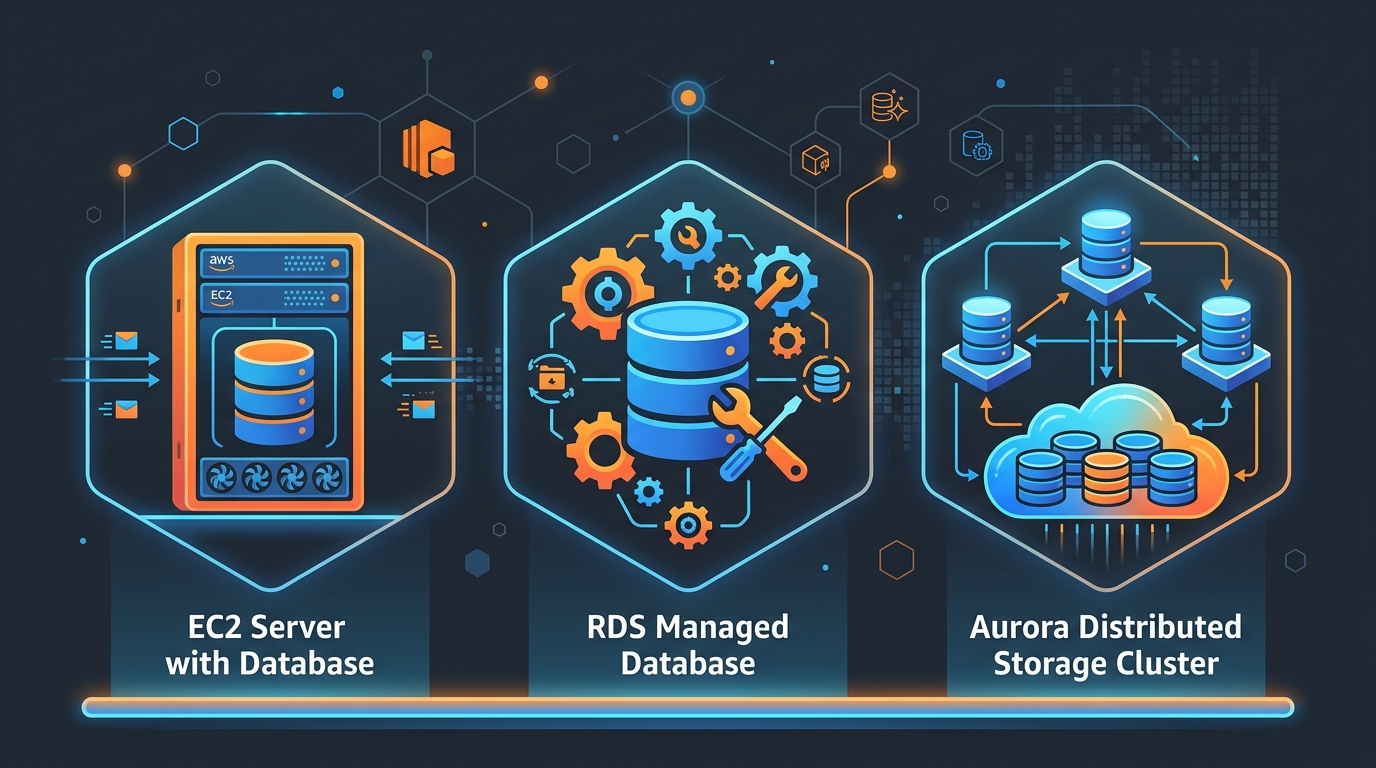

Database on EC2 vs RDS vs Aurora in 2026: When Each Makes Sense

The question of where to run your database on AWS has gotten more complicated, not less. In 2019, the answer was often “just use RDS.” In 2026, you have EC2 DIY, RDS standard, RDS Custom, Aurora Serverless v2, Aurora I/O-Optimized, and Aurora Global Database — each with a legitimate use case and real cost differences that can swing a bill by 40% or more.

This post walks through each option with actual pricing math and the specific conditions that tip the decision one way or another.

Why People Still Run Databases on EC2

Self-managed databases on EC2 haven’t gone away. Three reasons keep showing up in real production environments.

License portability. If your company already owns a perpetual Oracle or SQL Server Enterprise license, you can bring it to EC2 under the Bring Your Own License model. On RDS, Oracle SE2 on a db.r6g.2xlarge runs about $0.48/hour for the instance plus $0.23/hour for the Oracle SE2 license. At 8,760 hours per year, that license component alone comes to roughly $2,000/year on top of compute. If you own the license outright, EC2 cuts that line entirely.

Configuration depth. PostgreSQL’s huge_pages, custom shared memory settings, OS-level NUMA tuning, or running a patched build of MySQL with specific engine changes: most of that is out of reach on standard managed services, which expose a curated set of parameters and no OS access. Teams running high-frequency trading data or specialized analytics engines often hit this wall.

Legacy migration staging. When you’re lifting a large on-premises Oracle RAC cluster to AWS, going straight to Aurora PostgreSQL in one step is rarely realistic. EC2 buys time: replicate to EC2 first, stabilize, then migrate the application layer, then consider Aurora. The intermediate state can last 6–18 months.

What Self-Managing Actually Costs You

The number that gets underestimated is engineer hours. Running a production PostgreSQL cluster on EC2 means you own:

- Backup jobs (pg_dump or WAL archiving to S3, tested restores)

- Replication setup (Patroni or repmgr for streaming replication and failover, HAProxy for routing traffic to the current primary, pgBouncer for connection pooling)

- Storage scaling (EBS volume resizing without downtime requires planning)

- OS and PostgreSQL security patching — with zero maintenance window automation

- Monitoring (you build it or buy it: pg_stat_statements, CloudWatch custom metrics, PagerDuty integration)

A conservative estimate for a small team: 15–20 hours per month of DBA-adjacent work to keep a two-node PostgreSQL setup healthy on EC2. At $150/hour loaded engineering cost, that’s $27,000–$36,000/year in hidden overhead. That number alone usually closes the case for managed services for teams under 10 engineers.
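The overhead figure is plain arithmetic, sketched here in Python so you can substitute your own numbers. The function name and its default are illustrative only; the hours and the $150 loaded rate are the assumptions stated above.

```python
def annual_overhead(hours_per_month: float, hourly_rate: float = 150.0) -> float:
    """Yearly cost of DBA-adjacent work at a loaded hourly engineering rate."""
    return hours_per_month * hourly_rate * 12


# Range quoted above: 15 to 20 hours per month at $150/hour.
low = annual_overhead(15)
high = annual_overhead(20)
print(f"${low:,.0f} to ${high:,.0f} per year")
```

Swapping in your own loaded rate and a measured hour count from your ticket tracker gives a more honest figure than either end of this range.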

RDS in 2026: What You’re Actually Paying For

RDS Multi-AZ gives you synchronous replication to a standby in a second AZ, automated failover in 60–120 seconds, automated backups to S3, OS patching during your maintenance window, and one-click minor version upgrades. The “managed service tax” is real — RDS is roughly 2–3x the price of an equivalent EC2 instance per hour — but it’s bundled with the things listed above.

A db.r6g.xlarge (4 vCPU, 32 GB RAM) in us-east-1:

- RDS Multi-AZ: ~$0.48/hour → ~$4,205/year on-demand (0.48 × 8,760 hours), ~$2,207/year on a 1-year Reserved Instance (no upfront)

- Equivalent EC2 r6g.xlarge: ~$0.20/hour → $1,752/year on-demand

The gap closes when you account for the engineering time above. It widens when you add managed storage: RDS gp3 storage at $0.115/GB/month for 500 GB is $690/year. On EC2, the underlying EBS gp3 volume is cheaper at roughly $0.08/GB/month ($480/year for 500 GB), and you’re managing the volume yourself.

Read replicas on RDS add per-replica instance cost (same pricing tier as the primary) but eliminate the work of setting up and monitoring streaming replication. For a PostgreSQL SaaS app with heavy read traffic, a single read replica at the db.r6g.large tier (~$0.24/hour Multi-AZ) often handles all reporting and analytics traffic without touching the primary. See RDS PostgreSQL Blue/Green Deployment for how to run zero-downtime major version upgrades — something you’re fully responsible for on EC2.

Aurora in 2026: When the Premium Is Justified

Aurora is not just RDS with a different engine. The storage layer is fundamentally different: it’s a distributed, SSD-backed log-structured storage system that replicates six ways across three AZs. Recovery after a crash is measured in seconds, not minutes, because Aurora never needs to replay the redo log from a checkpoint.

Aurora Serverless v2 matters now because it actually scales smoothly. v1 was cold-start-prone and effectively unusable for latency-sensitive apps. v2 scales in increments of 0.5 ACUs (Aurora Capacity Units) from 0.5 to 128 ACUs, responding to load in under a second. An ACU is approximately 2 GB of RAM with proportional CPU. At $0.12/ACU-hour, a database sitting at 1 ACU costs $87.60/month. Under a burst to 16 ACUs during peak traffic, you’re paying $0.12 × 16 × (hours at peak). For apps with strong day/night traffic variation — think B2B SaaS — Serverless v2 can cut the monthly database bill by 35–50% compared to a fixed-size RDS instance.
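To make the ACU pricing concrete, here is a minimal sketch that prices a month from a usage profile. The $0.12/ACU-hour rate is the one quoted above; the 730-hour month, the function name, and the "8 hours on 22 workdays" traffic pattern are assumptions chosen for illustration, not measurements.

```python
ACU_HOUR_PRICE = 0.12   # USD per ACU-hour, as quoted above
HOURS_PER_MONTH = 730   # assumed average month length


def serverless_monthly_cost(profile):
    """Sum the cost of (acus, hours) pairs that together cover one month."""
    return sum(acus * hours * ACU_HOUR_PRICE for acus, hours in profile)


# Idle database pinned at 1 ACU for the whole month.
baseline = serverless_monthly_cost([(1, HOURS_PER_MONTH)])

# Hypothetical B2B pattern: 16 ACUs for 8 hours on 22 workdays, 1 ACU otherwise.
peak_hours = 8 * 22
spiky = serverless_monthly_cost(
    [(16, peak_hours), (1, HOURS_PER_MONTH - peak_hours)]
)

print(f"baseline: ${baseline:,.2f}/month, spiky: ${spiky:,.2f}/month")
```

Real workloads ramp gradually rather than jumping between two levels, so treat the result as a rough estimate and check it against the ACU utilization metrics for your own cluster.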

Aurora I/O-Optimized is the 2026 pricing story worth knowing. Standard Aurora charges $0.20 per million I/O requests. For write-heavy workloads — high-throughput OLTP, audit logging, event sourcing tables — I/O charges can become 30–40% of the total Aurora bill. Aurora I/O-Optimized eliminates the per-I/O charge entirely and replaces it with a flat 30% higher instance and storage cost.

The crossover point: if I/O charges currently represent more than roughly 25% of your Aurora bill, switching to I/O-Optimized saves money. You can check this in Cost Explorer by filtering by service = RDS and usage type containing Aurora:StorageIOUsage. A database writing 800 million I/Os/month pays $160 in I/O charges on standard pricing. With I/O-Optimized, that same database pays 30% more on storage + instance but nothing for I/O. Because the premium scales with instance and storage spend, the break-even volume depends on cluster size: 700–750 million I/Os/month is the break-even for a cluster spending roughly $470–500/month on instance and storage, and larger clusters break even at proportionally higher volumes.
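The break-even can be worked out directly from the two pricing rules described above. This sketch assumes the simplified model in this post ($0.20 per million I/Os on standard, a flat 30% premium on instance plus storage for I/O-Optimized); the function names and the $500/month base cost are hypothetical, so check current Aurora pricing before relying on the output.

```python
IO_PRICE_PER_MILLION = 0.20   # USD, standard Aurora I/O rate quoted above
IO_OPTIMIZED_PREMIUM = 0.30   # flat uplift on instance + storage, per this post


def standard_monthly(base_cost, io_millions):
    """Standard Aurora: instance + storage, plus per-request I/O charges."""
    return base_cost + io_millions * IO_PRICE_PER_MILLION


def io_optimized_monthly(base_cost):
    """I/O-Optimized: premium on instance + storage, no I/O charges."""
    return base_cost * (1 + IO_OPTIMIZED_PREMIUM)


def break_even_io_millions(base_cost):
    """Monthly I/O volume (in millions) where both options cost the same."""
    return base_cost * IO_OPTIMIZED_PREMIUM / IO_PRICE_PER_MILLION


def break_even_io_share():
    """I/O share of the standard bill at the break-even point."""
    return IO_OPTIMIZED_PREMIUM / (1 + IO_OPTIMIZED_PREMIUM)


base = 500.0  # hypothetical monthly instance + storage spend in USD
print(f"break-even: {break_even_io_millions(base):.0f} million I/Os/month")
print(f"I/O share at break-even: {break_even_io_share():.1%}")
print(f"800M I/Os: standard ${standard_monthly(base, 800):.2f} "
      f"vs I/O-Optimized ${io_optimized_monthly(base):.2f}")
```

Under this model the break-even share works out to about 23% of the standard bill regardless of cluster size, which is where the "roughly 25%" rule of thumb comes from.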

Aurora Global Database runs a primary cluster in one region with up to five read-only secondary regions. Replication lag is typically under 1 second. For applications serving users in Europe and the US from a single dataset, Global Database with a secondary in eu-west-1 costs the replication I/O and secondary storage plus a small data transfer fee — often $200–400/month additional. Compare that to the complexity of application-level multi-region replication and it’s inexpensive. This pairs well with the cost optimization strategies covered in AWS FinOps in 2026.

RDS Custom: The Underused Middle Option

RDS Custom is what you use when you need Oracle or SQL Server with licensing complexity, specific OS configurations, or custom agents running on the instance — but you still want automated backups, CloudWatch integration, and Multi-AZ without building it yourself.

With RDS Custom, you get SSH and SSM access to the underlying EC2 instance. You can install third-party monitoring agents, run custom scripts, modify OS-level parameters, and apply out-of-band patches. AWS still manages the database infrastructure outside the “customization zone” you configure.

For Oracle Database Enterprise Edition with complex RAC-to-single-node migrations, or SQL Server with custom CLR assemblies and linked servers, RDS Custom often replaces a 6-month EC2 DIY project with a 2-week configuration effort.

The Decision Framework

Team size and DBA capacity. Teams without a dedicated DBA should default to RDS or Aurora. The gap between “it runs” and “it’s production-ready” on EC2 is wide and unforgiving at 2 AM.

Database engine. PostgreSQL and MySQL: RDS and Aurora are both excellent. Oracle and SQL Server: evaluate RDS Custom before committing to EC2 DIY. Cassandra or other engines that RDS does not support: self-managed EC2 is your only way to run them on AWS (or consider Amazon Keyspaces for Cassandra-compatible workloads).

Traffic pattern. Steady, predictable load → RDS with Reserved Instances. Variable, spiky load → Aurora Serverless v2. Globally distributed users needing low-latency reads → Aurora Global Database.

Cost sensitivity. For I/O-heavy workloads, run Aurora I/O-Optimized. For steady-state workloads, RDS r6g Reserved Instances often beat Aurora on pure compute cost. For the absolute lowest database cost at the expense of operational work, EC2 with Reserved Instances wins on paper — but only if your engineer time is free, which it isn’t.

Compliance. RDS and Aurora both support encryption at rest with KMS, IAM authentication, VPC isolation, and automated audit logging. If you need FedRAMP High or specific FIPS-validated cryptography modules, verify current compliance scope at https://aws.amazon.com/compliance/services-in-scope/.
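The engine and traffic rules above can be condensed into a small lookup. This is only a sketch of the framework as written here: the function name, the engine strings, and the traffic labels are made up for illustration, and it deliberately ignores team size, cost sensitivity, and compliance, which need human judgment.

```python
def recommend(engine: str, traffic: str = "steady") -> str:
    """Return a starting point, not a final answer.

    engine:  "postgresql", "mysql", "oracle", "sqlserver", or anything else
    traffic: "steady", "spiky", or "global"
    """
    engine = engine.lower()
    if engine in ("oracle", "sqlserver"):
        return "Evaluate RDS Custom before EC2 DIY"
    if engine == "cassandra":
        return "Self-managed EC2 or Amazon Keyspaces"
    if engine not in ("postgresql", "mysql"):
        return "Self-managed EC2"
    if traffic == "spiky":
        return "Aurora Serverless v2"
    if traffic == "global":
        return "Aurora Global Database"
    return "RDS with Reserved Instances"


print(recommend("PostgreSQL", "spiky"))
print(recommend("oracle"))
```

Encoding the rules this way mostly helps surface the cases the framework does not cover, such as a spiky Oracle workload, where you still have to weigh the trade-offs by hand.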

A Concrete Example: PostgreSQL for a SaaS App

Assume: 100 GB database, moderate write load (~50 million I/Os/month), 10,000 peak concurrent connections (handled via pgBouncer), us-east-1.

EC2 r6g.xlarge (self-managed):

- Compute: $0.20/hour → $1,752/year

- EBS gp3 100 GB: $0.08/GB/month → $96/year

- Snapshot storage ~50 GB: $0.05/GB/month → $30/year

- Engineer overhead (12 hrs/month × $150): $21,600/year

- Total: ~$23,478/year

RDS PostgreSQL db.r6g.xlarge Multi-AZ (1-year Reserved, no upfront):

- Instance: $2,207/year

- Storage gp3 100 GB: $0.115/GB/month × 2 (Multi-AZ) → $276/year

- Automated backups 100 GB: $0.095/GB/month → $114/year

- Engineer overhead (2 hrs/month × $150): $3,600/year

- Total: ~$6,197/year

Aurora PostgreSQL db.r6g.xlarge (1-year Reserved):

- Instance: $2,451/year (Aurora carries a ~10% premium over RDS on the same instance class)

- Aurora storage 100 GB: $0.10/GB/month → $120/year

- I/O 50 million/month: $0.20/million → $120/year

- Engineer overhead (1.5 hrs/month × $150): $2,700/year

- Total: ~$5,391/year

Aurora wins on total cost at this scale because of lower operational overhead (faster failover, no replica lag monitoring complexity, easier version upgrades). At 10x the I/O volume, evaluate I/O-Optimized pricing.
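The three totals above can be reproduced with a short script, which also makes it easy to rerun the comparison with your own rates. Every number comes from the line items in this example; the helper names and dictionary layout are illustrative, and the Reserved Instance figures are taken as given rather than derived.

```python
HOURS_PER_YEAR = 8760
ENGINEER_RATE = 150  # USD per hour, loaded cost assumed in this example


def storage_per_year(gb, rate_per_gb_month, copies=1):
    """Annual cost of storage billed per GB-month."""
    return gb * rate_per_gb_month * 12 * copies


def engineer_per_year(hours_per_month):
    """Annual cost of engineer time spent operating the database."""
    return hours_per_month * ENGINEER_RATE * 12


options = {
    "EC2 r6g.xlarge (self-managed)": [
        0.20 * HOURS_PER_YEAR,                 # on-demand compute
        storage_per_year(100, 0.08),           # EBS gp3
        storage_per_year(50, 0.05),            # snapshots
        engineer_per_year(12),
    ],
    "RDS PostgreSQL Multi-AZ": [
        2207,                                  # 1-year RI, as quoted
        storage_per_year(100, 0.115, copies=2),
        storage_per_year(100, 0.095),          # automated backups
        engineer_per_year(2),
    ],
    "Aurora PostgreSQL": [
        2451,                                  # 1-year RI, as quoted
        storage_per_year(100, 0.10),
        50 * 0.20 * 12,                        # 50 million I/Os per month
        engineer_per_year(1.5),
    ],
}

totals = {name: round(sum(items)) for name, items in options.items()}
cheapest = min(totals, key=totals.get)

for name, total in totals.items():
    print(f"{name}: ${total:,}/year")
print(f"Lowest total: {cheapest}")
```

Note how sensitive the ranking is to the engineer-hours assumption: it is the largest line item in every option, so it is worth measuring rather than guessing.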

For caching patterns that reduce read load on any of these options, see Amazon ElastiCache and Horizontal vs Vertical Scaling for how read replicas fit into a broader scaling strategy.

The honest answer in 2026: EC2 databases make sense for licensing edge cases, custom engine requirements, or teams with dedicated DBA staff and predictable workloads. For everything else, managed services pay for themselves within the first year when you count engineer time honestly.
