Decoupled Architecture [Exam Tips]

![Decoupled Architecture [Exam Tips]](/wp-content/uploads/sites/5/2021/12/decouple-architecture-e1645067426316.png)

If you haven’t read it yet, check out our post on horizontal vs vertical scaling. Now let’s talk about what decoupling your applications actually means and how to design a decoupled architecture on AWS.

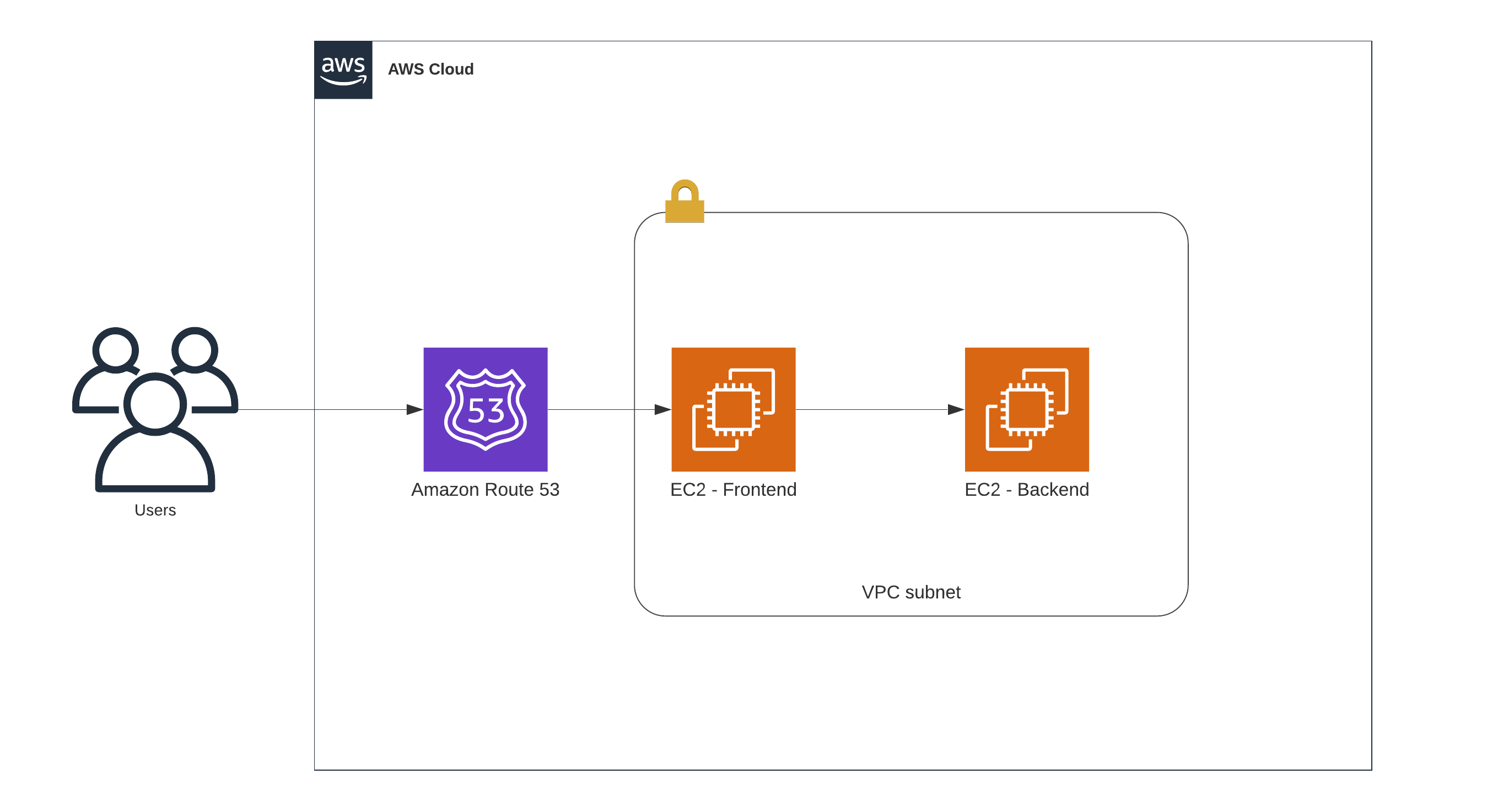

First, what’s wrong with this setup?

tight coupling architecture


At first glance it looks fine. Users send orders through a web server, which forwards them to a backend. But what happens when that web server goes down? Nobody can place orders. That’s tight coupling. The user depends directly on the frontend EC2 instance, which depends directly on the backend EC2 instance. One failure cascades upward.

What is Decoupled Architecture?

Tight coupling is easy to build but creates fragile systems. The fix is to decouple your architecture.

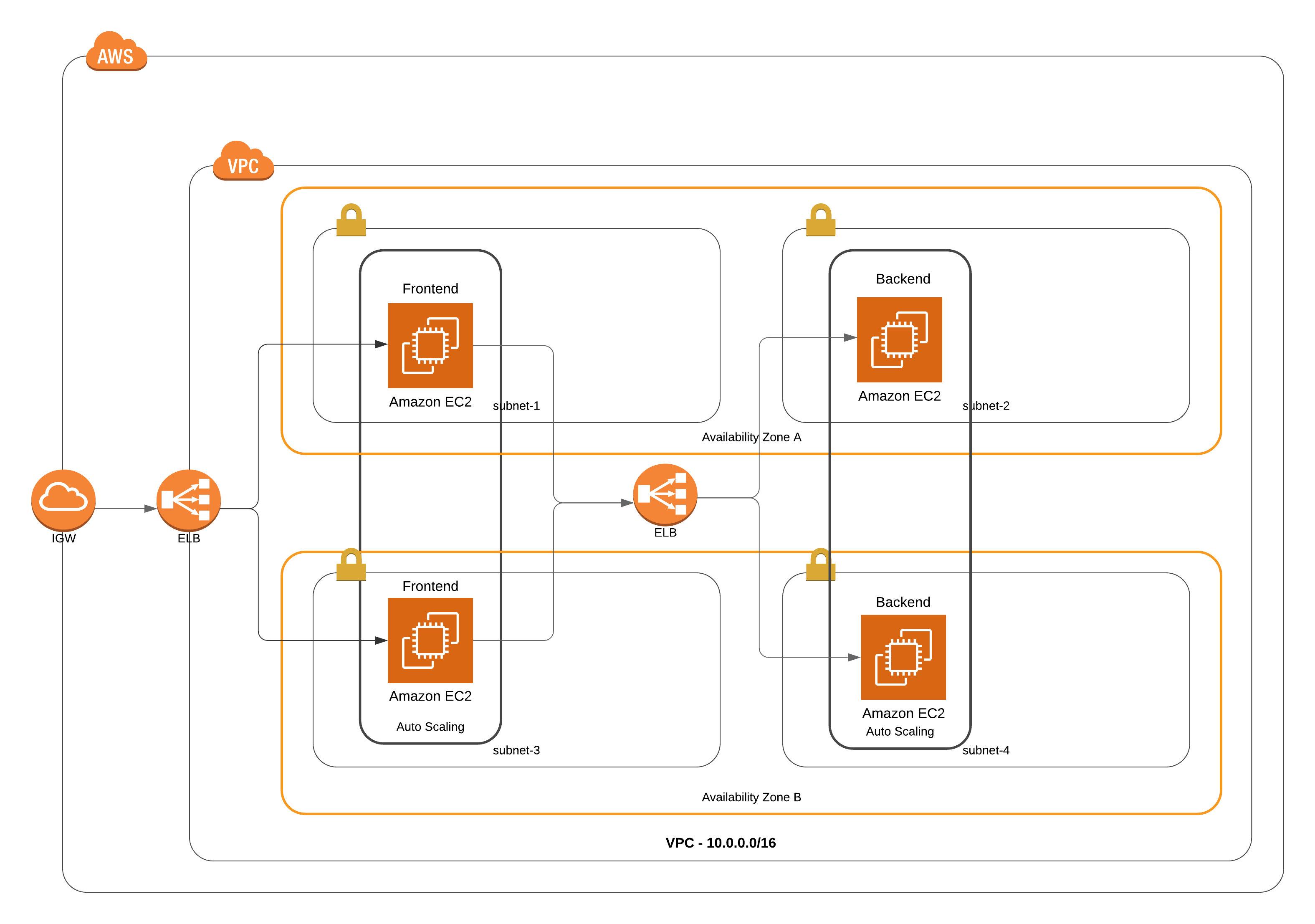

AWS Decoupled Architecture


How to Decouple Architecture

In the diagram above, users still get the same result, but the request goes through an application load balancer first. The ALB distributes traffic to multiple EC2 instances serving as the frontend. Those instances send HTTP requests to another load balancer in front of the backend, which distributes to backend EC2 instances.

With this setup, if an instance fails the health check on either side, the load balancer routes traffic elsewhere. The user doesn’t notice.
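To make the routing behaviour concrete, here is a minimal sketch in plain Python. It is not how an ALB is implemented; `Instance` and `LoadBalancer` are hypothetical stand-ins, and the `healthy` flag stands in for the result of a health check. It only shows the idea: requests rotate across targets, and unhealthy ones are skipped.

```python
class Instance:
    """Hypothetical stand-in for an EC2 instance."""

    def __init__(self, name):
        self.name = name
        self.healthy = True  # stands in for the latest health check result

    def handle(self, request):
        return f"{self.name} handled {request}"


class LoadBalancer:
    """Round-robin over registered targets, skipping unhealthy ones."""

    def __init__(self, targets):
        self.targets = list(targets)
        self._next = 0  # round-robin cursor

    def route(self, request):
        # Try each target at most once, starting from the cursor.
        for _ in range(len(self.targets)):
            target = self.targets[self._next]
            self._next = (self._next + 1) % len(self.targets)
            if target.healthy:
                return target.handle(request)
        raise RuntimeError("no healthy targets")


frontend = LoadBalancer([Instance("web-1"), Instance("web-2")])
frontend.targets[0].healthy = False  # simulate web-1 failing its health check

# Every request still gets an answer, all of them from web-2.
responses = [frontend.route(f"order-{i}") for i in range(3)]
```

The caller only ever talks to `frontend.route(...)`. It never needs to know which instance answered, or that one of them is down.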

Why Decouple?

The load balancer sends traffic only to healthy instances, so you can run several instances at once and lose one without an outage. The frontend doesn’t need to know anything about the backend except “send the request to the load balancer.” The load balancer handles the rest.

As long as you keep at least one frontend instance and one backend instance running, you’re fine.

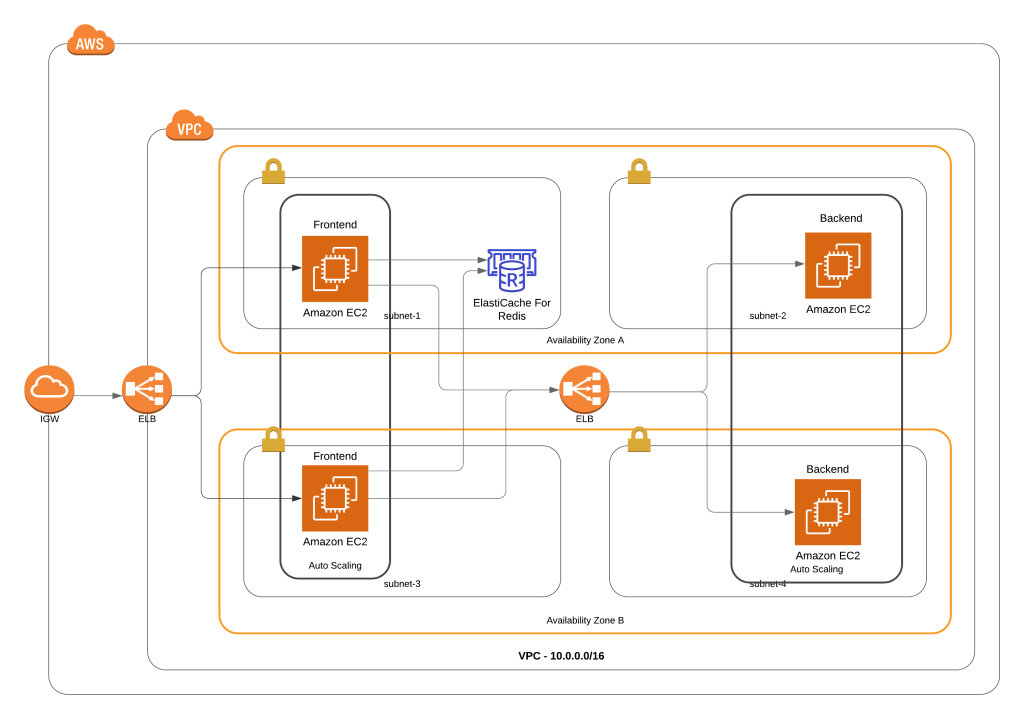

Decouple the Inner Application

Look at your whole architecture to find where you need more decoupling. Take the online store example. Users log in and create orders. If one EC2 instance goes down, the load balancer redirects users to a healthy instance. But the user session was stored on the failed instance, so users have to log in again.

The fix: store sessions outside the instances. AWS gives you ElastiCache (Redis or Memcached) for this.

Let’s look at the updated diagram:

AWS Decoupled Architecture with Redis


Redis holds all user sessions. Now when EC2 instances fail or new ones spin up, users keep their session and can keep browsing. They don’t notice the failure happening behind the scenes.
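Here is a rough sketch of why this works. The `SessionStore` class below is an in-memory stand-in for an external store such as ElastiCache for Redis, and `WebInstance` is a hypothetical web server. Both names are made up for illustration. The point is that the web servers hold no session data themselves, so any of them can serve any user.

```python
import uuid


class SessionStore:
    """In-memory stand-in for an external session store (e.g. Redis)."""

    def __init__(self):
        self._data = {}

    def set(self, key, value):
        self._data[key] = value

    def get(self, key):
        return self._data.get(key)


class WebInstance:
    """A stateless web server: it keeps no session data of its own."""

    def __init__(self, name, store):
        self.name = name
        self.store = store

    def login(self, user):
        session_id = uuid.uuid4().hex
        self.store.set(session_id, {"user": user})
        return session_id

    def current_user(self, session_id):
        session = self.store.get(session_id)
        return session["user"] if session else None


store = SessionStore()
web_1 = WebInstance("web-1", store)
web_2 = WebInstance("web-2", store)

session_id = web_1.login("alice")
del web_1  # simulate the instance that handled the login going away

# A different instance can still resolve the session.
user = web_2.current_user(session_id)
```

In a real deployment the store would be a network service reached through a Redis or Memcached client rather than a Python dict, but the shape of the code on the web server stays the same.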

For the differences between Redis and Memcached, see our article on Memcached vs Redis.

AWS Decoupling Services

Loose coupling works better than tight coupling in almost every case. Don’t let one EC2 instance talk directly to another. Build for scalability and high availability, and use managed services where you can.

Load balancers aren’t always the answer, though. Sometimes you don’t want a persistent connection from the web server to the backend. Sometimes you want something that holds messages until the backend is ready to process them, instead of keeping the backend running 24/7.

Three AWS services help here:

Simple Queue Service (SQS)

SQS is a fully managed, highly available messaging service. It sits between the web server and backend instead of a load balancer. The web server places messages in a queue, and the backend pulls messages when ready to process them. The two sides never communicate directly, and you don’t need to keep connections alive.
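The producer/consumer pattern can be sketched with Python’s standard `queue` module standing in for SQS. This is only an illustration of the idea, not the SQS API; the function names and order IDs are made up.

```python
import queue

orders = queue.Queue()  # in-memory stand-in for an SQS queue


def web_server_place_order(order_id):
    """Producer: enqueue the order and return immediately."""
    orders.put({"order_id": order_id})


def backend_process_available():
    """Consumer: drain whatever is waiting, whenever the backend runs."""
    processed = []
    while True:
        try:
            message = orders.get_nowait()
        except queue.Empty:
            break
        processed.append(message["order_id"])
    return processed


# The backend is "offline" while these orders arrive.
for order_id in (101, 102, 103):
    web_server_place_order(order_id)

# Later the backend starts and works through the backlog.
processed = backend_process_available()
```

The web server never waits on the backend, and no order is lost while the backend is down. Keep in mind that real SQS differs from this toy: standard queues deliver at least once and don’t guarantee ordering, and the consumer has to delete each message after processing it.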

Simple Notification Service (SNS)

SNS pushes notifications. If you have one message and want to deliver it proactively to multiple endpoints instead of leaving it in a queue, SNS is the right tool.
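The difference from a queue is easiest to see in code. Below is a minimal fan-out sketch where `Topic` is an in-memory stand-in for an SNS topic and the two lists stand in for subscribed endpoints. All names are hypothetical.

```python
class Topic:
    """In-memory stand-in for an SNS topic."""

    def __init__(self):
        self._subscribers = []

    def subscribe(self, callback):
        self._subscribers.append(callback)

    def publish(self, message):
        # Push the same message to every subscriber right away.
        for callback in self._subscribers:
            callback(message)


email_outbox = []       # stands in for an email endpoint
inventory_updates = []  # stands in for a second subscriber

order_events = Topic()
order_events.subscribe(email_outbox.append)
order_events.subscribe(inventory_updates.append)

order_events.publish("order 101 placed")
```

One `publish` call reaches both subscribers. The publisher doesn’t know who is listening, and nobody has to poll for the message.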

API Gateway

API Gateway gives you a secure, scalable, highly available entry point for your applications. It sits in front of your backend and controls which of your AWS resources callers can reach.
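As a toy illustration of the “single entry point” idea, the sketch below exposes only the routes that were explicitly registered and rejects callers without a valid key. This is not the API Gateway API: the `Gateway` class, the `demo-key` credential, and the status codes are assumptions chosen for the example.

```python
VALID_KEYS = {"demo-key"}  # hypothetical credential for the example


class Gateway:
    """Toy entry point: only routes registered here are reachable."""

    def __init__(self):
        self._routes = {}

    def add_route(self, method, path, handler):
        self._routes[(method, path)] = handler

    def handle(self, method, path, api_key):
        if api_key not in VALID_KEYS:
            return 403, "forbidden"
        handler = self._routes.get((method, path))
        if handler is None:
            return 404, "not found"
        return 200, handler()


gateway = Gateway()
gateway.add_route("GET", "/orders", lambda: ["order 101"])

ok = gateway.handle("GET", "/orders", "demo-key")      # exposed route
hidden = gateway.handle("GET", "/admin", "demo-key")   # never registered
denied = gateway.handle("GET", "/orders", "wrong-key") # bad credential
```

Callers only ever see the gateway. Whatever runs behind `/orders` can be replaced or scaled without them noticing.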

Exam Tips

The key point: never tightly couple your applications. On the exam, if you see an answer with tightly coupled resources, skip it. Look for loose coupling instead.

Specifically: an EC2 instance should never talk directly to another EC2 instance. Always put a load balancer, queue, or other intermediary in between.

Before you go, check our related posts:

Design one High Availability application in AWS [Exam Tips]

Difference between Launch Template and Launch Configuration [Exam Tips]

Conclusion

Every layer of your application needs loose coupling, from users arriving through Route 53, through the load balancers, to the inner parts of your application. Just because you’ve decoupled the frontend doesn’t mean you’ve decoupled everything.

Never let EC2 talk directly to EC2. And there’s no single solution: load balancers work for synchronous requests, SQS works when the backend can’t be online all the time, and SNS works for fan-out patterns.
