AWS SQS – All Topics that you need to know [Exam Tips]

![AWS SQS – All Topics that you need to know [Exam Tips]](/wp-content/uploads/sites/5/2021/12/amazon-sqs-feature.png)

Let’s talk about how to decouple applications using poll-based messaging. I’ll walk you through what SQS does, the key settings you’ll touch in practice, and how visibility timeout keeps your messages from getting processed twice.

If you want to compare SQS with SNS, check out this post.

We also cover FIFO and Dead-Letter Queues in separate articles.

What is poll-based messaging?

Think of sending a letter to a family member. You write it, drop it in a mailbox, and the post office delivers it. They check their mailbox whenever they want and read it. That’s poll-based messaging.

A producer (like a web frontend) writes a message to an SQS queue. A backend server polls the queue and retrieves messages when it’s ready to process them. SQS acts like that mailbox.

What is AWS SQS?

SQS is a queue service that lets you decouple your producer and consumer. The producer sends a message to the queue, and the consumer retrieves it later. There’s no direct connection between them.
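Here is a minimal sketch of the producer side, assuming Python with boto3 (this post doesn't name a language or SDK). The `enqueue_order` helper, the order fields, and the queue URL are made up for illustration. `send_message` with `QueueUrl` and `MessageBody` is the boto3 SQS client call.

```python
import json


def enqueue_order(sqs, queue_url, order):
    """Serialize an order and hand it to the queue; returns the SQS message ID."""
    response = sqs.send_message(
        QueueUrl=queue_url,
        MessageBody=json.dumps(order),
    )
    return response["MessageId"]


# Usage with a real client (needs boto3 and AWS credentials, not run here):
#   import boto3
#   sqs = boto3.client("sqs")
#   enqueue_order(sqs, "https://sqs.us-east-1.amazonaws.com/123456789012/orders", {"order_id": 42})
```

The producer returns as soon as SQS accepts the message. It never talks to the consumer directly.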

In a typical setup, you’d have an EC2 instance behind a load balancer. But what happens when the backend isn’t ready to handle a request? With SQS, the frontend sends to the queue, and the backend pulls when it’s ready. No waiting, no dropped requests.

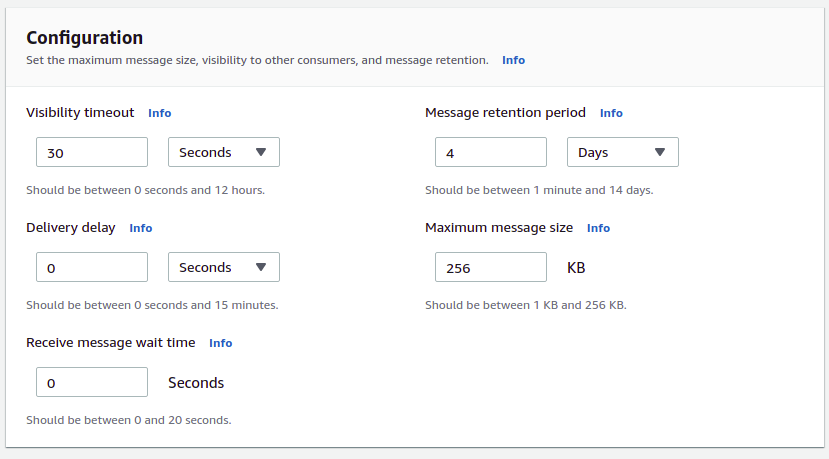

SQS Settings

SQS is straightforward, but a few settings matter. Here are the ones you need for the exam.

Message size

Hard limit: 256KB per message. The message can be text in any format. You can set a lower limit, but you cannot go above 256KB.
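The limit counts bytes, not characters, so non-ASCII text fills it faster than you might expect. Below is a small sketch of a pre-send check. The `fits_in_sqs` helper is hypothetical, and it only measures the body. Message attributes also count toward the total, so leave some headroom if you use them.

```python
MAX_MESSAGE_BYTES = 256 * 1024  # 262,144 bytes, the limit quoted above


def fits_in_sqs(body: str, limit: int = MAX_MESSAGE_BYTES) -> bool:
    """Return True if the UTF-8 encoded body is within the size limit."""
    return len(body.encode("utf-8")) <= limit
```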

Message Retention Period

Messages don’t stay in the queue forever. Default is 4 days. You can configure it between 60 seconds and 14 days. After that, SQS deletes the message.

If your consumer falls behind and messages expire before processing, they’re gone.
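If you set retention from code rather than the console, the value goes in as seconds. This is a sketch that assumes boto3. The `retention_attributes` helper is hypothetical, while `MessageRetentionPeriod` is the actual queue attribute name.

```python
MIN_RETENTION_SECONDS = 60
MAX_RETENTION_SECONDS = 14 * 24 * 60 * 60  # 14 days


def retention_attributes(seconds: int) -> dict:
    """Build the Attributes dict for a retention period, validating the range."""
    if not MIN_RETENTION_SECONDS <= seconds <= MAX_RETENTION_SECONDS:
        raise ValueError("retention must be between 60 seconds and 14 days")
    # SQS queue attribute values are passed as strings.
    return {"MessageRetentionPeriod": str(seconds)}


# Usage with a real boto3 client (not run here):
#   sqs.set_queue_attributes(QueueUrl=queue_url, Attributes=retention_attributes(7 * 86400))
```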

Delay Message

Delay defaults to zero. You can set it up to 15 minutes.

When you set a delay, SQS hides the message for that duration before it becomes visible to consumers. Your backend won’t see it until the delay passes.

Why would you use this? Imagine a customer places an order. You might want to delay the confirmation message until after the payment clears on your backend. That way, the customer doesn’t get an email saying “order confirmed” before you actually charged their card.
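You can also set the delay per message at send time. This is a rough sketch assuming boto3 and a Standard queue (FIFO queues only support a queue-level delay). The `send_delayed` helper is hypothetical, and `DelaySeconds` is the parameter on `send_message`.

```python
MAX_DELAY_SECONDS = 15 * 60  # 900 seconds


def send_delayed(sqs, queue_url, body, delay_seconds):
    """Send a message that stays hidden from consumers for delay_seconds."""
    if not 0 <= delay_seconds <= MAX_DELAY_SECONDS:
        raise ValueError("delay must be between 0 and 900 seconds")
    return sqs.send_message(
        QueueUrl=queue_url,
        MessageBody=body,
        DelaySeconds=delay_seconds,
    )
```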

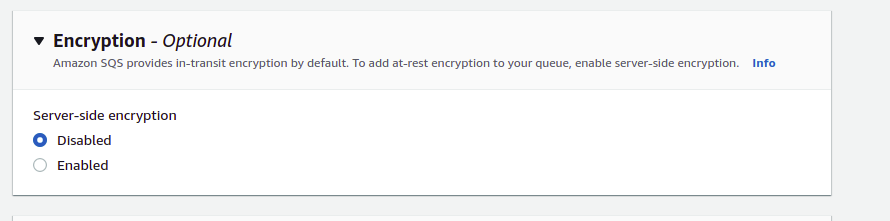

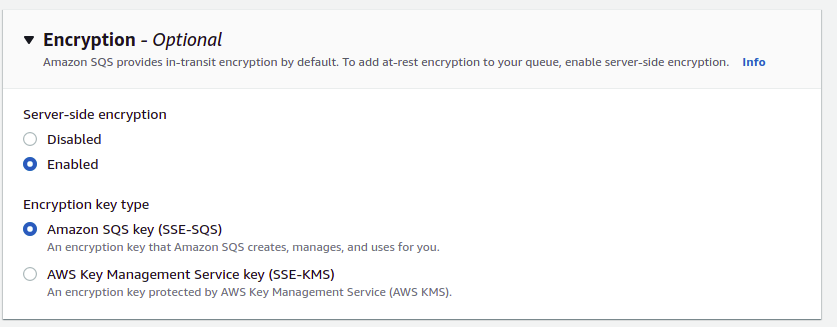

Encryption

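Messages are encrypted in transit when clients connect over HTTPS, which the AWS SDKs and CLI do by default. At-rest encryption is not guaranteed to be on: queues created before AWS made SQS-managed encryption (SSE-SQS) the default may still be unencrypted. Enable it by checking the server-side encryption box and, if you want to control the key, selecting a KMS key.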

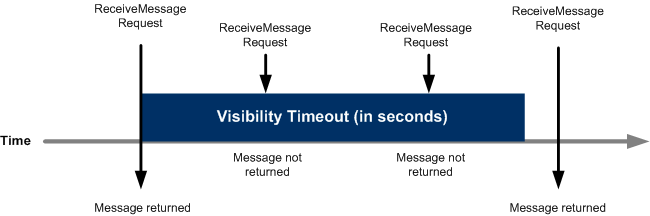
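The same setting can be applied from code as queue attributes. This sketch assumes boto3, and the `sse_attributes` helper is hypothetical. The attribute names `SqsManagedSseEnabled`, `KmsMasterKeyId`, and `KmsDataKeyReusePeriodSeconds` come from the SQS API.

```python
def sse_attributes(kms_key_id=None, data_key_reuse_seconds=300):
    """Build queue attributes that turn on server-side encryption.

    With no key ID, use SQS-managed keys (SSE-SQS); otherwise use the
    given KMS key (SSE-KMS).
    """
    if kms_key_id is None:
        return {"SqsManagedSseEnabled": "true"}
    return {
        "KmsMasterKeyId": kms_key_id,
        "KmsDataKeyReusePeriodSeconds": str(data_key_reuse_seconds),
    }


# Usage with a real boto3 client (not run here):
#   sqs.set_queue_attributes(QueueUrl=queue_url, Attributes=sse_attributes("alias/aws/sqs"))
```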

Visibility Timeout

This one trips people up. Here’s how it works.

When your backend retrieves a message, SQS doesn’t delete it automatically. SQS is a distributed system, and your backend might crash after receiving the message but before processing it. So SQS waits for your backend to explicitly delete the message.

To prevent other consumers from grabbing the same message, SQS applies a visibility timeout. During this window, the message stays hidden. Default is 30 seconds. Range is 0 seconds to 12 hours.

Let’s say you set visibility timeout to 60 seconds. Your backend retrieves a message. For the next 60 seconds, other servers polling the queue won’t see that message. If your backend crashes and never processes it, the message reappears after 60 seconds and another server can pick it up.

If your backend finishes processing in 55 seconds, it calls delete on the message and it’s gone from the queue.
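To make the timeline concrete, here is a toy in-memory model of the 60-second scenario. It is not the SQS API: `ToyQueue` is invented for illustration, time is passed in by hand, and messages are keyed by body where real SQS uses receipt handles.

```python
class ToyQueue:
    """In-memory stand-in for a queue with a visibility timeout (not the SQS API)."""

    def __init__(self, visibility_timeout):
        self.visibility_timeout = visibility_timeout
        self._visible_at = {}  # message body -> time at which it is visible again

    def send(self, body, now):
        self._visible_at[body] = now

    def receive(self, now):
        """Return one visible message and hide it for the timeout, or None."""
        for body, visible_at in self._visible_at.items():
            if visible_at <= now:
                self._visible_at[body] = now + self.visibility_timeout
                return body
        return None

    def delete(self, body):
        self._visible_at.pop(body, None)


queue = ToyQueue(visibility_timeout=60)
queue.send("order-1", now=0)

first = queue.receive(now=0)    # consumer A gets the message
hidden = queue.receive(now=30)  # consumer B sees nothing: still hidden
retry = queue.receive(now=60)   # A never deleted it, so B gets it now
queue.delete("order-1")         # B finishes and deletes it
gone = queue.receive(now=200)   # nothing left to deliver
```

Receiving a message only hides it. Only the explicit delete removes it for good.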

Long vs. Short Polling

When your backend retrieves messages, it uses either short polling or long polling. Default is short polling.

Short polling: your server connects, checks for messages, and gets a response immediately whether or not there are any. If the queue is empty, you’ve wasted an API call.

Long polling: your server sets WaitTimeSeconds greater than zero on the ReceiveMessage call. SQS holds the connection open until a message arrives or the timeout hits (max 20 seconds). You make fewer empty calls, so you pay less and your servers use less CPU.

For most workloads, long polling is the better choice. Short polling only makes sense when your consumer can’t afford to block on an empty queue, even for a few seconds.
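A long-polling consumer might look roughly like this, assuming boto3. The `poll_once` helper and its `handler` callback are hypothetical. `receive_message` and `delete_message`, with the parameters shown, are the boto3 SQS client calls.

```python
def poll_once(sqs, queue_url, handler, wait_seconds=20):
    """Long-poll the queue once, process each message, and delete it on success.

    Returns the number of messages handled.
    """
    response = sqs.receive_message(
        QueueUrl=queue_url,
        MaxNumberOfMessages=10,        # up to 10 messages per call
        WaitTimeSeconds=wait_seconds,  # > 0 enables long polling; 20 is the maximum
    )
    # The "Messages" key is absent when the wait ends with an empty queue.
    messages = response.get("Messages", [])
    for message in messages:
        handler(message["Body"])
        # Delete only after the handler returns without raising; otherwise the
        # message becomes visible again once the visibility timeout expires.
        sqs.delete_message(QueueUrl=queue_url, ReceiptHandle=message["ReceiptHandle"])
    return len(messages)
```

Call it in a loop from your worker. With `wait_seconds=0` the same function does short polling.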

Queue Depth

Queue depth tells you how many messages are waiting. You can monitor this with CloudWatch and set up Auto Scaling to add more backend servers when the queue builds up.

Check the CloudWatch SQS metrics documentation for everything available.
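You can also read the depth straight from the queue. The sketch below assumes boto3. `ApproximateNumberOfMessages` is the queue attribute behind the depth, and `desired_workers` is a made-up scaling rule to show the arithmetic, not how Auto Scaling itself decides.

```python
import math


def queue_depth(sqs, queue_url):
    """Return the approximate number of messages waiting in the queue."""
    response = sqs.get_queue_attributes(
        QueueUrl=queue_url,
        AttributeNames=["ApproximateNumberOfMessages"],
    )
    # Attribute values come back as strings.
    return int(response["Attributes"]["ApproximateNumberOfMessages"])


def desired_workers(depth, messages_per_worker, min_workers=1, max_workers=10):
    """Hypothetical rule: one worker per messages_per_worker of backlog, clamped."""
    needed = math.ceil(depth / messages_per_worker)
    return max(min_workers, min(max_workers, needed))
```

With a backlog of 250 messages and 100 messages per worker, this rule asks for 3 workers.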

Exam Tips

SQS shows up frequently on the AWS exam.

Key configurations to know

Know the numbers. Message size is 256KB. Retention ranges from 60 seconds to 14 days. Visibility timeout goes from 0 seconds to 12 hours. Delay maximum is 15 minutes. Long polling max wait is 20 seconds.

Troubleshooting scenarios

The exam will ask you why messages disappeared or why they reappeared. Most issues come from misconfigured visibility timeout. If your timeout is 10 seconds but your server takes 30 seconds to process messages, the lock releases before you’re done and another server grabs the message.
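If you can't raise the queue-level timeout, a consumer can ask for more time on a single message while it works. This is a sketch assuming boto3. The `extend_visibility` helper is hypothetical, and `change_message_visibility` is the client call.

```python
MAX_VISIBILITY_SECONDS = 12 * 60 * 60  # 12 hours


def extend_visibility(sqs, queue_url, receipt_handle, timeout_seconds):
    """Reset the visibility timeout for one in-flight message.

    The new timeout counts from the moment of this call; it is not added
    to whatever time was left.
    """
    if not 0 <= timeout_seconds <= MAX_VISIBILITY_SECONDS:
        raise ValueError("visibility timeout must be between 0 seconds and 12 hours")
    sqs.change_message_visibility(
        QueueUrl=queue_url,
        ReceiptHandle=receipt_handle,
        VisibilityTimeout=timeout_seconds,
    )
```

For the 10-second timeout and 30-second job above, the worker could call this right after receiving the message with a value comfortably over 30.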

Polling

Long polling is cheaper and more efficient. Short polling burns CPU and costs more. The exam prefers long polling answers.

Message size

Remember 256KB. Format doesn’t matter: JSON and plain text both work fine.

Next steps

Learn how Dead-Letter Queues work, and understand the difference between FIFO and Standard queues.

Best Practices

- Use VPC endpoints for private access to SQS.
- Create IAM roles for resources that need SQS access.
- Don’t make queues publicly accessible.
- Apply least-privilege access principles.
- Enable server-side encryption and verify it on every queue rather than relying on the default.
- Use TLS for data in transit.

Learn More

What is the advantage of using SQS FIFO?

How to use SQS Dead-Letter Queue?

What you should know about High Availability on AWS
