Amazon DynamoDB in 2026: Data Modeling, PartiQL, Zero-ETL, and Pricing

DynamoDB has been my go-to for event-driven, high-throughput workloads for years. The core design hasn’t changed: you still need to think hard about partition keys and access patterns before writing a single line of code. But several features added over the past few years are worth knowing about before you start a new project in 2026: PartiQL queries, zero-ETL integration with Redshift, the Standard-IA table class, and S3 import/export. This post covers the fundamentals and the new stuff.

What DynamoDB is (and what it isn’t)

DynamoDB is a fully managed NoSQL database. No servers to provision, no replication to configure, no maintenance windows. It scales capacity automatically and delivers single-digit millisecond latency at virtually any throughput level.

What it isn’t: a general-purpose database. You can’t do arbitrary JOINs. You can’t run ad-hoc queries against arbitrary columns without paying the price of a full-table Scan. If your access patterns are unpredictable or change frequently, you’ll spend a lot of time adding indexes or rethinking your key design.

All tables are encrypted at rest by default. You can use AWS-owned keys, AWS-managed KMS keys, or customer-managed keys — your choice based on compliance requirements.

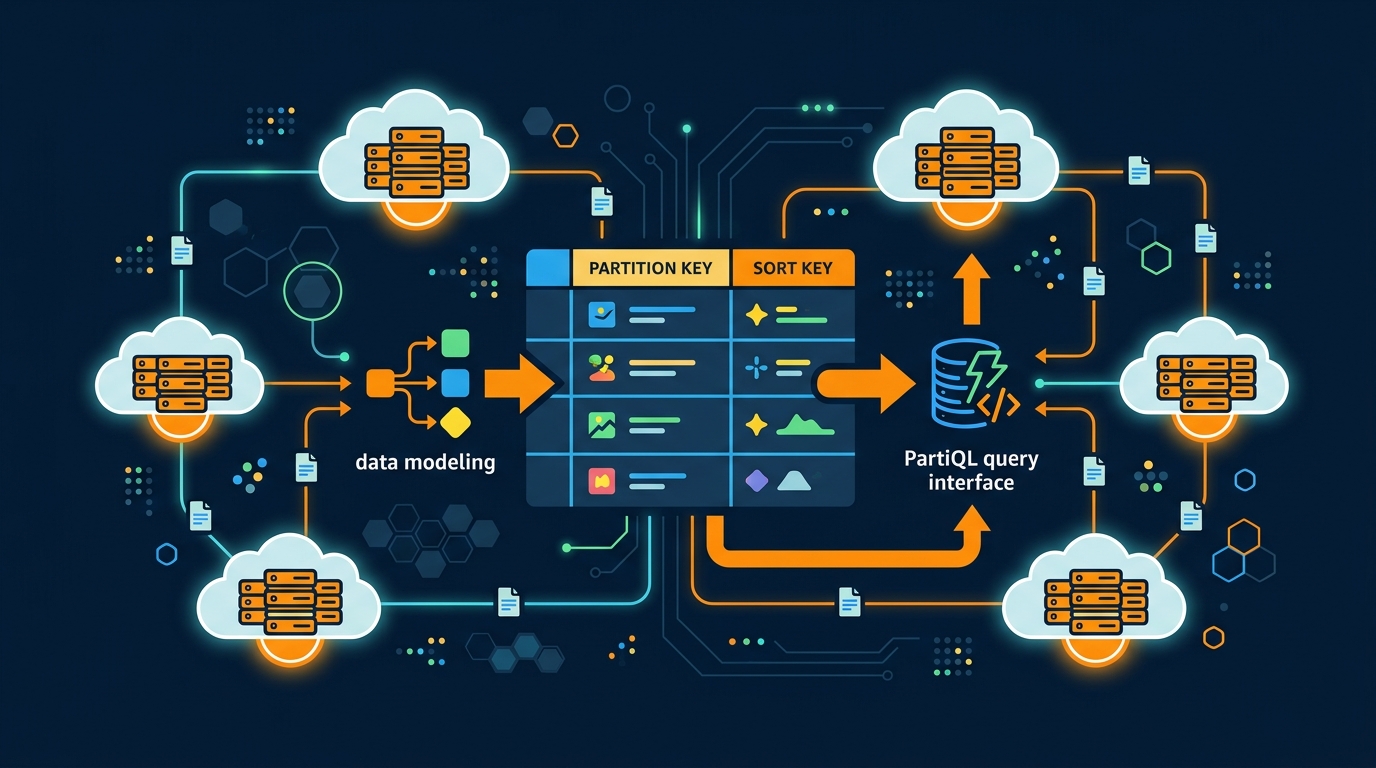

Data modeling in DynamoDB

This is where most teams go wrong. Relational thinking doesn’t transfer. In DynamoDB, you design your keys around your access patterns, not the other way around. The DynamoDB single-table design guide covers key overloading, GSI patterns, and the access-pattern-first methodology in depth.

Primary keys

Every item needs a primary key. A simple primary key is just a partition key. A composite primary key adds a sort key, which lets you query items within a partition in sorted order. Most production tables use composite keys.

Partition keys

DynamoDB hashes the partition key value to determine which physical partition stores the item. Items with the same partition key land on the same partition. Choose partition keys with high cardinality — many distinct values — to distribute traffic evenly across partitions. A hot partition (one key getting disproportionate traffic) will throttle requests even if you have capacity to spare elsewhere.

User IDs, order IDs, and session tokens make good partition keys. Status fields (“active”, “pending”, “closed”) make terrible ones.
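To make the cardinality point concrete, here is a small simulation. It is only a sketch: DynamoDB’s real hash function and partition count are internal, so SHA-256 and the eight partitions below are stand-ins chosen for illustration.

```python
import hashlib
from collections import Counter

def partition_for(key: str, num_partitions: int = 8) -> int:
    """Map a key to a partition index using a stand-in hash (SHA-256)."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_partitions

def spread(keys, num_partitions: int = 8) -> Counter:
    """Count how many requests land on each simulated partition."""
    return Counter(partition_for(k, num_partitions) for k in keys)

# 3,000 requests keyed by user ID versus 3,000 keyed by one of three statuses.
user_requests = [f"usr_{i}" for i in range(3000)]
status_requests = [("active", "pending", "closed")[i % 3] for i in range(3000)]

user_spread = spread(user_requests)
status_spread = spread(status_requests)
print("requests per partition, user IDs:", sorted(user_spread.values()))
print("requests per partition, statuses:", sorted(status_spread.values()))
```

The status-keyed traffic can never touch more than three partitions, no matter how many partitions exist, while the user-keyed traffic spreads across all of them.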

Sort keys

The sort key determines how items are ordered within a partition. You can query ranges: all orders for a customer between two dates, all messages in a thread after a certain timestamp, all log entries from a device in the last hour. Sorting only applies within a partition.
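A range query like “all log entries from a device in the last hour” might be expressed as follows. This is a sketch that only builds the request parameters; the DeviceLogs table and the deviceId and loggedAt attribute names are hypothetical, and it assumes the sort key stores ISO 8601 UTC strings, which sort lexicographically in time order.

```python
from datetime import datetime, timedelta, timezone

ISO_FORMAT = "%Y-%m-%dT%H:%M:%SZ"

def last_hour_query(device_id: str, now: datetime) -> dict:
    """Build Query parameters for one device's log entries from the past hour."""
    since = now - timedelta(hours=1)
    return {
        "TableName": "DeviceLogs",  # hypothetical table
        # Equality on the partition key, range condition on the sort key.
        "KeyConditionExpression": "deviceId = :d AND loggedAt BETWEEN :since AND :now",
        "ExpressionAttributeValues": {
            ":d": {"S": device_id},
            ":since": {"S": since.strftime(ISO_FORMAT)},
            ":now": {"S": now.strftime(ISO_FORMAT)},
        },
    }

params = last_hour_query("dev-42", datetime(2026, 4, 4, 9, 30, tzinfo=timezone.utc))
# With boto3 installed and credentials configured, this would be sent as:
#   boto3.client("dynamodb").query(**params)
```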

Write your queries first. Then design your keys to support those queries. This isn’t optional — it’s the only way to model data in DynamoDB correctly.

A practical example: e-commerce sessions

Here’s a more realistic example than the textbook Books table. An e-commerce session table:

    {
      "customerId": {"S": "usr_7k2mxp"},
      "sessionId": {"S": "sess_2026-04-04T09:15:00Z"},
      "cartItems": {"L": [
        {"M": {"sku": {"S": "SHOE-001"}, "qty": {"N": "2"}, "price": {"N": "89.99"}}}
      ]},
      "lastActivity": {"S": "2026-04-04T09:22:41Z"},
      "expiresAt": {"N": "1775380961"}
    }

Partition key: customerId. Sort key: sessionId, which embeds an ISO 8601 timestamp after the sess_ prefix so sessions sort chronologically. The expiresAt attribute drives TTL, so expired sessions are deleted automatically. One Query with ScanIndexForward set to false fetches all sessions for a customer in reverse chronological order, which is exactly what the checkout flow needs.
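The “newest sessions first” query could look roughly like this. The sketch builds the parameters for the low-level Query API; the Sessions table name is taken from the PartiQL example later in this post, and the limit of 10 is an arbitrary choice.

```python
def latest_sessions_query(customer_id: str, limit: int = 10) -> dict:
    """Build Query parameters that return a customer's sessions, newest first."""
    return {
        "TableName": "Sessions",
        "KeyConditionExpression": "customerId = :cid",
        "ExpressionAttributeValues": {":cid": {"S": customer_id}},
        # False reverses the sort-key order, so the latest sessionId comes first.
        "ScanIndexForward": False,
        "Limit": limit,
    }

params = latest_sessions_query("usr_7k2mxp")
# With boto3 installed and credentials configured:
#   boto3.client("dynamodb").query(**params)
```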

Secondary indexes

Secondary indexes let you query on attributes other than the primary key. You have two options.

Local Secondary Indexes (LSI)

LSIs share the partition key with the main table but use a different sort key. They can only query within a single partition. You define them at table creation time and can’t add or remove them later. Most new designs skip LSIs entirely and go straight to GSIs.

Global Secondary Indexes (GSI)

GSIs have their own partition and sort keys, completely independent from the base table. They can query across all partitions, which makes them far more flexible. GSI reads are always eventually consistent. If you need strong consistency on a GSI, you don’t get it: only the base table and LSIs support strongly consistent reads.

Each GSI is essentially a separate DynamoDB table that AWS maintains for you. You pay for the storage and the write capacity consumed replicating data to the index.

The default quota is 20 GSIs per table, which is more than enough for most designs. If you find yourself needing more, that’s a signal your data model needs rethinking.
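As an illustration, a table definition with one GSI might be declared like this. The sketch builds CreateTable parameters only; the warehouseId attribute, the index name, and the choice of a KEYS_ONLY projection are assumptions made for the example, not part of the tables discussed above.

```python
def orders_table_definition() -> dict:
    """Build CreateTable parameters for an Orders table with one GSI."""
    return {
        "TableName": "Orders",
        "BillingMode": "PAY_PER_REQUEST",
        # Only attributes used in a key schema are declared here.
        "AttributeDefinitions": [
            {"AttributeName": "customerId", "AttributeType": "S"},
            {"AttributeName": "orderId", "AttributeType": "S"},
            {"AttributeName": "warehouseId", "AttributeType": "S"},  # hypothetical
            {"AttributeName": "orderDate", "AttributeType": "S"},
        ],
        "KeySchema": [
            {"AttributeName": "customerId", "KeyType": "HASH"},
            {"AttributeName": "orderId", "KeyType": "RANGE"},
        ],
        "GlobalSecondaryIndexes": [
            {
                "IndexName": "byWarehouseAndDate",  # hypothetical index name
                "KeySchema": [
                    {"AttributeName": "warehouseId", "KeyType": "HASH"},
                    {"AttributeName": "orderDate", "KeyType": "RANGE"},
                ],
                # Projecting only keys keeps index storage and write cost down.
                "Projection": {"ProjectionType": "KEYS_ONLY"},
            }
        ],
    }

definition = orders_table_definition()
# With boto3 installed and credentials configured:
#   boto3.client("dynamodb").create_table(**definition)
```

Projecting only the keys is the cheapest option; switch to INCLUDE or ALL if the queries against the index need more attributes without a second lookup on the base table.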

Avoid Scan — if you’re scanning a production table, your data model is wrong. Every Scan reads every item, then filters. At scale, this consumes enormous capacity, drives up costs, and hurts latency. Use Query with a partition key, always.

PartiQL: SQL syntax for DynamoDB

DynamoDB added support for PartiQL, a SQL-compatible query language. You can write SELECT, INSERT, UPDATE, and DELETE statements against DynamoDB tables using familiar syntax:

    -- Find all orders for a customer since the start of 2026
    SELECT * FROM Orders
    WHERE customerId = 'C123'
    AND orderDate > '2026-01-01'

    -- Insert a new item
    INSERT INTO Sessions VALUE {
      'customerId': 'usr_7k2mxp',
      'sessionId': 'sess_2026-04-04T10:00:00Z',
      'lastActivity': '2026-04-04T10:00:00Z'
    }

    -- Update a specific attribute
    UPDATE Orders
    SET status = 'shipped'
    WHERE customerId = 'C123' AND orderId = 'ORD-9981'

Access it via the AWS Console query editor, the CLI (aws dynamodb execute-statement), or any SDK using the ExecuteStatement API.

Important: PartiQL is syntactic sugar over DynamoDB’s existing Query and Scan APIs. It does not unlock relational capabilities. You cannot JOIN tables. You cannot query arbitrary columns without a supporting index — that just becomes a Scan under the hood. The same access pattern constraints apply. What PartiQL gives you is a familiar syntax for teams coming from SQL backgrounds, and a cleaner way to write one-off queries in the console during debugging.

For transactional writes, ExecuteTransaction lets you batch multiple PartiQL statements into a single atomic operation.
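From an SDK, the same statements are usually sent with `?` placeholders and a parameter list rather than inlined literals. The sketch below only builds the request payloads for ExecuteStatement and ExecuteTransaction; the Shipments table and the fulfillmentState and carrier attributes are hypothetical names introduced for the example.

```python
def select_orders_since(customer_id: str, since_date: str) -> dict:
    """Build ExecuteStatement parameters; placeholders avoid string concatenation."""
    return {
        "Statement": 'SELECT * FROM "Orders" WHERE customerId = ? AND orderDate > ?',
        "Parameters": [{"S": customer_id}, {"S": since_date}],
    }

def ship_order_transaction(customer_id: str, order_id: str, carrier: str) -> dict:
    """Build ExecuteTransaction parameters: both statements succeed or neither does."""
    return {
        "TransactStatements": [
            {
                "Statement": (
                    'UPDATE "Orders" SET fulfillmentState = ? '
                    "WHERE customerId = ? AND orderId = ?"
                ),
                "Parameters": [{"S": "shipped"}, {"S": customer_id}, {"S": order_id}],
            },
            {
                "Statement": "INSERT INTO \"Shipments\" VALUE {'orderId': ?, 'carrier': ?}",
                "Parameters": [{"S": order_id}, {"S": carrier}],
            },
        ]
    }

select_params = select_orders_since("C123", "2026-01-01")
txn_params = ship_order_transaction("C123", "ORD-9981", "UPS")
# With boto3 installed and credentials configured:
#   client = boto3.client("dynamodb")
#   client.execute_statement(**select_params)
#   client.execute_transaction(**txn_params)
```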

Zero-ETL integration with Amazon Redshift

This is one of the more practically useful features added in recent releases. DynamoDB zero-ETL integration with Redshift lets you run analytics on your DynamoDB data without building or maintaining an ETL pipeline.

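You set up the integration from the DynamoDB console or via the API, and point-in-time recovery (PITR) must be enabled on the source table because the integration is built on DynamoDB exports. After an initial full load, changes are replicated to Redshift incrementally, typically every 15 to 30 minutes rather than in real time. In Redshift, the data lands in a table you can query with standard SQL, join against other data sources, and run BI tools against.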

The practical pattern: your application writes operational data to DynamoDB (fast, at scale, single-digit millisecond latency). Your analytics team queries that same data from Redshift (complex aggregations, multi-table joins, historical trend analysis). Same data, two different query engines, no pipeline to maintain.

Before this existed, the standard approach was DynamoDB Streams → Lambda → S3 → Redshift Spectrum, or some variation of it. That works, but it’s infrastructure to build, monitor, and keep running. Zero-ETL removes most of that.

Be aware: the integration has a cost. On the DynamoDB side you pay for PITR and the exports that feed the integration; on the Redshift side you pay for storage and compute. For large, high-write tables, profile the cost before enabling it in production.

DynamoDB table classes

Two table classes let you optimize storage costs based on access frequency.

Standard (default): Optimized for frequently accessed data. Lower per-request cost, higher storage cost. Use this for active operational tables.

Standard-IA (Infrequent Access): 60% lower storage cost, roughly 25% higher per-request cost. Designed for data you access less than once a month — audit logs, historical records, compliance archives, cold data you’re legally required to retain but rarely touch.

You switch table classes with zero downtime, no data migration required. It’s a metadata change. If your storage bill is dominated by a large table of rarely-accessed records, Standard-IA is an easy win. At storage pricing of $0.10/GB-month for Standard-IA versus $0.25/GB-month for Standard, a 10 TB (10,000 GB) archive table saves $1,500/month in storage, before accounting for the higher per-request cost.
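Whether Standard-IA wins depends on how the storage saving compares with the request surcharge. Here is a rough calculator using the figures quoted in this post ($0.25 and $0.10 per GB-month, and a 25% request premium taken from the “roughly 25%” above); treat the constants as assumptions to replace with current pricing.

```python
STANDARD_PER_GB_MONTH = 0.25      # figure quoted in this post
STANDARD_IA_PER_GB_MONTH = 0.10   # figure quoted in this post
IA_REQUEST_PREMIUM = 0.25         # "roughly 25% higher" per-request cost

def storage_savings(size_gb: float) -> float:
    """Monthly storage saved by moving a table of size_gb to Standard-IA."""
    return round(size_gb * (STANDARD_PER_GB_MONTH - STANDARD_IA_PER_GB_MONTH), 2)

def net_savings(size_gb: float, standard_request_cost: float) -> float:
    """Storage saving minus the extra request cost Standard-IA would add."""
    extra_requests = standard_request_cost * IA_REQUEST_PREMIUM
    return round(storage_savings(size_gb) - extra_requests, 2)

# A 10 TB archive (counted as 10,000 GB) with $1,000/month of request charges.
print(storage_savings(10_000))
print(net_savings(10_000, 1_000))
```

A negative result from `net_savings` means the table is read or written often enough that Standard is the cheaper class.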

Export to S3 and Import from S3

DynamoDB can export an entire table to S3 without consuming read capacity units. The export captures a consistent snapshot at a point in time using the Point-in-Time Recovery (PITR) mechanism, so PITR must be enabled on the table. Output format is DynamoDB JSON or Amazon Ion.

Common use cases: feeding a data lake, long-term archival outside DynamoDB, creating a snapshot before a risky migration.

Import from S3 goes the other direction: create a new DynamoDB table from data stored in S3. The source can be DynamoDB JSON, Amazon Ion, or CSV. This is useful for restoring from an S3 archive, migrating between accounts or regions, or seeding a new environment from a production snapshot.

Exports appear in the DynamoDB console under the table’s “Exports and streams” tab; imports, which always create a new table, are started from the console’s separate “Imports from S3” page. The CLI equivalents are aws dynamodb export-table-to-point-in-time and aws dynamodb import-table.
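For scripted exports, the SDK call mirrors the CLI command. This sketch builds the parameters for ExportTableToPointInTime; the account ID, bucket name, and prefix are placeholders, and it assumes PITR is already enabled on the table.

```python
def export_request(table_arn: str, bucket: str, prefix: str, fmt: str = "DYNAMODB_JSON") -> dict:
    """Build ExportTableToPointInTime parameters for a full-table export."""
    if fmt not in ("DYNAMODB_JSON", "ION"):
        raise ValueError("export format must be DYNAMODB_JSON or ION")
    return {
        "TableArn": table_arn,
        "S3Bucket": bucket,
        "S3Prefix": prefix,
        "ExportFormat": fmt,
    }

params = export_request(
    "arn:aws:dynamodb:us-east-1:123456789012:table/Sessions",  # placeholder ARN
    "example-ddb-exports",                                     # placeholder bucket
    "sessions/2026-04-04/",
)
# With boto3 installed and credentials configured:
#   boto3.client("dynamodb").export_table_to_point_in_time(**params)
```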

Capacity modes and provisioned throughput

DynamoDB has two billing modes: provisioned and on-demand.

Provisioned capacity: you specify the number of read capacity units (RCUs) and write capacity units (WCUs) per second. One RCU handles one strongly consistent read per second for items up to 4 KB, or two eventually consistent reads. One WCU handles one write per second for items up to 1 KB. You can enable auto scaling to adjust capacity automatically based on utilization targets.
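The capacity-unit rules above translate into a short sizing helper. This is a back-of-the-envelope sketch: it rounds each item up to the next 4 KB (reads) or 1 KB (writes) and ignores transactional operations, which are billed differently.

```python
import math

def read_capacity_units(item_bytes: int, reads_per_second: float,
                        strongly_consistent: bool = True) -> int:
    """RCUs needed: one per 4 KB per strongly consistent read, half that otherwise."""
    units_per_read = max(1, math.ceil(item_bytes / 4096))
    total = units_per_read * reads_per_second
    if not strongly_consistent:
        total /= 2
    return math.ceil(total)

def write_capacity_units(item_bytes: int, writes_per_second: float) -> int:
    """WCUs needed: one per 1 KB per write."""
    units_per_write = max(1, math.ceil(item_bytes / 1024))
    return math.ceil(units_per_write * writes_per_second)

# 6,000-byte items: 100 eventually consistent reads/s and 10 writes/s.
rcus = read_capacity_units(6000, 100, strongly_consistent=False)
wcus = write_capacity_units(6000, 10)
print(rcus, wcus)
```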

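On-demand: no capacity planning. DynamoDB accommodates traffic as it arrives, and you pay per request rather than per reserved capacity. It is not unlimited, though: a table whose traffic suddenly jumps to more than roughly double its previous peak can still be throttled while DynamoDB adds capacity.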

Provisioned with auto scaling is typically 60–70% cheaper than on-demand at steady, predictable traffic levels. On-demand is better for spiky, unpredictable workloads or tables you’re not sure how to size yet. Starting with on-demand and switching to provisioned once you understand your traffic pattern is a reasonable approach.

If you underprovision, requests throttle. Those throttled requests either fail (if your SDK retry budget is exhausted) or increase latency from retries. CloudWatch Contributor Insights is the right tool for finding hot partition keys that cause uneven throttling even when overall capacity looks sufficient.
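The AWS SDKs already retry throttled requests, but it helps to see what that retry budget looks like. Below is a generic sketch of exponential backoff with full jitter; ThrottledError is a hypothetical stand-in for whatever throttling exception your SDK raises, and the delay values are arbitrary.

```python
import random
import time

class ThrottledError(Exception):
    """Hypothetical stand-in for a throttling error surfaced by an SDK."""

def call_with_backoff(operation, max_attempts: int = 5, base_delay: float = 0.05,
                      max_delay: float = 2.0, sleep=time.sleep):
    """Call operation(), retrying throttles with exponential backoff and jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except ThrottledError:
            if attempt == max_attempts:
                raise  # retry budget exhausted: the caller sees the failure
            # Double the ceiling on each attempt, then pick a random delay below it.
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, ceiling))
```

Each retry adds latency, which is why sustained throttling shows up as slow requests long before it shows up as errors.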

TTL, global tables, and consistency

Time-to-Live (TTL): designate a numeric attribute as the TTL attribute. DynamoDB reads it as a Unix epoch timestamp in seconds and deletes the item after that time. Session data, temporary tokens, rate-limiting windows, and cached computations all benefit from TTL. Deletion is not instant: expired items are typically removed within a few days of expiration, so filter them out at read time if serving a stale item would matter.
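Because expired items can linger until DynamoDB gets around to deleting them, it is common to compute the TTL value on write and check it again on read. A minimal sketch, assuming the expiresAt attribute from the session example and a 24-hour lifetime chosen for illustration:

```python
from datetime import datetime, timedelta, timezone

def expires_at(now: datetime, lifetime: timedelta) -> int:
    """Return the TTL value: Unix epoch seconds at which the item should expire."""
    return int((now + lifetime).timestamp())

def is_live(item: dict, now: datetime) -> bool:
    """True if the item's TTL is still in the future (DynamoDB JSON format)."""
    return int(item["expiresAt"]["N"]) > int(now.timestamp())

now = datetime(2026, 4, 4, 9, 22, 41, tzinfo=timezone.utc)
session = {
    "customerId": {"S": "usr_7k2mxp"},
    "expiresAt": {"N": str(expires_at(now, timedelta(hours=24)))},
}
print(is_live(session, now))                       # checked right after writing
print(is_live(session, now + timedelta(days=2)))   # checked two days later
```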

Global tables: multi-region active-active replication. Writes go to any region and replicate to all others, typically within a second. Last-writer-wins conflict resolution. Useful for disaster recovery and for serving low-latency writes to users in multiple geographic regions.

Consistency: strongly consistent reads guarantee you see the most recent write. Eventually consistent reads (the default) might return stale data for a brief window. Strongly consistent reads consume twice the RCU capacity. Use eventually consistent reads wherever staleness is acceptable — it’s usually fine for reads that don’t follow an immediate write from the same user.

Monitoring

Key CloudWatch metrics to watch:

- ConsumedReadCapacityUnits/ConsumedWriteCapacityUnits: actual usage against provisioned capacity

- ReadThrottleEvents/WriteThrottleEvents: throttled requests, which means you’re over capacity

- SuccessfulRequestLatency: p50, p90, p99 latency by operation type

- SystemErrors: DynamoDB-side failures (rare, but set an alarm)

Enable Contributor Insights on tables where you suspect hot partitions. It shows you the top partition keys by request volume, which is invaluable for diagnosing uneven traffic distribution.

Set CloudWatch alarms on WriteThrottleEvents and ReadThrottleEvents before you launch anything to production. Throttling in DynamoDB is silent from the user perspective unless your retry logic surfaces it.
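A throttle alarm can be defined in a few lines. The sketch below builds PutMetricAlarm parameters; the five one-minute evaluation periods and the zero threshold are assumptions to tune, and no notification target is attached.

```python
def throttle_alarm(table_name: str, metric_name: str = "WriteThrottleEvents") -> dict:
    """Build PutMetricAlarm parameters that fire on any sustained throttling."""
    return {
        "AlarmName": f"{table_name}-{metric_name}",
        "Namespace": "AWS/DynamoDB",
        "MetricName": metric_name,
        "Dimensions": [{"Name": "TableName", "Value": table_name}],
        "Statistic": "Sum",
        "Period": 60,               # seconds per datapoint
        "EvaluationPeriods": 5,     # alarm after five consecutive breaching minutes
        "Threshold": 0,
        "ComparisonOperator": "GreaterThanThreshold",
        # No datapoints means no throttling, so treat gaps as healthy.
        "TreatMissingData": "notBreaching",
    }

alarms = [throttle_alarm("Sessions", m) for m in ("WriteThrottleEvents", "ReadThrottleEvents")]
# With boto3 installed and credentials configured:
#   cloudwatch = boto3.client("cloudwatch")
#   for alarm in alarms:
#       cloudwatch.put_metric_alarm(**alarm)
```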

Security

DynamoDB encrypts all data at rest by default and all data in transit over TLS. Use IAM policies to restrict access to specific tables and operations. For fine-grained control, IAM condition keys (dynamodb:LeadingKeys) let you restrict access based on partition key values — useful for multi-tenant tables where each tenant should only access their own items.

VPC endpoints keep DynamoDB traffic within your AWS network. Point-in-time recovery (PITR) lets you restore a table to any second within the last 35 days. On-demand backups take a full copy of a table and keep it until you delete it, which suits long-term archival.

Don’t skip IAM least-privilege on DynamoDB. The default tendency is to grant full DynamoDB access to application roles. That’s fine in development. In production, scope access to specific table ARNs and the specific operations your application actually needs.
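A least-privilege policy for a multi-tenant table might look like the following. It is a sketch, not a drop-in policy: the action list is an example, and it assumes each caller’s role session carries a principal tag named tenantId (a hypothetical name) that matches the partition key value.

```python
import json

def tenant_scoped_policy(table_arn: str) -> dict:
    """Build an IAM policy limiting a role to its own tenant's items in one table."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                # Only the operations the application actually needs.
                "Action": [
                    "dynamodb:GetItem",
                    "dynamodb:Query",
                    "dynamodb:PutItem",
                    "dynamodb:UpdateItem",
                ],
                "Resource": [table_arn],
                "Condition": {
                    # Every partition key touched must equal the caller's tenant tag.
                    "ForAllValues:StringEquals": {
                        "dynamodb:LeadingKeys": ["${aws:PrincipalTag/tenantId}"]
                    }
                },
            }
        ],
    }

policy = tenant_scoped_policy("arn:aws:dynamodb:us-east-1:123456789012:table/Sessions")
print(json.dumps(policy, indent=2))
```

Note what is absent: no Scan, no DeleteItem, no wildcard resource. Add actions only when the application needs them.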

Pricing (us-east-1, 2026)

On-demand pricing:

| Resource | Price |
|---|---|
| Write request unit | $1.25 per million |
| Read request unit | $0.25 per million |
| Storage (Standard) | $0.25 per GB-month |
| Storage (Standard-IA) | $0.10 per GB-month |

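Quick math for a mid-size application, assuming a 30-day month, writes of up to 1 KB, and strongly consistent reads of up to 4 KB (one request unit each): 10 million writes/day plus 50 million reads/day equals 300 million WRUs and 1.5 billion RRUs per month. That’s $375 in write costs plus $375 in read costs, or $750/month on-demand before storage.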
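The same arithmetic as a reusable function, so you can plug in your own traffic. The default prices are the per-million figures from the table above and the 30-day month is an assumption; pass current prices for your region when estimating for real.

```python
def on_demand_monthly_cost(writes_per_day: float, reads_per_day: float, days: int = 30,
                           write_price_per_million: float = 1.25,
                           read_price_per_million: float = 0.25):
    """Return (write_cost, read_cost, total) in dollars, one request unit per request."""
    write_units = writes_per_day * days
    read_units = reads_per_day * days
    write_cost = write_units / 1_000_000 * write_price_per_million
    read_cost = read_units / 1_000_000 * read_price_per_million
    return write_cost, read_cost, write_cost + read_cost

write_cost, read_cost, total = on_demand_monthly_cost(10_000_000, 50_000_000)
print(f"writes ${write_cost:,.2f} + reads ${read_cost:,.2f} = ${total:,.2f}")
```

Eventually consistent reads consume half a request unit, so a read-heavy workload that tolerates staleness would come in below this estimate.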

At that traffic level, provisioned capacity with auto scaling would be roughly $200–250/month, a 65–70% reduction. The break-even point for switching from on-demand to provisioned is usually around 1–2 million requests/day of sustained, predictable traffic.

Don’t forget GSI costs. Each write to a table generates a write to every GSI that projects the changed attributes. A table with five GSIs effectively multiplies your write cost by up to six. Design GSIs carefully and only project the attributes you actually query.

Storage is billed on top of the request costs above: $0.10/GB-month for Standard-IA versus $0.25/GB-month for Standard. If you have large tables of infrequently accessed data, Standard-IA can cut your storage bill significantly with no performance impact, in exchange for the higher per-request price.

Summary

DynamoDB remains the right database for high-throughput, event-driven workloads where you know your access patterns and need consistent latency at scale. The fundamentals haven’t changed: design your keys around your queries, avoid Scan in production, and size capacity appropriately for your traffic pattern.

The additions worth adopting in 2026:

- PartiQL for more readable queries in the console and for teams comfortable with SQL syntax — just remember it doesn’t change the underlying access pattern constraints

- Zero-ETL to Redshift if you need analytics on operational DynamoDB data without building an ETL pipeline

- Standard-IA for archive tables and cold data — easy cost savings with zero migration effort

- Export/Import via S3 for migrations, seeding environments, and long-term archival outside DynamoDB
