Amazon ElastiCache in 2026: Redis OSS, Valkey, and Serverless

Amazon ElastiCache has changed more in the past two years than in the previous five: a Redis licensing change, a new open-source fork, a serverless tier that actually works, and a generation of Graviton3 nodes that beat the previous generation on both price and performance. If your mental model of ElastiCache is from a blog post written before 2024, it’s probably out of date.

This post covers where things stand in 2026: what changed, how to choose between the engine options, when ElastiCache Serverless makes financial sense, and real configuration patterns for the two most common use cases.

The Redis licensing drama and why Valkey exists

In March 2024, Redis Ltd changed the license for Redis 7.4 and later from BSD to the Server Side Public License (SSPL) and the Redis Source Available License (RSALv2). The SSPL is designed to prevent cloud providers from offering Redis as a managed service without contributing back or paying a license fee. AWS, Google, and Oracle were the obvious targets.

AWS’s response was to become a founding member of the Valkey project under the Linux Foundation. Valkey forked from Redis 7.2.4 (the last BSD-licensed release) in April 2024. The Linux Foundation maintains it as a true open-source project. Valkey 7.2 was followed by Valkey 8.0 in late 2024, which brought performance improvements over both the original Redis 7.2 fork and Redis 8.0.

What this means for ElastiCache: AWS now offers three engine options.

- Valkey — The recommended path for new workloads. Open-source under BSD, actively developed, fully API-compatible with Redis 7.2.

- Redis OSS: Available up to version 7.1 on ElastiCache, which predates the license change and is still BSD-licensed. No longer receiving active development from AWS. Existing clusters keep running, but this is a maintenance track.

- Memcached — Still supported, still the right choice for specific workloads (more on this later).

AWS has been clear in its documentation: for new clusters, use Valkey unless you have a specific reason not to.

Valkey vs Redis OSS on ElastiCache

For most applications, moving from Redis OSS to Valkey is transparent. The client API is identical — the same RESP protocol, the same commands, the same data structures. Your redis-py, Jedis, or ioredis client connects to a Valkey cluster without modification.
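To make "the same RESP protocol" concrete, here is a minimal sketch of how a client frames a command on the wire. The function name `encode_resp_command` is made up for illustration; real clients such as redis-py do this framing internally. The bytes are the same whether the server on the other end is Redis OSS or Valkey.

```python
def encode_resp_command(*parts: str) -> bytes:
    """Encode a command as a RESP array of bulk strings."""
    # Array header: '*' followed by the number of elements.
    chunks = [f"*{len(parts)}\r\n".encode()]
    for part in parts:
        data = part.encode("utf-8")
        # Each element is a bulk string: '$<byte length>\r\n<bytes>\r\n'.
        chunks.append(b"$" + str(len(data)).encode() + b"\r\n" + data + b"\r\n")
    return b"".join(chunks)


if __name__ == "__main__":
    print(encode_resp_command("SET", "k", "v"))
```

Because compatibility lives at this level, switching engines is an endpoint change rather than a client change.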

The differences that actually matter in practice:

Valkey 8.0 introduced I/O threading improvements that increase throughput on CPU-bound workloads. Benchmarks from AWS show roughly 20-30% higher ops/sec on GET/SET heavy workloads compared to Redis 7.0 on equivalent hardware. For most applications this headroom rarely gets used, but it matters for high-throughput leaderboards, session stores, and rate limiters.

There is no pricing difference between Valkey and Redis OSS at the node level. You pay for the instance type, not the engine. Where Valkey has an advantage is in future node generation support — AWS will prioritize Valkey for new hardware generations, so Redis OSS clusters are likely to stay on current node generations longer.

Running Redis OSS 7.1 carries no immediate legal risk to you as an AWS customer — ElastiCache handles the infrastructure. But the long-term development of Redis OSS under the new license is less certain than Valkey, which has AWS, Google, Ericsson, and others actively contributing.

For new projects, use Valkey. For existing Redis clusters, there is no urgency to migrate this quarter, but it belongs on your roadmap.

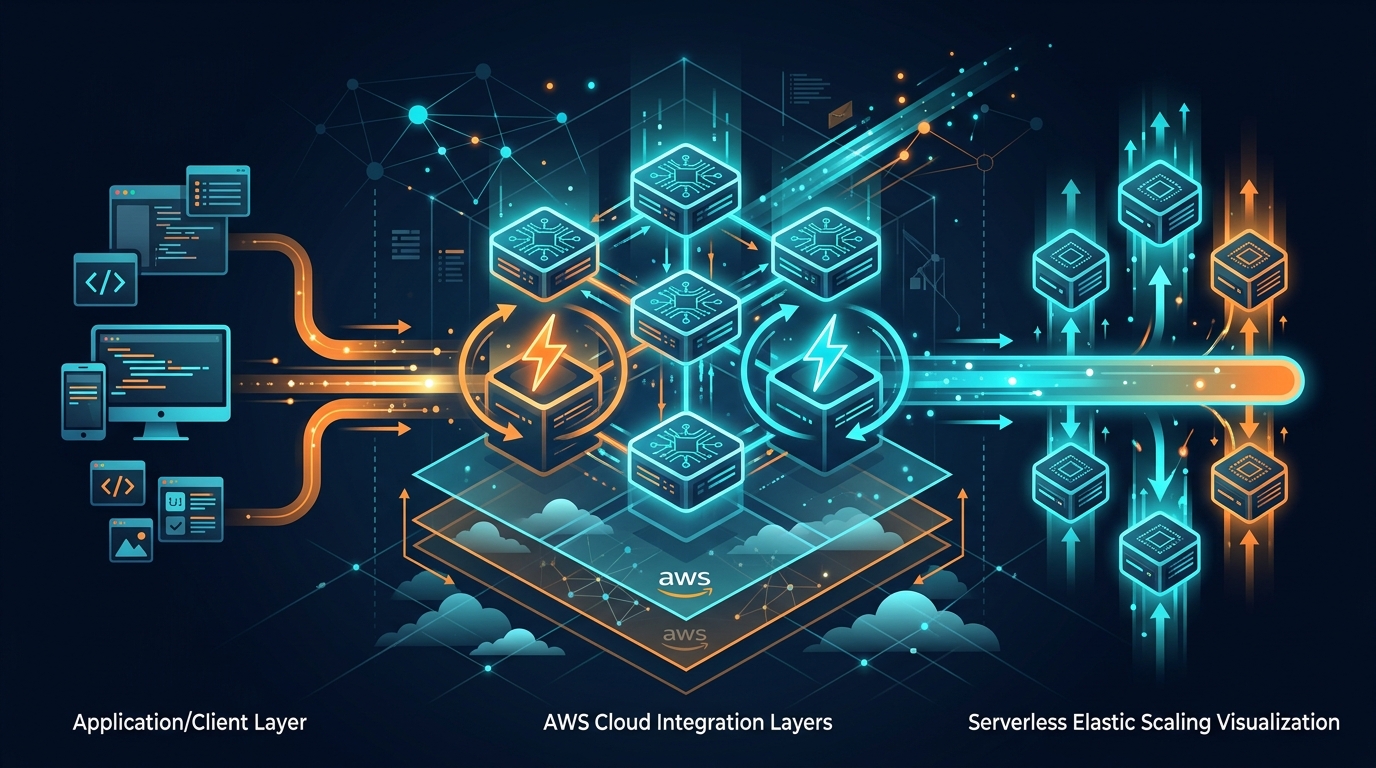

ElastiCache Serverless

ElastiCache Serverless launched at re:Invent 2023 and covers Valkey, Redis OSS, and Memcached. The pitch: no cluster sizing, no node selection, capacity scales automatically, you pay per ElastiCache Processing Unit (ECPU) consumed and per GB of data stored.

One ECPU is roughly equivalent to a single GET or SET operation on a simple key. More complex operations — ZADD with a large sorted set, EVAL scripts, transactions — consume more ECPUs proportionally.

Pricing (us-east-1, April 2026):

- $0.0034 per ECPU

- $0.125 per GB-hour of data stored

Serverless is cost-effective when your cache has spiky or unpredictable traffic. If you are running a workload that peaks at 10x the average load for a few hours a day, a provisioned cluster sized for the peak sits idle most of the time. You pay for reserved capacity whether you use it or not.

Consider a workload averaging 5,000 ops/sec with 10GB of data but spiking to 40,000 ops/sec for 4 hours on weekdays. With provisioned nodes, you need a cluster sized for 40K ops/sec — probably 3x cache.r7g.large nodes at ~$0.166/hr each = $0.498/hr, or roughly $360/month. With Serverless at 5,000 ops/sec average (8.64M ops/day × 30 days = ~260M ECPUs/month) plus 10GB storage:

- ECPUs: 260M × $0.0034 = $884/month

- Storage: 10 × 720 hours × $0.125 = $900/month

- Total: ~$1,784/month

That is notably worse than provisioned for steady-state workloads. The math shifts when the workload is genuinely bursty and the provisioned baseline you would otherwise pay for is large.
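The comparison above generalizes to a small helper. This is a sketch, not an official calculator: the function and parameter names are made up for illustration. The ECPU rate is passed together with the number of ECPUs that rate covers, because the quoting unit changes the result dramatically. Confirm both values, and the GB-hour rate, against the current AWS pricing page for your region and engine.

```python
def serverless_monthly_cost(
    monthly_ecpus: float,
    ecpu_rate: float,
    ecpus_per_rate_unit: float,
    stored_gb: float,
    gb_hour_rate: float,
    hours_per_month: float = 720,
) -> dict:
    """Split a Serverless bill into its ECPU and storage terms (USD)."""
    # ECPU term: number of rate units consumed times the price per unit.
    ecpu_cost = monthly_ecpus / ecpus_per_rate_unit * ecpu_rate
    # Storage term: billed per GB for every hour the data is held.
    storage_cost = stored_gb * hours_per_month * gb_hour_rate
    return {
        "ecpus": ecpu_cost,
        "storage": storage_cost,
        "total": ecpu_cost + storage_cost,
    }


def provisioned_monthly_cost(
    nodes: int, hourly_rate: float, hours_per_month: float = 720
) -> float:
    """On-demand cost of a fixed number of identical nodes (USD)."""
    return nodes * hourly_rate * hours_per_month
```

For the example above, `stored_gb=10` and `gb_hour_rate=0.125` give a storage term of $900 for a 720-hour month, and `provisioned_monthly_cost(3, 0.166)` comes to about $358.56. Keeping the two Serverless terms separate shows which one dominates for a given workload.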

Any workload with sustained high throughput — above roughly 2,000-3,000 ops/sec continuously — will be cheaper on provisioned nodes. The per-ECPU model does not reward consistency. A cache.r7g.large reserved for 1 year in us-east-1 costs about $58/month and handles well over 100,000 ops/sec for typical key sizes.

I’d use Serverless for dev/staging environments where you want zero capacity planning, applications with genuinely unpredictable traffic (early-stage products, event-driven workloads), and cases where the operational simplicity justifies a higher monthly bill. Outside those scenarios, the numbers usually don’t work out.

Memcached: still worth knowing about

Memcached gets treated as the legacy option, and for most new architectures that’s fair. But it has two real advantages that Redis and Valkey don’t match.

Memcached uses a slab allocator that wastes less memory per key than Redis’s internal encoding for certain data shapes. If you are storing millions of small string values and nothing else, Memcached can fit 10-20% more data into the same RAM footprint.

Redis (and Valkey pre-8.0 with I/O threading disabled) processes commands in a single thread. Memcached uses multiple threads and scales linearly with CPU cores. On a cache.r7g.4xlarge with 16 vCPUs, Memcached can use all of them. Redis will saturate one core and then depend on I/O threading for partial relief.

Choose Memcached if you have a pure string caching workload with no need for persistence, pub/sub, Lua scripting, or complex data types, and you are running on large multi-core instances where multi-threading provides measurable benefit. For everything else, Valkey.
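The rule of thumb above can be written down as a tiny decision helper. Everything here is illustrative: the `CacheWorkload` fields, the function name, and especially the `multicore_threshold` default of 8 vCPUs are assumptions, not AWS guidance. Tune the threshold to whatever "large multi-core instance" means for your fleet.

```python
from dataclasses import dataclass


@dataclass
class CacheWorkload:
    strings_only: bool              # plain key -> string values, nothing else
    needs_persistence: bool = False
    needs_pubsub: bool = False
    needs_scripting: bool = False   # Lua / EVAL
    vcpus_per_node: int = 2


def suggest_engine(workload: CacheWorkload, multicore_threshold: int = 8) -> str:
    """Return 'memcached' only for pure string caches on large multi-core nodes."""
    needs_rich_features = (
        not workload.strings_only
        or workload.needs_persistence
        or workload.needs_pubsub
        or workload.needs_scripting
    )
    if needs_rich_features:
        return "valkey"
    if workload.vcpus_per_node >= multicore_threshold:
        return "memcached"
    return "valkey"
```

A pure string cache on a 16-vCPU node comes back as `"memcached"`; adding any requirement for persistence, pub/sub, or scripting flips the answer to `"valkey"`.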

Sizing guide: r7g nodes in 2026

The current recommended generation for ElastiCache is the r7g family (Graviton3). Compared to r6g:

- 15-20% better price-performance

- Up to 25Gbps network bandwidth on larger sizes

- Available in the same instance families

cache.r7g.large (13.07 GiB RAM, 2 vCPUs) is the sweet spot for most production web applications. It provides enough memory for session data, query result caches, and rate-limiting counters for applications handling up to ~50K concurrent users, with headroom to run replicas.

Typical progression:

- cache.t4g.medium (3.09 GiB): dev/staging, small apps under 1,000 concurrent sessions (the r7g family starts at large, so the smallest tier is a burstable t4g node)

- cache.r7g.large (13.07 GiB): most production web apps, starting point for Redis/Valkey

- cache.r7g.xlarge (26.32 GiB): larger session stores, gaming leaderboards, ad-tech

- cache.r7g.2xlarge (52.82 GiB): high-throughput applications, ML feature stores

For production deployments, run at minimum 1 primary + 1 replica in a Multi-AZ configuration. The replica adds no latency to read operations (reads still go to the primary by default unless you route them explicitly), protects against AZ failure, and gives you a failover target in under 60 seconds.
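As a rough sketch of that minimum topology, the helper below builds the arguments for boto3's `create_replication_group` call for a cluster-mode-disabled group. The helper name and defaults are made up for illustration. A real deployment also needs networking parameters (subnet group, security groups) that depend on your VPC and are omitted here.

```python
def multi_az_replication_group_params(
    group_id: str,
    node_type: str = "cache.r7g.large",
    replicas: int = 1,
) -> dict:
    """Build create_replication_group kwargs for 1 primary + N replicas."""
    if replicas < 1:
        raise ValueError("Multi-AZ failover needs at least one replica")
    return {
        "ReplicationGroupId": group_id,
        "ReplicationGroupDescription": f"{group_id}: 1 primary + {replicas} replica(s)",
        "Engine": "valkey",
        "CacheNodeType": node_type,
        "NumCacheClusters": 1 + replicas,   # primary plus replicas
        "AutomaticFailoverEnabled": True,   # promote a replica if the primary fails
        "MultiAZEnabled": True,             # place nodes in different AZs
        "TransitEncryptionEnabled": True,   # matches ssl=True on the client side
    }
```

With boto3 installed and credentials configured, the result would be passed as `boto3.client("elasticache").create_replication_group(**params)`.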

Reserved instances on r7g provide roughly 30% savings over on-demand for 1-year terms and 50%+ for 3-year. If your cache workload is predictable, reserved capacity for the baseline and on-demand for overflow is a standard pattern covered in AWS FinOps. If ElastiCache sits in front of an RDS PostgreSQL backend, blue-green deployments on RDS PostgreSQL let you perform major version upgrades with zero downtime — the cache layer keeps serving requests while the switchover happens.

Real use case: session caching for a web app

This is the most common ElastiCache workload. The pattern: store user sessions keyed by session ID with a TTL that matches your application’s timeout.

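```python
import json
import os

import redis

# Connect to ElastiCache Valkey cluster
client = redis.Redis(
    host=os.environ["ELASTICACHE_ENDPOINT"],
    port=6379,
    ssl=True,  # in-transit encryption
    # Certificate verification stays at redis-py's default ("required").
    # ElastiCache certificates chain to Amazon Trust Services, which the
    # system CA bundle normally includes, so there is no need to disable it.
    decode_responses=True,
)

SESSION_TTL = 3600  # 1 hour in seconds


def get_session(session_id: str) -> dict | None:
    """Return the session dict, or None if it is missing or expired."""
    data = client.get(f"session:{session_id}")
    if data is None:
        return None
    client.expire(f"session:{session_id}", SESSION_TTL)  # sliding TTL
    return json.loads(data)


def set_session(session_id: str, session_data: dict) -> None:
    """Store the session as JSON with a fresh TTL."""
    client.setex(
        f"session:{session_id}",
        SESSION_TTL,
        json.dumps(session_data),
    )


def delete_session(session_id: str) -> None:
    """Remove the session, for example on logout."""
    client.delete(f"session:{session_id}")
```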

A few things worth noting here:

The ssl=True flag is non-negotiable for ElastiCache clusters not inside a tightly controlled private subnet. In-transit encryption adds negligible latency from within the same VPC.

The EXPIRE call on reads implements a sliding TTL — the session stays alive as long as the user is active, rather than expiring at a fixed time after creation. This is usually what you want for web sessions.
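If the extra round trip for EXPIRE matters, the GETEX command (Redis 6.2+ and Valkey) reads the value and resets the TTL in a single call. The following is a minimal sketch, assuming a redis-py client that exposes `getex` and was created with `decode_responses=True`. The function name `get_session_sliding` is made up here, and the client is passed in as an argument so the function is easy to exercise with a stub.

```python
from __future__ import annotations

import json

SESSION_TTL = 3600  # 1 hour in seconds


def get_session_sliding(client, session_id: str, ttl: int = SESSION_TTL) -> dict | None:
    """Fetch a session and refresh its TTL with one GETEX command."""
    # GETEX returns the value and sets a new expiry in the same operation,
    # so a session cannot expire between the read and the refresh.
    data = client.getex(f"session:{session_id}", ex=ttl)
    if data is None:
        return None
    return json.loads(data)
```

The behavior matches the GET plus EXPIRE version above; the difference is one network round trip instead of two on every session read.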

Key namespacing with the session: prefix matters when a single cluster serves multiple use cases. Separate prefixes for sessions, API response caches, and rate limiters make it easy to flush one category without affecting the others.
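Flushing one category by prefix could look roughly like the sketch below. It relies on redis-py's `scan_iter` and `unlink`, iterating incrementally instead of using the blocking KEYS command. The function name and batch size are illustrative. It assumes a cluster-mode-disabled endpoint, since multi-key UNLINK across hash slots is not valid on a cluster-mode-enabled deployment, and it assumes the prefix contains no glob characters.

```python
def delete_by_prefix(client, prefix: str, batch_size: int = 500) -> int:
    """Delete every key that starts with `prefix`; return how many were removed."""
    deleted = 0
    batch = []
    # SCAN walks the keyspace in small steps, so it does not stall the server.
    for key in client.scan_iter(match=f"{prefix}*", count=batch_size):
        batch.append(key)
        if len(batch) >= batch_size:
            deleted += client.unlink(*batch)  # UNLINK frees memory asynchronously
            batch.clear()
    if batch:
        deleted += client.unlink(*batch)
    return deleted
```

Calling `delete_by_prefix(client, "session:")` would drop all sessions while leaving rate-limiter and response-cache keys untouched.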

For a horizontally scaled application, all app instances connect to the same ElastiCache endpoint and share session state — this is what makes ElastiCache a prerequisite for stateless scaling of web servers.

Real use case: leaderboard with sorted sets

Sorted sets are one of the features that make Redis and Valkey genuinely different from Memcached. A leaderboard storing up to 10 million players with O(log N) insert and rank lookup:

```python
# Reuses the `client` created in the session example above.
LEADERBOARD_KEY = "game:leaderboard:weekly"


def submit_score(player_id: str, score: float) -> None:
    # ZADD with GT flag: only update if new score is higher
    client.zadd(LEADERBOARD_KEY, {player_id: score}, gt=True)


def get_rank(player_id: str) -> int | None:
    # ZREVRANK: rank from highest to lowest, 0-indexed
    rank = client.zrevrank(LEADERBOARD_KEY, player_id)
    return rank + 1 if rank is not None else None  # 1-indexed


def get_top_players(count: int = 10) -> list[tuple[str, float]]:
    # Returns list of (player_id, score) tuples
    return client.zrevrange(LEADERBOARD_KEY, 0, count - 1, withscores=True)


def get_players_around(player_id: str, window: int = 5) -> list[tuple[str, float]]:
    rank = client.zrevrank(LEADERBOARD_KEY, player_id)
    if rank is None:
        return []
    start = max(0, rank - window)
    end = rank + window
    return client.zrevrange(LEADERBOARD_KEY, start, end, withscores=True)
```

The gt=True flag on ZADD (available in Redis 6.2+ and Valkey) prevents a score regression when a later submission is lower than the stored score. Without it, a player who already has 9,500 points could accidentally have their score overwritten with 8,200 from an older submission.

At 10 million members, this sorted set occupies roughly 600-700 MB of RAM on a cache.r7g.large. That’s enough for a single weekly leaderboard plus the session data mentioned above. If you need multiple leaderboards (daily, weekly, all-time, per-region), plan for it: either a larger node or multiple keys distributed across a cluster-mode deployment.
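One way to keep multiple leaderboards manageable is to give each one its own key, so that in a cluster-mode deployment they hash to different slots and can live on different shards. The naming scheme below is a hypothetical convention, not something the code above requires: it extends the `game:leaderboard:weekly` key with an optional region and a date-based suffix.

```python
from __future__ import annotations

from datetime import date


def leaderboard_key(period: str, day: date, region: str | None = None) -> str:
    """Build a per-period (and optionally per-region) sorted-set key."""
    if period == "daily":
        suffix = day.isoformat()                 # e.g. 2026-04-15
    elif period == "weekly":
        iso_year, iso_week, _ = day.isocalendar()
        suffix = f"{iso_year}-W{iso_week:02d}"   # ISO week number
    elif period == "all-time":
        suffix = None                            # one key that never rotates
    else:
        raise ValueError(f"unknown period: {period!r}")

    parts = ["game", "leaderboard", period]
    if region:
        parts.append(region)
    if suffix:
        parts.append(suffix)
    return ":".join(parts)
```

Rotating keys by date also means old leaderboards can be given a TTL and left to expire, rather than being trimmed in place.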

DynamoDB can handle similar patterns, but the two approaches diverge significantly in both cost and capability at scale.

Cost comparison: Serverless vs reserved r7g.large

To make the tradeoffs concrete, here are two scenarios:

Scenario A — Startup with unpredictable traffic (0-5,000 ops/sec)

Monthly ops: ~50M (assuming 19 ops/sec average, spikes to 5K)

Data stored: 2 GB

Serverless:

- ECPUs: 50M × $0.0034 = $170

- Storage: 2 GB × 720h × $0.125 = $180

- Total: $350/month

Provisioned cache.r7g.large on-demand (1 primary + 1 replica):

- 2 × $0.166/hr × 720h = $239/month

Serverless loses here even with unpredictable traffic, because the average throughput is low enough that the per-ECPU cost compounds.

Scenario B — Established app with bursty traffic (average 1,000 ops/sec, spikes to 50,000)

To handle the spike, you need at least cache.r7g.xlarge with 2 replicas provisioned = 3 × $0.332/hr × 720h = $717/month.

Monthly ops: ~2.6B

Data stored: 8 GB

Serverless:

- ECPUs: 2.6B × $0.0034 = $8,840

- Storage: 8 GB × 720h × $0.125 = $720

- Total: $9,560/month

Provisioned wins by a factor of 13x.

The honest read here: ElastiCache Serverless is mainly a convenience product. It removes operational overhead and eliminates capacity planning for environments where you’re willing to pay for that simplicity. For cost-optimized production workloads with consistent or high throughput, provisioned nodes with reserved pricing beat Serverless decisively. I’d only reach for Serverless when the team’s time is worth more than the bill difference.

If you are already thinking about cost optimization across your AWS stack, the AWS FinOps well-architected framework covers reserved capacity strategy, rightsizing, and savings plans in detail.
